The rise of artificial intelligence across enterprise workflows, customer experiences, and national-security systems makes regulation unavoidable. For US-based engineering leaders, AI regulation is no longer a distant policy issue—it’s a strategic concern that will shape how companies design, deploy, and monetize AI systems going forward. This article shows where the major regulatory lines are being drawn, how they affect engineering and product teams, and how companies can turn compliance into a competitive edge without undermining velocity.

Why Regulation Matters—And Why Now

Adoption of artificial intelligence (AI) across enterprises surged in 2024, but only a minority of companies have realized meaningful returns. According to a 2024 study by Boston Consulting Group (BCG), 74% of companies with AI programs are still struggling to achieve and scale value; only 26% have moved beyond proofs of concept to measurable business impact.

That gap reflects more than technical challenges. It exposes a deeper issue: many companies built AI in ad hoc ways, without governance or accountability, which makes it harder to bring those systems in line with new rules. As regulatory pressure grows, especially for high-impact AI applications, those informal practices will become liabilities.

Key business risks drawing senior leaders’ attention:

- Compliance risk. New legislation imposes obligations, with penalties for non-compliance.

- Market access. For companies operating globally, meeting regional regulatory requirements becomes a prerequisite, especially in jurisdictions such as the EU.

- Product design constraints. AI features deemed “high-risk” may face restrictions or require additional safeguards.

- Brand and reputation. Public trust in AI remains fragile, and regulatory violations can cause lasting damage as artificial intelligence systems become more embedded in daily life.

In other words, regulation isn’t just a cost; it is becoming both a baseline requirement and a competitive differentiator.

The Global Regulatory Landscape

The EU AI Act

The European Union AI Act entered into force on August 1, 2024. It adopts a risk-based classification of AI systems and applies obligations accordingly—from minimal requirements for low-risk tools to strict governance and transparency demands for high-risk systems.
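To make the risk-based approach concrete, here is a minimal Python sketch of how a team might tag internal use cases with risk tiers and take the most restrictive one per system. The four tier names follow the Act's commonly described structure (unacceptable, high, limited, minimal), but the use-case labels and the mapping are hypothetical examples, not a legal classification.

```python
from enum import Enum


class RiskTier(Enum):
    # Ordered from least to most restrictive (EU AI Act-style tiers).
    MINIMAL = 1
    LIMITED = 2
    HIGH = 3
    UNACCEPTABLE = 4


# Hypothetical mapping from internal use-case labels to tiers. Real
# classification requires legal review of the Act's prohibitions and annexes.
USE_CASE_TIERS = {
    "spam_filter": RiskTier.MINIMAL,
    "customer_chatbot": RiskTier.LIMITED,
    "credit_scoring": RiskTier.HIGH,
    "resume_screening": RiskTier.HIGH,
    "social_scoring": RiskTier.UNACCEPTABLE,
}


def classify(use_cases: list[str]) -> RiskTier:
    """Return the most restrictive tier among a system's use cases.

    Unknown labels default to HIGH so they get reviewed rather than ignored.
    """
    tiers = [USE_CASE_TIERS.get(u, RiskTier.HIGH) for u in use_cases]
    return max(tiers, key=lambda t: t.value, default=RiskTier.MINIMAL)
```

Defaulting unknown labels to `HIGH` is a deliberate design choice: an unclassified feature gets escalated for review instead of silently being treated as minimal risk.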

Here’s the compliance timeline:

- Feb 2, 2025 – Prohibited practices (unacceptable-risk AI systems) and AI-literacy obligations apply.

- Aug 2, 2025 – Rules governing general-purpose AI (GPAI) models, reporting, confidentiality, and governance apply.

- Aug 2, 2026 – Most remaining provisions apply, including transparency obligations and the oversight and safety requirements for high-risk systems listed in Annex III.

- Aug 2, 2027 – Obligations apply for high-risk AI systems that are products, or safety components of products, covered by the EU product-safety legislation listed in Annex I.
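A release pipeline can check which of these milestones already apply on a given date. The sketch below encodes the four dates listed above; the obligation labels are paraphrases rather than legal text, and `MILESTONES` and `obligations_in_effect` are hypothetical names.

```python
from datetime import date

# Application dates from the timeline above; labels are paraphrased.
MILESTONES = [
    (date(2025, 2, 2), "Prohibited practices and AI-literacy obligations"),
    (date(2025, 8, 2), "General-purpose AI model rules and governance"),
    (date(2026, 8, 2), "Most remaining provisions (transparency, oversight, safety)"),
    (date(2027, 8, 2), "Remaining high-risk obligations (Annex I products)"),
]


def obligations_in_effect(on: date) -> list[str]:
    """Return the milestone labels whose application date is on or before `on`."""
    return [label for start, label in MILESTONES if start <= on]
```

For example, `obligations_in_effect(date(2026, 1, 1))` returns the first two labels, which a team could surface in a pre-launch checklist.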

For high-risk AI systems, such as those used for biometric identification, credit scoring, employment screening, or management of critical infrastructure, requirements include documentation, human oversight, data governance, traceability, robustness testing, and explainability.
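One way to track these requirements is a per-system gap check. In this sketch the control names simply mirror the requirements named in the paragraph above, and `HIGH_RISK_CONTROLS` and `missing_controls` are hypothetical names. Treating each control as a yes/no flag is a simplification, since real evidence takes the form of documents, test reports, and logs.

```python
# Control areas taken from the high-risk requirements discussed above.
# Each is a yes/no flag here; in practice each maps to concrete evidence.
HIGH_RISK_CONTROLS = frozenset({
    "documentation",
    "human_oversight",
    "data_governance",
    "traceability",
    "robustness_testing",
    "explainability",
})


def missing_controls(implemented: set[str]) -> list[str]:
    """Return required control areas with no recorded evidence, sorted for stable reports."""
    return sorted(HIGH_RISK_CONTROLS - implemented)
```

For a system that so far has only documentation and traceability, `missing_controls({"documentation", "traceability"})` returns `['data_governance', 'explainability', 'human_oversight', 'robustness_testing']`.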

The U.S. Picture: Frameworks, Not (Yet) a Federal AI Act

At the federal level, the U.S. does not have comprehensive AI-specific legislation. But regulatory momentum is rising:

- The National Institute of Standards and Technology (NIST) publishes the AI Risk Management Framework (AI RMF), which many organizations treat as a de facto standard.

- The White House Office of Science and Technology Policy’s Blueprint for an AI Bill of Rights (2022), a non-binding document, outlines principles for the safe and transparent deployment of AI applications.

- Federal agencies, including those that enforce privacy and consumer-protection laws, are increasingly scrutinizing biased, discriminatory, or deceptive uses of AI.

On top of that, state-level laws have begun filling the gaps. For example:

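- In 2024, Colorado enacted the Colorado Artificial Intelligence Act, widely described as the first comprehensive U.S. state law on high-risk AI systems; among other duties, it requires disclosure when consumers interact with an AI system.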

- Other states, including California and Utah, are considering or have enacted laws focused on bias, fairness, and transparency in automated decision systems.

That patchwork complicates compliance for companies deploying AI across multiple states. Many industry leaders have called for a federal moratorium on state AI laws or a unified national standard to avoid conflicting rules.

International Standards and Emerging Norms

Beyond laws, companies should watch standards and principles set by international organizations. For example:

- The Organisation for Economic Co‑operation and Development (OECD) promotes AI principles around human rights, transparency, and accountability.

- Voluntary guidance from standards bodies, such as IEEE’s Ethically Aligned Design, influences expectations from regulators and customers alike.

These frameworks often shape how regulators interpret formal rules and how AI legislation evolves, and they offer a roadmap for “responsible AI” that goes beyond minimum compliance.

What This Means for Engineering and Product Leaders

Regulation may feel abstract—but its effects penetrate deeply into delivery cycles, architecture decisions, and governance. Here are some of the most important implications for engineering teams.

High-Risk Use Cases Need Careful Planning

Any AI application likely to be classified as high-risk, such as facial recognition, credit scoring, employment-related decision tools, other automated decision systems, or systems affecting public safety, faces growing scrutiny under both the EU AI Act and emerging state laws. These applications carry significantly higher compliance overhead: documentation, audits, human-in-the-loop review, bias testing, explainability, data governance, and traceability.
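For bias testing in hiring-related tools, one widely used screening heuristic in the U.S. is the "four-fifths rule" from the federal Uniform Guidelines on Employee Selection Procedures: a group whose selection rate is below 80% of the highest group's rate is flagged for possible adverse impact. The sketch below assumes you already have per-group selection counts; the function names are hypothetical, and passing this check does not by itself establish compliance with any law discussed here.

```python
def selection_rate(selected: int, applicants: int) -> float:
    """Fraction of a group's applicants who received the favorable outcome."""
    if applicants <= 0:
        raise ValueError("applicants must be positive")
    return selected / applicants


def adverse_impact_ratio(rates: dict[str, float]) -> dict[str, float]:
    """Ratio of each group's selection rate to the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        raise ValueError("no group has a positive selection rate")
    return {group: rate / best for group, rate in rates.items()}


def flag_groups(rates: dict[str, float], threshold: float = 0.8) -> list[str]:
    """Groups whose ratio falls below the four-fifths (0.8) threshold."""
    ratios = adverse_impact_ratio(rates)
    return sorted(g for g, r in ratios.items() if r < threshold)
```

With made-up counts of 48 of 100 applicants selected in `group_a` and 30 of 100 in `group_b`, the ratio for `group_b` is 0.30 / 0.48 = 0.625, so `flag_groups` returns `['group_b']`.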

Even a U.S. company with no EU presence can fall under the EU AI Act if the output of an AI system it provides or uses is used in the EU, including when that system is built on third-party AI tools.
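A product team can turn this into a coarse screening flag that decides when to escalate to legal review. This is a deliberate simplification under stated assumptions: the three boolean fields and the function name are hypothetical, and the Act's actual scope rules include exclusions (for example, for military and certain research uses) that this code ignores.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Deployment:
    # Hypothetical screening fields; real scoping needs legal review.
    placed_on_eu_market: bool  # system offered or put into service in the EU
    deployer_in_eu: bool       # the organization using the system is established in the EU
    output_used_in_eu: bool    # the system's outputs are used in the EU


def may_be_in_eu_scope(d: Deployment) -> bool:
    """Coarse flag: True means 'escalate to legal review', not 'definitely in scope'."""
    return d.placed_on_eu_market or d.deployer_in_eu or d.output_used_in_eu
```

A U.S.-only company whose chatbot answers EU customers would be described as `Deployment(False, False, True)`, which the sketch flags for review.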

Data and MLOps Pipelines Must Be Built with Governance in Mind

AI systems rarely operate in isolation. Data pipelines, training data, model retraining, version control, logging, and monitoring all need to meet compliance standards, both to support responsible development at scale and to hold up if regulators audit your systems.
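For training-data provenance, a lightweight starting point is a manifest that records a content hash for every input file alongside where the data came from. This sketch uses only the Python standard library; the manifest fields (`source`, `license`, `files`) are a hypothetical schema, not a format any regulation prescribes.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks (1 MiB by default) so large files need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(files: list[Path], source: str, license_note: str) -> dict:
    """Describe one training snapshot: when it was taken, where it came from, and file hashes."""
    return {
        "created_at": datetime.now(timezone.utc).isoformat(),
        "source": source,         # hypothetical field: upstream dataset or vendor
        "license": license_note,  # hypothetical field: usage terms as recorded by the team
        "files": {str(p): sha256_of(p) for p in sorted(files)},
    }


def write_manifest(manifest: dict, out: Path) -> None:
    """Store the manifest as stable, human-readable JSON next to the model artifacts."""
    out.write_text(json.dumps(manifest, indent=2, sort_keys=True))
```

Re-hashing the files later and comparing against the stored manifest shows whether the data behind a model version has changed.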

Expect increased scrutiny on:

- Data provenance and training-data governance (especially for generative AI and large models).

- Security and robustness of models used in sensitive areas.

- Logging of model inputs and outputs, especially for auditability.

- Human review workflows and fallback processes when AI decisions affect people.
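For the logging item, one common technique is a hash-chained, append-only log: each record stores the SHA-256 hash of the previous record, so silently editing history breaks the chain. The sketch below keeps records in memory and assumes inputs and outputs are JSON-serializable; `AuditLog` is a hypothetical class, and a production system would also persist records and add timestamps and access controls.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(body: dict) -> str:
    # sort_keys makes the serialization, and therefore the hash, deterministic.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class AuditLog:
    """Append-only log where each record commits to the previous record's hash.

    Tamper-evident, not tamper-proof: anyone who can rewrite the whole log
    can recompute the chain, so the latest hash should be stored elsewhere too.
    """
    records: list[dict] = field(default_factory=list)

    def append(self, model_version: str, inputs: dict, output: object) -> str:
        prev = self.records[-1]["hash"] if self.records else GENESIS
        body = {"model_version": model_version, "inputs": inputs,
                "output": output, "prev": prev}
        digest = _digest(body)
        self.records.append({**body, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute every hash and link; False means a record was altered or removed."""
        prev = GENESIS
        for rec in self.records:
            body = {k: v for k, v in rec.items() if k != "hash"}
            if body["prev"] != prev or rec["hash"] != _digest(body):
                return False
            prev = rec["hash"]
        return True
```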

Cross-Functional Governance Becomes a Business Necessity

AI compliance isn’t just a technical issue. It demands collaboration between engineering, legal, compliance, product, and operations. Building a governance framework early—before AI features roll out—reduces risk and speeds up compliance.

That means:

- Giving compliance and risk teams a seat at the planning table.

- Defining ethical principles around AI use.

- Creating standard operating procedures (SOPs) for high-risk AI deployments.

- Assigning accountability: who signs off on data use, human oversight, model changes, and audits.
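Accountability is easier to enforce when the release process checks it automatically. In this hypothetical sketch, each kind of change maps to the role that must approve it, and a release can ship only when every change it contains has that approval; the role names and change categories are placeholders.

```python
from dataclasses import dataclass, field

# Hypothetical ownership map: which role must approve each kind of change.
REQUIRED_SIGNOFFS = {
    "data_use": "privacy_lead",
    "human_oversight": "product_owner",
    "model_change": "ml_lead",
    "audit": "compliance_lead",
}


@dataclass
class Release:
    change_types: set[str]
    # Maps a change type to the role that actually approved it.
    approvals: dict[str, str] = field(default_factory=dict)

    def missing_signoffs(self) -> dict[str, str]:
        """Return change types still lacking approval from their assigned owner.

        A change type missing from REQUIRED_SIGNOFFS raises KeyError, so gaps in
        the ownership map fail loudly instead of shipping unreviewed.
        """
        return {
            ct: REQUIRED_SIGNOFFS[ct]
            for ct in sorted(self.change_types)
            if self.approvals.get(ct) != REQUIRED_SIGNOFFS[ct]
        }

    def can_ship(self) -> bool:
        return not self.missing_signoffs()
```

Requiring the *assigned* role, not just any approval, is what turns "who signs off" from a policy statement into a check.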

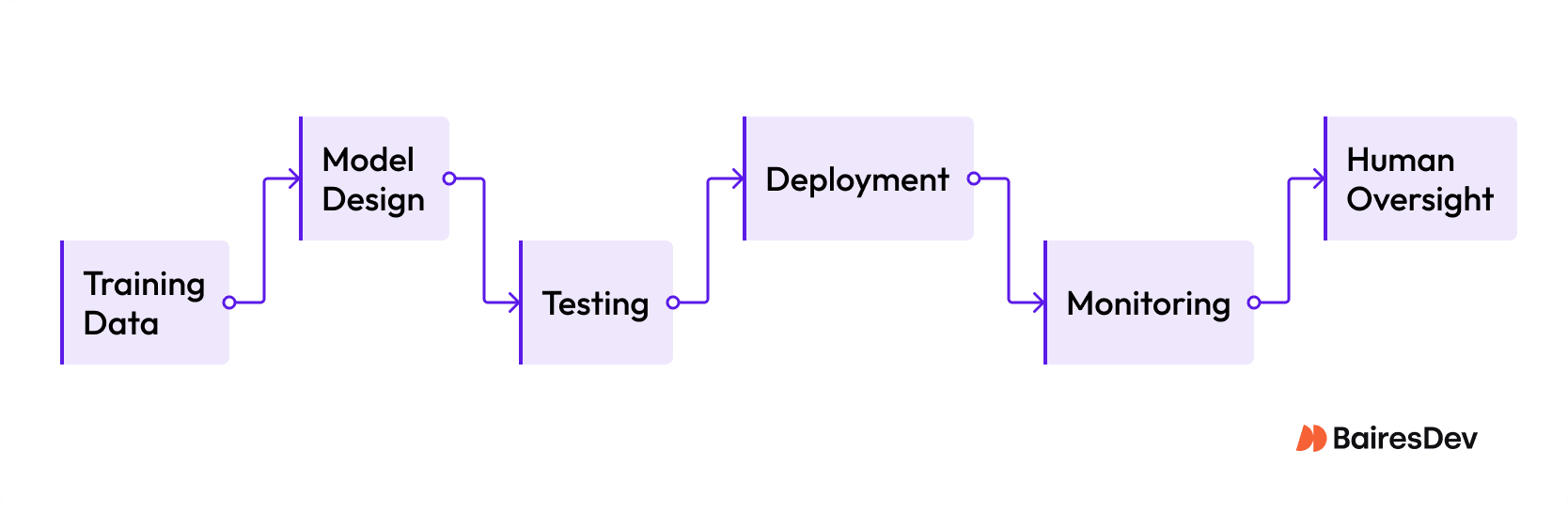

Taken together, these obligations form a governance lifecycle that touches every part of an artificial intelligence system’s development and operation. For engineering leaders, visualizing that lifecycle helps clarify where compliance requirements show up, who owns each activity, and how oversight fits into the broader release process.

Turning Compliance into Competitive Advantage

Rather than viewing regulation as a drag on velocity, companies that build compliance scaffolding now can gain an advantage in several ways:

- Faster access to regulated markets. Firms equipped to meet EU rules from day one can avoid months or quarters of rework when expanding internationally.

- Better risk management. Documented, well-governed AI systems are less exposed to regulatory, reputational, and legal risk.

- Stronger customer trust. Ethical and transparent AI applications, especially in sensitive domains such as finance, healthcare, hiring, or security, become a selling point.

- More scalable infrastructure. Governance-ready architecture makes it easier to audit and scale AI across products, and to integrate new automated systems without repeated rewrites.

What Leading Organizations Are Doing Differently

Companies that succeed with AI at scale tend to invest disproportionately in people and processes rather than just algorithms. According to BCG’s data, “AI leaders” allocate roughly 70% of their resources to people and processes, 20% to technology and data, and only 10% to algorithms, a reversal of the “tech-first” mindset that dominates early AI adoption.

In regulated or high-stakes industries (healthcare, finance, security, public services), leading teams embed governance, compliance, and security engineers into core delivery squads, so that meeting evolving compliance requirements is part of delivery rather than an afterthought handled by a separate compliance silo.

Practical Playbook: What Engineering Leaders Should Do Now

Here’s a prioritized set of actions for teams that build or deploy AI—whether internal or customer-facing—especially when some use cases may be considered high risk.

| Phase | Action | Business Impact / Purpose |
| --- | --- | --- |
| Inventory | Map current and planned AI applications; classify by risk (minimal, limited, high). | Enables early identification of areas needing governance or redesign. |
| Governance Setup | Involve compliance, legal, privacy, and security teams from the start; define ethical principles and SOPs. | Avoids costly rework, ensures readiness for audits, and reduces fall-back risk. |
| Data and MLOps Hardening | Build data-governance pipelines; document data provenance; enable logging, versioning, and monitoring. | Ensures traceability and robustness; readies models for certification if needed. |
| Human Oversight and Transparency | Design human-in-the-loop or human-review mechanisms for high-risk decisions; implement explainability where required; disclose AI use to end users when mandated. | Reduces liability, builds trust, and meets transparency obligations. |
| Vendor and Supply-Chain Risk Management | If using third-party AI tools or models, require vendor compliance declarations, audits, and documentation. | Clarifies accountability and avoids blind spots when regulations apply extraterritorially. |
| Testing and Audit Readiness | Include bias testing, robustness checks, logging, output review, and “what-if” scenario analysis before production deployment. | Surfaces risk early and allows corrective action before public release or before regulators begin enforcement. |
| Ongoing Monitoring and Governance Lifecycle | Assign ownership for compliance oversight; monitor legal developments (state laws, federal bills, international norms); update documentation and model governance with each release. | Keeps AI operations aligned with evolving regulation and preserves market access as laws change. |
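The human-oversight row often comes down to a routing rule: decisions that are high-risk or low-confidence go to a person instead of being applied automatically. A minimal sketch, assuming the model emits a confidence score in [0, 1]; the `Decision` type, the `route` function, and the 0.9 threshold are hypothetical placeholders to be tuned per use case.

```python
from collections import deque
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    subject_id: str
    outcome: str
    confidence: float  # assumed model score in [0, 1]
    high_risk: bool    # set from the inventory's risk classification


def route(decision: Decision, review_queue: deque, threshold: float = 0.9) -> str:
    """Auto-apply only low-risk, high-confidence decisions; queue the rest for a human."""
    if decision.high_risk or decision.confidence < threshold:
        review_queue.append(decision)
        return "human_review"
    return "auto"
```

Routing every high-risk decision to review regardless of confidence is the conservative choice; a team might later relax it for specific, well-tested cases.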

In regulated domains such as healthcare, credit, law enforcement, or public services, some of these steps aren’t optional; they are prerequisites for deploying AI at all.

Comparing EU vs. U.S. and State-Level Approaches

| Feature | EU (AI Act) | U.S. Federal / State-Level |
| --- | --- | --- |
| Regulatory Scope | Comprehensive and binding across all Member States; applies to providers and deployers. | Fragmented: no comprehensive federal AI legislation yet; sector-based and state-level laws. |
| Compliance Deadlines | Phased application from 2025 through 2027 for prohibited practices, general-purpose AI, and high-risk systems. | Ongoing; emerging requirements around bias, transparency, and consumer disclosures, depending on state laws and sector regulators. |
| Enforcement and Penalties | Fines of up to €35 million or 7% of worldwide annual turnover, whichever is higher, for prohibited practices; lower ceilings apply to other violations. | Enforcement via existing regulators (privacy, consumer protection, civil rights); penalties depend on the statute and enforcement body. |
| Governance Model | Requires risk-based classification, documentation, human oversight, traceability, and transparency. | Varies widely; companies often follow frameworks such as the NIST AI RMF voluntarily, or in response to sector-specific regulation or litigation risk. |
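To see how the EU penalty ceilings scale with company size, the sketch below computes the maximum fine for three violation tiers in Article 99 of the AI Act: €35 million or 7% of worldwide annual turnover for prohibited practices, €15 million or 3% for most other obligations, and €7.5 million or 1% for supplying incorrect or misleading information. Larger companies face whichever amount is higher; SMEs and start-ups face whichever is lower. The function name is hypothetical, it returns a ceiling only (actual fines are set case by case), and the separate regime for general-purpose AI model providers is not modeled.

```python
# Article 99 ceilings: (fixed cap in EUR, share of worldwide annual turnover).
TIERS = {
    "prohibited_practice": (35_000_000, 0.07),
    "other_obligation": (15_000_000, 0.03),
    "misleading_information": (7_500_000, 0.01),
}


def max_fine_eur(tier: str, worldwide_turnover_eur: float, is_sme: bool = False) -> float:
    """Upper bound on an administrative fine for the given violation tier."""
    fixed, share = TIERS[tier]
    pct = share * worldwide_turnover_eur
    # SMEs and start-ups: whichever is lower; everyone else: whichever is higher.
    return float(min(fixed, pct) if is_sme else max(fixed, pct))
```

For a company with €1 billion in turnover, 7% is €70 million, which exceeds the €35 million fixed amount, so the ceiling for a prohibited practice is €70 million.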

What Engineering Leaders Should Avoid—Common Pitfalls

- Treating “AI compliance” as just a legal checkbox. Without integrating governance into architecture, you end up retrofitting—costly, risky, and slow.

- Assuming all AI systems are minimal risk. As use cases expand (e.g., face recognition, credit evaluation, medical diagnosis), some may be classified as high-risk under regulation.

- Viewing regulation as a barrier to innovation. That mindset leads to secretive, siloed development; it becomes a liability when law or public scrutiny catches up.

- Ignoring supply-chain and vendor risk. Third-party tools or models may carry their own compliance burdens—or introduce unseen liability.

- Delaying governance until after success. The “move fast, break things” approach rarely survives compliance scrutiny, especially in regulated industries.

Staying Ahead: Building Resilient AI Programs

Regulation is not about slowing technological progress—it’s about enabling safe, scalable, and trustworthy innovation. Companies that embrace that mindset now have a distinct advantage.

Organizations with robust engineering teams, strict governance practices, and a disciplined release process are positioned to launch AI features faster, more reliably, and with less risk—whether for internal systems or customer-facing products.

That readiness pays off when regulations catch up. Even if a law like the EU AI Act doesn’t apply today, having data pipelines, audit logs, and ethical safeguards in place means you’re ready—not scrambling.

In that sense, preparing for AI regulation is like building resiliency: it protects against downside risk while setting the stage for long-term competitiveness and trust.

Positioning for the Next Wave

As we move deeper into 2025 and beyond, regulatory frameworks will evolve, enforcement will ramp up, and expectations around “responsible AI” will become the norm—not the exception.

Smart leaders already recognize this. They see compliance not as a hurdle but as a lever for building scalable, durable AI infrastructure that adapts to new AI advances, survives audits, scales across geographies, and wins the trust of customers and partners.

If your organization is building or scaling AI—especially in regulated or high-stakes domains—now is the moment to embed governance, data hygiene, transparency, and oversight into your pipeline. Those steps aren’t optional anymore. They’re foundational.