Executive Summary: This article argues that AI-assisted development has exposed a fundamental flaw in how software teams manage code review. As AI tools generate code at volumes human reviewers cannot meaningfully process, the solution is to move review upstream, from code to intent. Ayman Shoukry proposes a two-tier model: human review of requirements and specifications, and agent review of generated code. Teams that adopt this model early will hold a significant delivery advantage.

As AI generates more of our code, the definition of what deserves human review must fundamentally change. Without that shift, the entire delivery pipeline will collapse under its own weight.

We have been here before.

When compilers first emerged, they did something radical: they took human-readable instructions and generated machine code nobody was expected to read or review line by line. Engineers had to extend trust to a tool they couldn’t fully verify. They tested the behavior, validated the outcomes, and trusted that the compiler, once proven, would do its job correctly. The industry made that bargain repeatedly, and for the most part it worked.

Now we’re being asked to make it again at a much larger scale, across much more of the codebase, but the industry doesn’t quite know how to respond. The result is a bottleneck hiding in plain sight. Code review has become the choke point in AI-augmented software development, and the practices managing it were designed for a world that no longer exists.

This piece examines where review energy needs to go instead — what moves upstream, what gets delegated to agents, and what both shifts mean for engineering culture.

The Review Bottleneck AI Has Exposed

The promise of AI-assisted development was velocity. Tools like GitHub Copilot, Cursor, and a growing ecosystem of agentic coding systems can now generate not just snippets but entire features, services, and test suites in the time it once took a developer to write a single function signature. The output of a single engineer has multiplied.

The review process hasn’t.

We still route AI-generated code through the same pull request queues, the same human reviewers, the same synchronous approval gates designed for a world where a developer wrote a few hundred lines a day. When an agent can produce thousands of lines per hour, having a human read every one of them isn’t a quality gate; it’s a traffic jam. Senior engineers, already stretched thin, are now being asked to review volumes of code they cannot meaningfully process. Review quality drops. Approvals become rubber stamps. The bottleneck is dangerous precisely because it is invisible: the system still appears to function, PRs move, and approvals happen, but the quality gate has quietly collapsed.

This is a conceptual problem that a better IDE won’t fix. We are applying a decades-old quality model to a modern production reality. To understand where this leads, it helps to look honestly at where we already are.

A Brief History of Trusted Code Generation

The compiler was the original code generator. It transformed human intent into machine instructions, and we came to trust it almost entirely. Few engineers routinely review the object code their C++ compiler emits. That trust was earned through rigorous testing, decades of production hardening, and, in some cases, formal verification.

GUI frameworks and visual designers came next. WinForms, WPF XAML generators, MFC wizards — all produced significant volumes of code that shipped to users without human review. The trust model was vendor accountability: Microsoft and others stood behind their toolchains. If a bug existed in the generated code, it was their bug to fix.

ORMs, scaffolding tools, and code generators continued the pattern. Rails scaffolding, Entity Framework migrations, gRPC stub generators, OpenAPI client generators — vast quantities of production code created by automated tools, largely exempt from line-by-line review. Teams reviewed the schema, the contract, the interface. Not the generated artifact.

In each case, the industry reached the same conclusion through pragmatism. Review the intent, trust the generator for the implementation. The abstraction layer rose, and human review focused on the layer where humans were actually making decisions.

AI code generation is the logical continuation of this pattern. The question is not whether we will eventually extend trust to AI-generated code. We already do, informally and in practice, whether we admit it or not. What needs answering is how we build that trust systematically, and what we redirect our review energy toward when we do.

What that redirection looks like in practice is where the argument gets uncomfortable.

Engineering Teams Need to Review Intent, Not Just Code

The uncomfortable truth at the center of this transition is that the most consequential decisions in software development are not being made in the code. They are being made upstream of it.

What problem are we solving? For whom? Under what constraints? With what tradeoffs? What does “correct” mean in this context? These are requirements-level questions, and they are currently expressed in the worst possible medium for precision: natural language, scattered across Jira tickets, Confluence pages, Slack threads, and verbal conversations that nobody recorded.

When a developer writes code today, they are performing an act of translation. They are converting ambiguous human intent into precise executable logic. The errors in that translation are what code review was designed to catch, but in an AI-augmented world, the AI performs most of that translation. The human’s job is to provide the intent clearly and completely. The errors worth catching have moved upstream.

Today, the most important review a team performs isn’t on a pull request. It’s on the requirements document, the system prompt, the behavioral specification that an agentic system will execute against. That’s already true, and it will only become more so as systems grow more automated. Getting that wrong produces an entire feature built in the wrong direction, at machine speed.

The Requirements IDE: The Tool Engineering Teams Still Need

If requirements are the new source code, they need to be treated with the rigor we give to source code. That means version control, diff tools, linting, testing, and review workflows applied to intent.

The concept isn’t far-fetched. Imagine a collaborative environment where product managers, engineers, and domain experts co-author structured specifications that are precise enough to be executable, yet human-readable enough to be debated and refined. The pieces already exist: structured specification languages, formal modeling tools, AI systems that can reason over natural language requirements and surface inconsistencies. What’s missing is cultural adoption and product integration.

Such an environment would need to support four things:

- Version-controlled intent. Every change to a requirement is tracked, attributed, and reversible. “Why did this feature behave this way?” has an answer that traces back to a specific requirement change, not a mystery commit from six months ago.

- Requirement linting. Automated checks for ambiguity, contradiction, and incompleteness. Much like a compiler warns you that a variable is unused, a requirement linter warns you that a success criterion is unmeasurable or that two requirements conflict.

- Behavioral diffs. When a requirement changes, the system surfaces what downstream behavior that change is expected to affect, before any code is generated or run.

- Requirement review workflows. Structured peer review of the specification before generation begins, rather than after, when changing course is expensive.
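
To make the linting idea concrete, here is a minimal sketch of what a requirement linter might check. Everything in it is an assumption made for illustration: the `Requirement` fields, the `VAGUE_TERMS` word list, and the heuristic that a success criterion without a number is unmeasurable. A real tool would need a much richer model of ambiguity and contradiction.

```python
import re
from dataclasses import dataclass

# Hypothetical word list; real ambiguity detection would go well beyond keywords.
VAGUE_TERMS = {"fast", "quickly", "easy", "user-friendly", "robust", "appropriate", "scalable"}


@dataclass
class Requirement:
    req_id: str             # hypothetical identifier, e.g. "REQ-1"
    text: str               # the requirement statement
    success_criterion: str  # how the team will know it is met


def lint_requirement(req: Requirement) -> list[str]:
    """Return human-readable warnings for one requirement."""
    warnings = []
    # Flag vague terms, much as a compiler flags an unused variable.
    words = set(re.findall(r"[a-z][a-z-]*", req.text.lower()))
    for term in sorted(words & VAGUE_TERMS):
        warnings.append(f"{req.req_id}: vague term '{term}'")
    # Crude heuristic (an assumption): a criterion with no number is unmeasurable.
    if not re.search(r"\d", req.success_criterion):
        warnings.append(f"{req.req_id}: success criterion has no measurable threshold")
    return warnings
```

Under these assumptions, a requirement such as "Search must be fast and user-friendly" with the criterion "Users are satisfied" produces three warnings, while one with the criterion "p95 latency under 200 ms" and no vague terms passes cleanly.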

The industry has not yet decided that requirements deserve the engineering discipline we give to code, but it will, because the cost of skipping that step is becoming impossible to ignore.

The requirements layer is where human judgment belongs. What happens downstream of it is a different problem, one that agents are better positioned to handle.

Two Tiers of Review: What Humans Own vs What Agents Handle

The future of code review depends on placing human judgment where it matters most, supported by automated agents operating at a volume and speed humans cannot practically match.

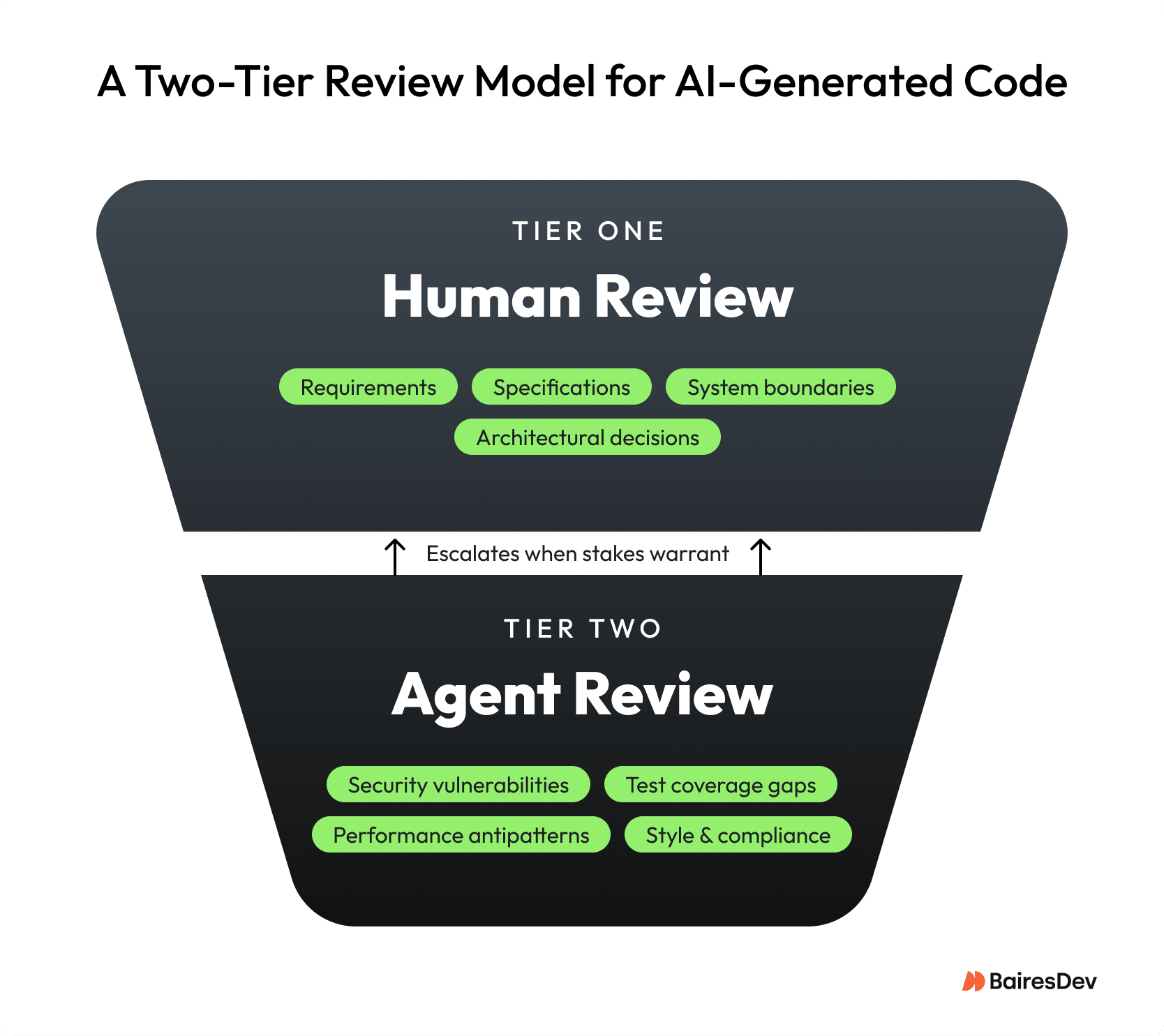

Think of it as a two-tier system.

Tier One: Human Review of Generated Intent

This is where senior engineers, architects, and product leaders spend their most valuable attention. Requirements, specifications, architectural decisions, and system boundaries make up the layer where the consequences of error are highest and where human judgment is irreplaceable. The reviewed artifact may look more like a structured document or a formal specification than a diff, but the discipline of peer review applies fully.

Tier Two: Agent Review of Generated Code

Agentic code reviewers, operating continuously and at scale, handle what humans cannot. They analyze thousands of lines of generated code for security vulnerabilities, performance antipatterns, test coverage gaps, style consistency, and compliance with architectural constraints. They don’t get tired. They don’t rubber-stamp. They apply consistent rules at a speed and volume no human team can match. Their findings surface as prioritized, actionable signals that escalate to human review only when confidence or stakes warrant it.
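
The escalation rule described here (surface a finding to a human only when confidence or stakes warrant it) can be sketched in a few lines. This is an illustration under stated assumptions, not a description of any particular product: the `Finding` fields, the 1-to-5 severity scale, and both thresholds are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    rule: str          # hypothetical rule name, e.g. "sql-injection"
    severity: int      # assumed scale: 1 (cosmetic) to 5 (critical)
    confidence: float  # agent's self-reported confidence, 0.0 to 1.0


def needs_human(f: Finding, min_confidence: float = 0.8, max_auto_severity: int = 3) -> bool:
    """Escalate when stakes are high or the agent is unsure of its own finding."""
    return f.severity > max_auto_severity or f.confidence < min_confidence


def triage(findings: list[Finding]) -> list[Finding]:
    """Return only the findings a human should see, most severe first."""
    escalated = [f for f in findings if needs_human(f)]
    escalated.sort(key=lambda f: (-f.severity, f.confidence))
    return escalated
```

The design choice worth debating is the pair of thresholds: set them too loosely and the human queue fills up again, too tightly and the agent becomes the rubber stamp.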

The trust model for tier-two code follows the compiler precedent. As AI code generators mature, are tested at scale, and accumulate track records, teams will incrementally extend trust to them. The precedent is already established: compilers, ORM scaffolders, and protobuf generators all earned that trust the same way. This will be an earned trust, calibrated to the specific generator, the specific context, and the specific risk tier of the application.

Reorganizing review around intent rather than implementation doesn’t just change the process. It changes what each role in the pipeline is accountable for.

What Changes for Engineers and Product Managers

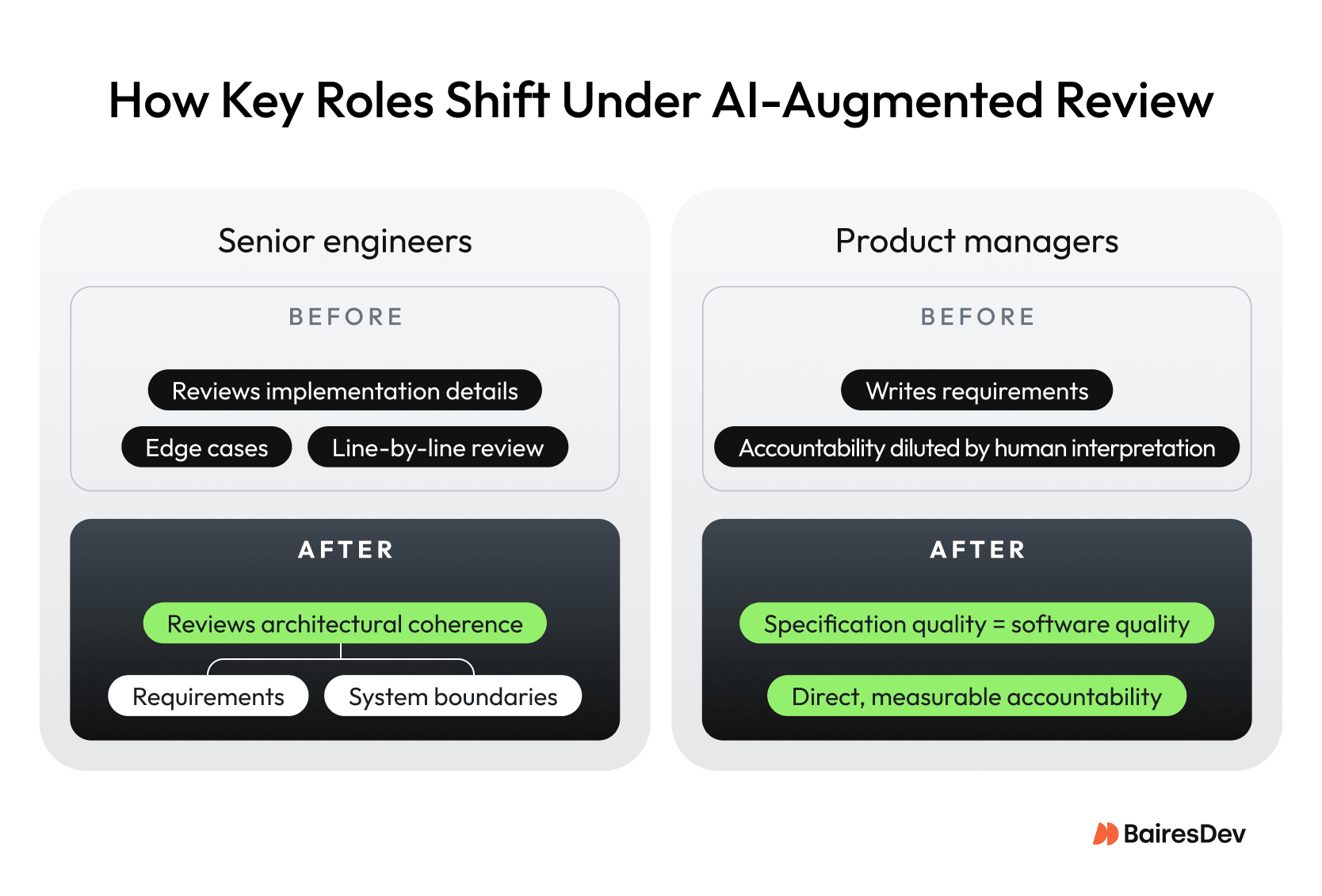

Shifting review upstream redistributes accountability across roles: engineers toward architecture and product managers toward precision.

The role of the senior engineer shifts. Today, a senior engineer’s time in code review is spent reading implementation details — checking whether a junior developer correctly handled an edge case in a sorting algorithm. In the emerging model, that time shifts toward architectural arbitration: reviewing whether a set of requirements is coherent, whether a proposed system boundary makes sense, whether an agent’s architectural choices align with long-term platform strategy. This is, arguably, more valuable work. It also demands broader context and deeper judgment, which means the bar for meaningful senior contribution rises.

The role of the product manager shifts as well. As requirements become executable artifacts that drive generation directly, the precision and quality of product specifications become a direct determinant of software quality. Not in theory, as it has always been, but measurably and immediately. Product managers who write sloppy requirements won’t see their mistakes diluted through a layer of human interpretation. They will see them faithfully executed at scale. This creates real accountability for specification quality that the industry has long avoided.

The definition of “done” changes too. A feature isn’t done when code passes review. It is done when the requirement that generated it has been validated against the original intent, when the generated code has cleared automated review at tier two, and when any human-escalated flags have been resolved. The review gate moves earlier in the pipeline and becomes more continuous throughout it.
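
Expressed as a check, that definition of "done" has three conditions. The sketch below is only an illustration; the `FeatureStatus` type and its field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class FeatureStatus:
    requirement_validated: bool  # requirement checked against the original intent
    agent_review_passed: bool    # generated code cleared tier-two automated review
    open_escalations: int        # human-escalated flags still unresolved

    def blockers(self) -> list[str]:
        """List what still stands between this feature and 'done'."""
        reasons = []
        if not self.requirement_validated:
            reasons.append("requirement not validated against intent")
        if not self.agent_review_passed:
            reasons.append("agent review not passed")
        if self.open_escalations > 0:
            reasons.append(f"{self.open_escalations} escalated flag(s) unresolved")
        return reasons

    def is_done(self) -> bool:
        return not self.blockers()
```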

The Trust Curve for AI-Generated Code

We will not flip a switch and stop reviewing AI-generated code. Trust will be built incrementally, domain by domain, risk tier by risk tier, exactly as it was with compilers and frameworks.

Low-stakes, high-volume code (utility functions, data transformation logic, test boilerplate) will earn autonomy first. The feedback loops are fast, the consequences of error are low, and the patterns are well-understood. Agentic review at tier two will be sufficient.

Higher-stakes code (security-sensitive paths, financial transaction logic, healthcare data handling) will require more deliberate trust-building. It will need formal verification, extensive red-teaming of the generator, and regulatory compliance review. Human review of the generated code itself will remain involved longer, not because humans are better at reading code, but because the accountability structures have not yet evolved to delegate that responsibility.

The interesting zone is the middle. It is the broad mass of business logic that is neither trivial nor safety-critical. This is where the shift will happen fastest, and where organizations that develop mature agent-review pipelines will gain the most competitive advantage. Teams that invest now in agentic review infrastructure — the tooling, the policies, the escalation logic — will ship with confidence at a velocity that teams still routing everything through human PR queues cannot match.
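
One way to picture the policy side of that infrastructure is a mapping from risk tier to review requirements. The sketch below rests on assumptions: the tag names, the three-way split, and in particular the "on_escalation" setting for the middle tier are illustrative choices, not something the trust curve prescribes.

```python
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MIDDLE = "middle"
    HIGH = "high"


# Hypothetical tags a team might attach to a change or a module.
HIGH_RISK_TAGS = {"security", "payments", "healthcare-data"}
LOW_RISK_TAGS = {"utility", "data-transform", "test-boilerplate"}

# Assumed policy: agents always review; human code review varies by tier.
REVIEW_POLICY = {
    RiskTier.LOW: {"agent_review": True, "human_code_review": "none"},
    RiskTier.MIDDLE: {"agent_review": True, "human_code_review": "on_escalation"},
    RiskTier.HIGH: {"agent_review": True, "human_code_review": "always"},
}


def classify(tags: set[str]) -> RiskTier:
    """Any high-risk tag wins; only purely low-risk changes count as LOW."""
    if tags & HIGH_RISK_TAGS:
        return RiskTier.HIGH
    if tags and tags <= LOW_RISK_TAGS:
        return RiskTier.LOW
    return RiskTier.MIDDLE
```

Defaulting untagged or mixed changes to the middle tier is a deliberately conservative choice: a change earns the low tier only when every tag on it is known to be low-stakes.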

What the History of Software Engineering Is Telling Us

The compiler didn’t make software engineers irrelevant. It moved them up the abstraction stack, where they could build things that were previously impossible.

The GUI designer didn’t eliminate the need for thoughtful UI architecture. It eliminated the need to hand-write layout boilerplate, freeing designers to think about interaction rather than coordinate geometry.

Every generation of trusted code generation has followed the same pattern. What was once written by hand becomes a generated artifact. What was once generated becomes a trusted primitive. Human attention moves to the layer above.

We are at the beginning of that transition for application logic itself. The code that an AI agent writes today will, within a decade, be no more reviewed line by line than the output of a C++ compiler is today. What will be reviewed carefully, rigorously, and with deep expertise is the intent that drives it.

The engineers who thrive in that world are not the ones who can read the most code. They are the ones who can think most clearly about what software should do, express that thinking with precision, and evaluate whether a system’s behavior actually matches the intent behind it.

The review isn’t going away. It’s moving upstream. And upstream is exactly where the most important decisions have always been made.

Key Takeaways

- AI-generated code has made traditional line-by-line code review a bottleneck, not a quality gate.

- The most consequential decisions in software development happen upstream of the code, at the requirements level.

- A two-tier review model — humans reviewing intent, agents reviewing code — reflects how trust in code generation has always been built.

- Product managers bear direct accountability for software quality when requirements become executable artifacts.

- Teams that invest in agentic review infrastructure now will ship at a velocity that human-only PR queues cannot match.