Over the past year, we’ve watched AI settle into the everyday reality of software teams. In previous editions of Dev Barometer, developers talked about experimentation, productivity gains, skepticism, and trust. The questions evolved quickly from whether AI would meaningfully change engineering work to how developers could adapt their workflows without compromising quality.

In Q1 2026, that evolution continues and is maturing. In January 2026, we surveyed 1,329 developers across 61 countries; 25% were women and 47% had 8+ years of experience. The signal is clear: confidence is rising. Most developers are comfortable using AI, and most see a clear career upside. Many are delegating routine execution and spending more time reviewing, validating, and shaping what AI produces. As AI has pushed engineers closer to the role of auditor and solution designer, it has also influenced their sense of accountability and the baseline skills they believe now define strong engineering.

This edition also brings a sharper lens to women in tech. The data reveals a nuanced shift. Women developers report greater career agency through AI adoption, even as confidence in the fairness of AI-supported workplace tools lags behind overall sentiment. Together, these findings highlight a broader transformation in how advantage is distributed across teams and what it now takes to thrive in an AI-augmented environment.

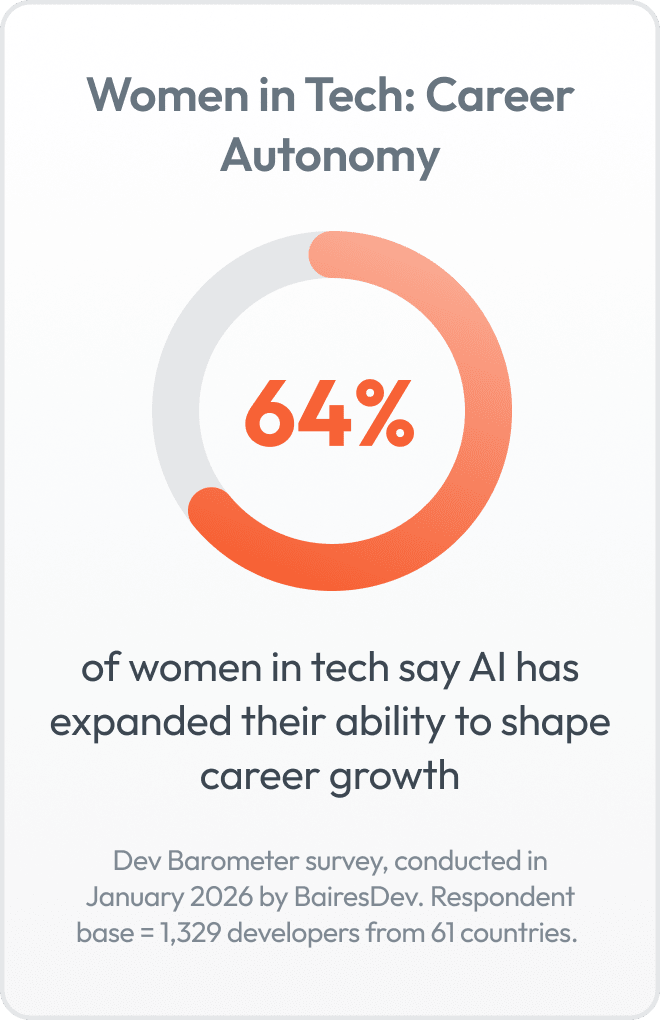

64% of Women Developers Say AI Is Expanding Their Career Agency

Two-thirds of women developers say AI has improved their ability to shape their career direction, and 84% report stronger career momentum linked to AI skills. The professional upside is real and visible in day-to-day work.

For Virginia Moré, a software engineer working on large-scale legacy systems, AI has accelerated how she understands architecture and technical trade-offs. “Before AI, I was focused on implementation. Now I think much more like an architect,” she said. Instead of relying solely on senior mentorship, she uses AI to explore alternatives and test ideas before entering technical discussions. Human validation remains essential, but the path to higher-level reasoning is shorter.

Other women described a similar shift: clearer reasoning, stronger technical arguments, and greater ownership in discussions. That’s what the 64% reflects: career agency here means earlier exposure to architectural thinking and more confident participation in technical decisions.

At the same time, that momentum coexists with caution. While 64% say AI improves their ability to shape their careers, only 56% of women are confident in the gender fairness of AI-supported tools used for tasks such as performance reviews or task allocation, compared to 64% of men.

AI is expanding technical agency. Whether that agency converts into long-term advancement depends on the environment in which it operates. This raises the next question: if individual capability is rising, what ultimately determines who benefits most?

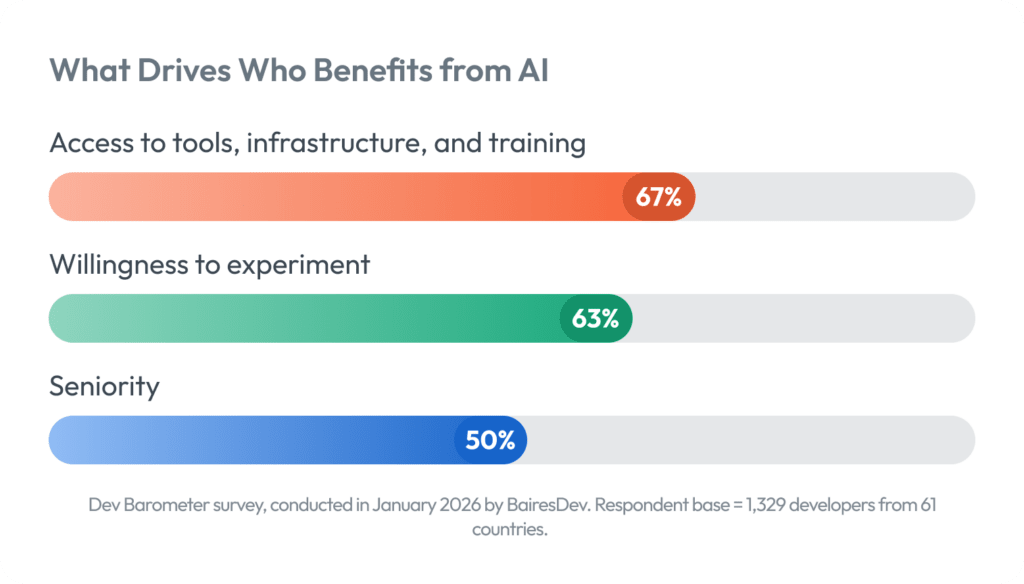

Nearly 7 in 10 Say Access to Tools and Training Matters More Than Seniority

Respondents to the Dev Barometer Q1 2026 rank access to tools, infrastructure, and training as the strongest driver of AI advantage (67%), followed by individual willingness to experiment (63%). Seniority trails at 50%.

Among women, the picture shifts. Access remains the top factor (67%), but organizational culture and team norms (49%) displace seniority (38%) as a key driver of advantage.

Regardless of gender, AI impact is being shaped less by title and more by environment. Developers who have the space, infrastructure, and encouragement to experiment are pulling ahead no matter how many years they’ve been in the field. Engineers describe this as a change in how learning itself happens. Instead of waiting for architectural exposure to come with promotion, AI allows them to simulate higher-level reasoning earlier. But whether that translates into visible advancement depends heavily on team context. Workplace structure is still a priority.

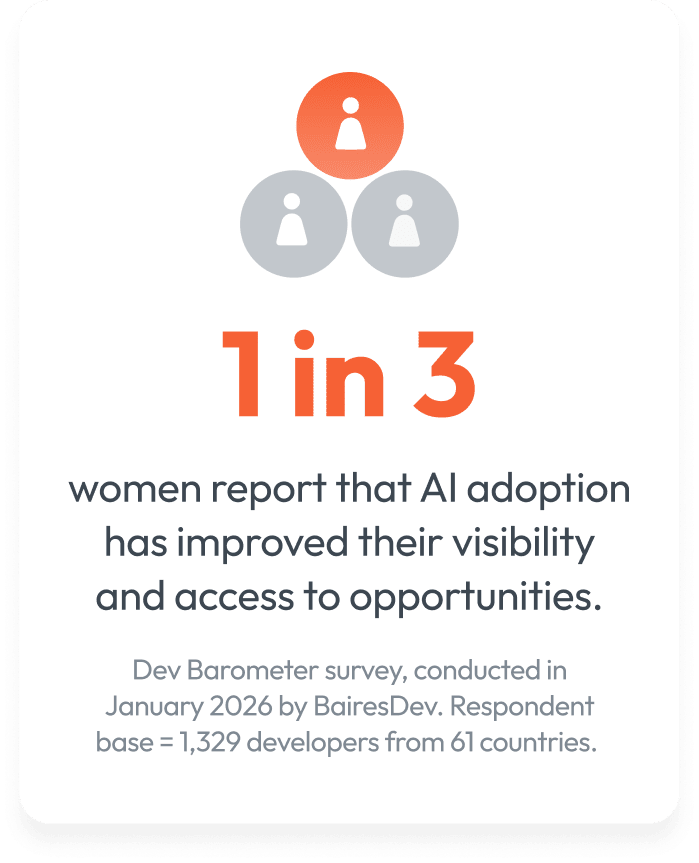

When asked which workplace practices most influence advancement, women point first to structural factors: flexible work policies (36%), transparent promotion criteria (27%), and active sponsorship for high-visibility projects (16%). By contrast, only one in three women (33%) say AI adoption has increased their visibility or access to opportunities, with most describing its impact as neutral or unclear. The signal is consistent: AI may strengthen capability, but advancement still hinges on workplace structure.

Interviews reinforce what the data already suggests: access alone isn’t enough. AI may accelerate learning, but its impact depends on training, clear growth pathways, a team culture that supports experimentation, and the individual curiosity to experiment in the first place.

And as advantage becomes more environmental than hierarchical, responsibility for outcomes is becoming more personal.

Over Half of Developers Say AI Accountability Sits With the Individual

One of the recurring themes from Dev Barometer Q3 2025 was a gap between individual readiness and organizational support. At that time, we reported that companies were still navigating their own AI maturity journeys, experimenting without formal training programs, leaving engineers to adopt and learn on their own.

Fast forward to Q1 2026, and that tension shows up in how accountability is distributed. In this quarter’s survey, 51% of developers say accountability for AI-driven outcomes sits primarily with them. Only 19% view it as a team responsibility, and 11% say ownership remains unclear.

Developers feel confident incorporating AI outputs into their workflows, but when it comes to who is accountable for the results, the final checkpoint still lands squarely with the individual signing off on the work.

Bruno Dantas, one of the engineers we interviewed, described accountability as “multilayered, first with the developer, then with the team.” That layered view reflects how modern engineering often works: collaboration throughout, but clear ownership at the point of delivery.

AI has increased output and accelerated execution, but it has not diluted ownership. In many cases, it has intensified it. Developers are reviewing AI-generated output, validating edge cases, and making judgment calls under delivery pressure. At the same time, governance norms are still evolving. When 11% of respondents say accountability is unclear, it signals that standards and expectations have not fully stabilized across teams. Capability is scaling quickly, but clarity is scaling more gradually.

And that tension becomes most visible when we look at what’s actually limiting developers in practice.

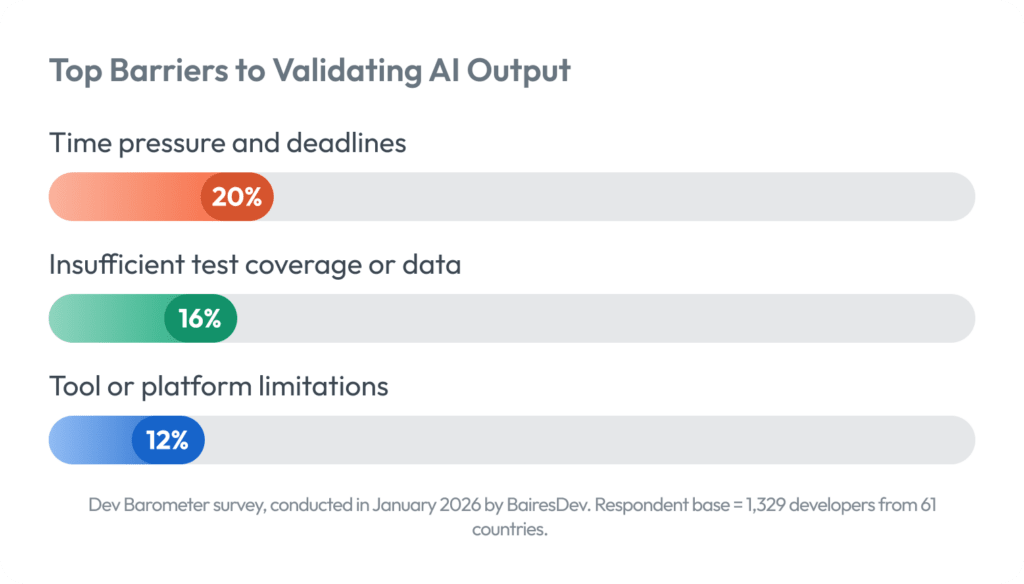

68% Cite Accuracy as the Main Limiter — Time Pressure Tops the Validation Gap

Most respondents (88%) say they feel confident using AI at work, and 58% believe its benefits outweigh the overhead. The rising friction point is output verification.

Among respondents who feel uncertain about using AI, 68% cite accuracy or reliability as the primary limiter. The top barrier to validating AI-generated output is delivery pressure, followed by insufficient test coverage or poor-quality data, and limitations in current tools. Among women, lack of clear benchmarks also emerges as a constraint.

That context helps explain another shift in the data: 56% of engineers identify critical evaluation of AI output as the defining baseline skill for 2026. Engineering work is adjusting accordingly. Routine tasks are increasingly delegated to AI, and what remains firmly human is judgment: reviewing outputs, validating logic, and deciding what reaches production.

Software engineer Bruno Dantas described reviewing AI-generated code in controlled increments, treating it more like advanced autocomplete than autonomous execution. Automated tests remain standard practice, but he noted that clearer standards and additional training would increase confidence at scale.

As AI continues to reshape workflows, the challenge for 2026 is building the time, standards, and training required to validate output as rigorously as teams now generate it.

Conclusion

The data points in the same direction: AI is expanding what developers can do, but environment still determines who benefits. Access, culture, and organizational support matter more than seniority. Accountability is concentrating at the individual level, and output validation is where delivery pressure is most visible.

For women in tech, these dynamics are especially pronounced. Career agency is growing, but whether it translates into long-term advancement depends on the structures around it, such as promotion criteria, visibility mechanisms, and team norms. Capability is rising across the board; the next challenge is building the organizational conditions to match it.

With over 4,000 software professionals and 2.5 million technology talent applicants per year, BairesDev sits at the intersection of these shifts as they happen. Each quarter, the Dev Barometer distills what that vantage point reveals, and the next edition will continue tracking how this evolution unfolds.