Scaling engineering is not about doubling headcount and hoping throughput follows. It is about removing constraints, designing independent teams, and only then adding people where they can actually move the needle.

- Distinguishes system, team, and organizational scalability

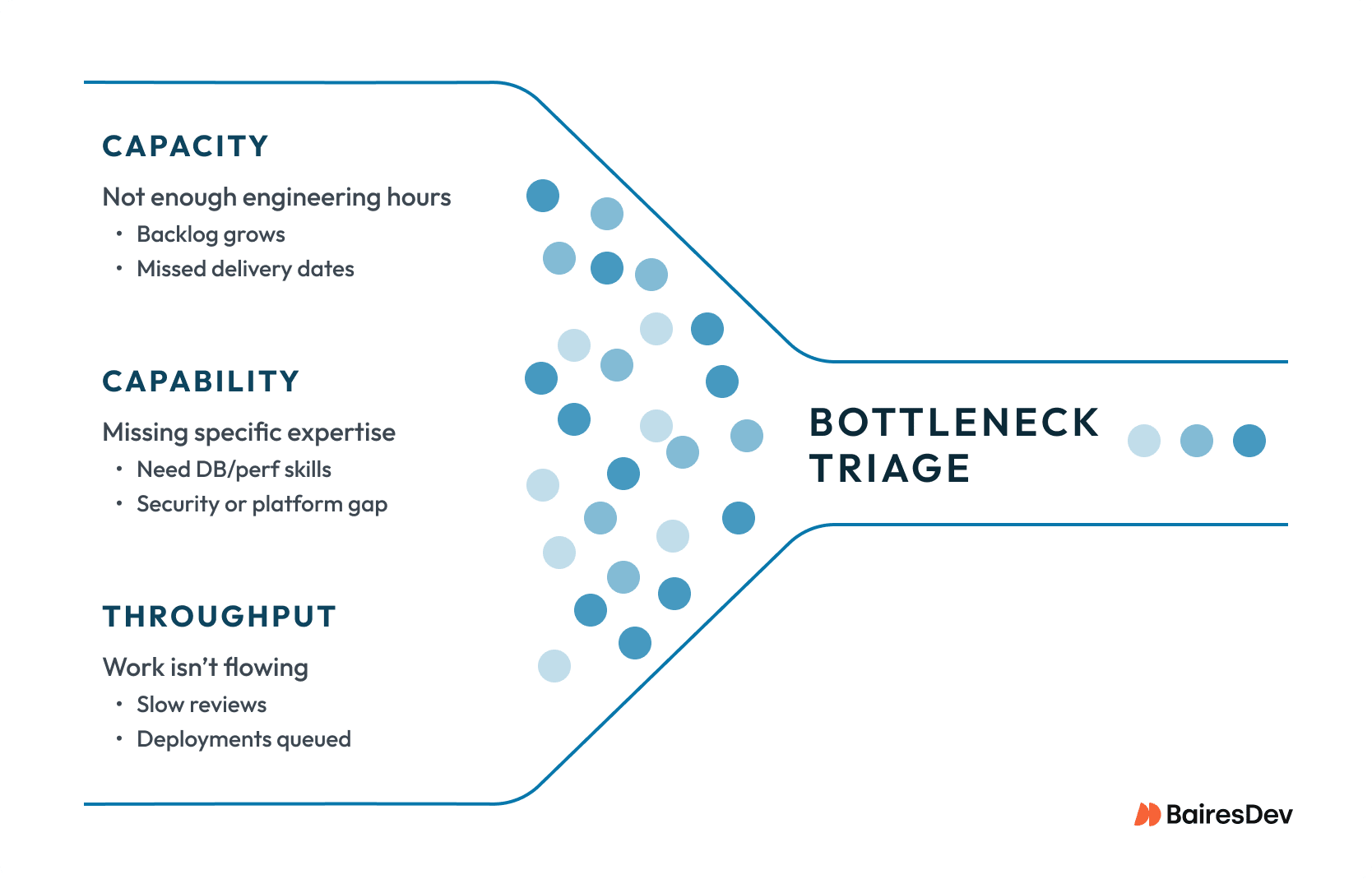

- Separates capacity, capability, and throughput constraints

- Shows how to design small, autonomous pods with clear ownership

- Explains how to integrate nearshore teams as true owners

- Defines guardrails and metrics that prove scaling is working

When the Headcount Myth Meets Reality

You’ve heard the pitch: “We need to ship faster, so let’s double the team.”

More engineers should mean more output. But anyone who’s tried scaling an engineering team knows the math rarely works that way.

Add ten engineers to a thirty-person squad, and you might find yourself shipping slower than before, drowning in coordination overhead.

It isn’t a failure of your engineers. It’s a failure to understand what engineering scalability actually means.

Understanding Engineering Scalability

First, we need clarity on what “scalability” means for an engineering organization. The term operates at three distinct levels, each with different constraints and solutions.

System Scalability

System scalability is what engineers think about first: can the architecture handle increased load? But system scalability also determines how many engineers can productively work on the codebase simultaneously.

A monolith with tight coupling limits parallel development. You might have a hundred brilliant engineers, but if they’re all stepping on each other’s changes and competing for the same deployment pipeline, you’ve hit an architectural ceiling that no hiring will lift.

Team Scalability

Team scalability concerns how effectively a single team functions as it grows. Communication overhead grows non-linearly with team size, and practitioner experience and research commonly point to 7 to 9 members as the point where that overhead starts to dominate.

The math is unforgiving: n people have n(n-1)/2 possible one-to-one communication channels, so a five-person team has ten, a nine-person team has thirty-six, and a fifteen-person team has 105. Each additional channel becomes a potential source of delay, misunderstanding, or coordination failure.
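The channel counts above follow from counting distinct pairs of people. A minimal sketch of that arithmetic, assuming every pair of team members is a potential channel (the function name is illustrative):

```python
def communication_channels(team_size: int) -> int:
    """Distinct one-to-one channels in a team of `team_size` people: n(n-1)/2."""
    if team_size < 0:
        raise ValueError("team_size must be non-negative")
    return team_size * (team_size - 1) // 2


if __name__ == "__main__":
    # Reproduce the figures from the text for 5-, 9-, and 15-person teams.
    for n in (5, 9, 15):
        print(f"{n:>2} people -> {communication_channels(n):>3} channels")
```

Going from 9 to 15 people (a 67% headcount increase) nearly triples the number of channels, which is the quadratic growth the paragraph describes.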

Organizational Scalability

Organizational scalability is where most leaders struggle: how well multiple teams work together, share standards, and maintain a coherent architecture across boundaries. Get this wrong, and you end up with chaos (teams duplicating work and creating integration nightmares) or gridlock (teams perpetually blocked on each other).

The Team Scaling Fallacy

Here’s the uncomfortable truth: engineering productivity isn’t linear with headcount. The relationship looks more like a curve that rises, eventually flattens, and can actually decline. Fred Brooks identified this nearly fifty years ago in The Mythical Man-Month, yet engineering leaders keep rediscovering it the hard way.

I’ve seen this firsthand. A 40-person initiative added 15 engineers, and within months its cycle time had increased by 30%. The new engineers’ quality wasn’t the problem. Unclear ownership boundaries, missing interfaces between teams, and onboarding overhead during a delivery push compounded to produce precisely the outcome the team was trying to avoid. After carving out clearer ownership and fixing the shared bottlenecks (review SLAs, CI time, release flow), cycle time stabilized and output recovered without another hiring wave.

The fallacy manifests predictably. Teams with fuzzy ownership spend more meeting time just figuring out who’s doing what. Code review queues grow as reviewers context-switch between unfamiliar areas. Deployment pipelines become bottlenecks as multiple teams try to ship simultaneously. Nearshore teams get relegated to “task work” because genuine ownership feels too expensive to coordinate.

None of this means you can’t scale engineering teams—you absolutely can. But you can’t do it simply by adding people without addressing the underlying constraints. Adding engineers to a team that lacks clear ownership is like adding cars to a traffic jam.

So, How Do You Scale an Engineering Team?

Effective scaling isn’t a single decision; it’s a sequence of deliberate moves. Start with diagnosis, proceed through team design, then integrate distributed talent. Skip steps or do them out of order, and you’ll likely feed the dysfunction you’re trying to escape.

Clarify the Bottleneck First

Before making scaling decisions, understand what’s actually limiting throughput. The constraint almost always falls into one of three categories.

Capacity

Sometimes the issue is genuine capacity, and you don’t have enough engineering hours for the work ahead. Lack of capacity is the primary scenario in which adding headcount directly addresses the bottleneck, provided the organizational infrastructure supports rapid integration.

Capability

Other times, the constraint is capability. You’re missing specific skills that generalist hiring won’t solve. Adding mid-level back-end engineers won’t help if your bottleneck is a lack of database expertise. The solution is targeted hiring (the quickest fix) or investing in internal training to build capabilities (the slower, long-game option).

Throughput

The third category, and the one we see most often, is throughput: work isn’t flowing efficiently despite adequate capacity. Code reviews take days. Deployments block on manual approvals. Test suites are so slow that they discourage frequent integration. These are process problems that more people won’t solve and will often make worse.

Leaders tend to skip diagnosis and jump to hiring, only to discover six months later that their fundamental constraint was a deployment process requiring approval from one overloaded architect. Twenty new engineers, all now waiting in the same queue.
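The diagnosis order above (rule out throughput problems before reaching for headcount) can be sketched as a small triage function. This is a minimal illustration, not a standard model: the signal names and every threshold below are hypothetical and would need to come from your own tooling and context.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Constraint(Enum):
    CAPACITY = "capacity"
    CAPABILITY = "capability"
    THROUGHPUT = "throughput"


@dataclass
class TeamSignals:
    # Hypothetical inputs; pull them from your own tooling.
    review_wait_hours: float       # median wait for a first code review
    deploy_wait_hours: float       # median wait from "ready" to deployed
    ci_minutes: float              # median CI pipeline duration
    blocked_on_missing_skill: int  # work items blocked on expertise nobody has
    backlog_weeks: float           # prioritized backlog divided by current velocity


# Hypothetical thresholds, for illustration only.
MAX_REVIEW_WAIT_HOURS = 24
MAX_DEPLOY_WAIT_HOURS = 24
MAX_CI_MINUTES = 30
MAX_BACKLOG_WEEKS = 8


def diagnose(s: TeamSignals) -> Optional[Constraint]:
    """Return the most likely constraint, checking throughput first."""
    # Flow problems come first: adding people to a clogged pipeline makes it worse.
    if (s.review_wait_hours > MAX_REVIEW_WAIT_HOURS
            or s.deploy_wait_hours > MAX_DEPLOY_WAIT_HOURS
            or s.ci_minutes > MAX_CI_MINUTES):
        return Constraint.THROUGHPUT
    # Missing skills call for targeted hiring or training, not generalist headcount.
    if s.blocked_on_missing_skill > 0:
        return Constraint.CAPABILITY
    # Only healthy flow plus more work than hours points to a genuine capacity gap.
    if s.backlog_weeks > MAX_BACKLOG_WEEKS:
        return Constraint.CAPACITY
    return None
```

For example, `diagnose(TeamSignals(48, 2, 12, 0, 20))` returns `Constraint.THROUGHPUT`: the two-day review wait is flagged before the large backlog ever suggests hiring.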

Design Team Pods for Independence

Once you understand your constraints, the goal becomes clear: minimize coordination overhead while maximizing each team’s ability to deliver independently.

On pod sizing, the communication math above points to five to nine engineers as the ideal range. Below five, you lack resilience, and a single departure creates a crisis. Above nine, coordination overhead eats into delivery. Ownership boundaries should align with the architecture: each pod needs a coherent slice of the system where it can ship most changes without cross-pod approval. If your architecture doesn’t support this, address that before scaling teams.

Splitting a team pod becomes necessary when its scope exceeds the bounds of coherent ownership: when the team struggles to maintain expertise across its domain, or when different areas change at different rates. Cloning a team pod works when you need more capacity for similar work, because cloned pods inherit the original pod’s practices, which shortens their ramp-up.
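The sizing and split/clone guidance can be condensed into a rough heuristic. This is a minimal sketch under stated assumptions: the 5 to 9 range comes from the text, but the decision order, the boolean inputs, and the even-split helper are hypothetical illustrations. In practice, split boundaries should follow domains, not arithmetic.

```python
import math
from typing import List

MIN_POD, MAX_POD = 5, 9  # pod size range from the text


def pod_action(engineers: int, scope_too_broad: bool, need_similar_capacity: bool) -> str:
    """Hypothetical heuristic: pick 'grow', 'split', 'clone', or 'keep' for one pod."""
    if engineers < MIN_POD:
        return "grow"   # too small to survive a single departure
    if engineers > MAX_POD or scope_too_broad:
        return "split"  # coordination overhead or incoherent ownership
    if need_similar_capacity:
        return "clone"  # the new pod inherits the original pod's practices
    return "keep"


def split_sizes(engineers: int) -> List[int]:
    """Divide engineers into the fewest pods of at most MAX_POD, as evenly as possible."""
    if engineers <= 0:
        raise ValueError("engineers must be positive")
    pods = math.ceil(engineers / MAX_POD)
    base, extra = divmod(engineers, pods)
    return [base + 1 if i < extra else base for i in range(pods)]
```

With these rules, a 12-person pod gets `"split"`, and `split_sizes(12)` suggests two pods of six; `split_sizes(20)` gives `[7, 7, 6]`.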

Integrate Nearshore Teams as True Partners

Distributed and nearshore teams can be powerful levers for scaling, when they are integrated correctly.

The standard failure mode is treating them as “task teams” that receive work packages without meaningful ownership. This approach limits their technical decision-making, creates coordination bottlenecks, and demotivates talented engineers who prefer ownership over ticket queues.

Effective integration means giving nearshore teams genuine ownership. They attend the same planning sessions, access the same documentation and stakeholders, and share accountability for outcomes. The only difference should be location, not organizational role.

In practice, two things do differ: location and time zone. The first is irrelevant to performance; the second isn’t. Teams that treat nearshore integration as simply adding people to existing workflows usually struggle. The ones that succeed redesign for async-first work: written decision trails, documented architecture, and overlap hours used for high-bandwidth collaboration rather than status updates. Handled deliberately, the time zone difference becomes a minor source of friction.

Achieving this requires investment in communication infrastructure: overlapping hours for synchronous collaboration, documentation that reduces dependence on tribal knowledge, and async norms that don’t leave distributed members waiting hours for answers. Organizations that extract real value from distributed teams treat communication as a first-class engineering concern.
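Overlap hours are easy to estimate before committing to a location. This is a minimal sketch under stated assumptions: each side has a fixed local working window, and fixed UTC offsets are used, which ignores daylight saving time. Real planning should use a time zone database instead. The function name and example offsets are illustrative.

```python
def overlap_hours(a_start: float, a_end: float, a_utc_offset: float,
                  b_start: float, b_end: float, b_utc_offset: float) -> float:
    """Daily overlap of two local working windows, given fixed UTC offsets (no DST)."""
    # Convert each local window to UTC hours, e.g. 9-17 at UTC-5 becomes 14-22 UTC.
    a0, a1 = a_start - a_utc_offset, a_end - a_utc_offset
    b0, b1 = b_start - b_utc_offset, b_end - b_utc_offset
    total = 0.0
    # Shift b by a day in each direction so windows that cross midnight UTC still match.
    for shift in (-24, 0, 24):
        total += max(0.0, min(a1, b1 + shift) - max(a0, b0 + shift))
    return total
```

For example, `overlap_hours(9, 17, -5, 9, 17, -3)` gives 6.0 hours for a 9-to-5 day in New York on standard time versus São Paulo. That leaves room to reserve a few shared hours for high-bandwidth work and handle the rest asynchronously.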

When evaluating a nearshore partner:

- Ask for a stable pod (not rotating individuals) and ask how replacements preserve context.

- Require named technical leadership for the domain (i.e., someone accountable for decisions, not just delivery).

- Confirm operating parity: the same planning cadence, the same definition of done, and ownership that includes on-call or operational responsibility for what they ship.

Onboard Deliberately Across Locations

A poorly onboarded engineer can be worse than no engineer at all. They consume productive team members’ attention while contributing little, and they are more likely to leave within their first year.

Effective onboarding starts before day one: documented architecture, working agreements, and clear paths to a first contribution. Record key meetings so new members can build context asynchronously. Assign onboarding buddies with dedicated time, meaning allocated hours rather than mere permission to help. Structure knowledge transfer with regular pairing, architecture walkthroughs, and explicit milestones: first commit, first review, first feature.
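Explicit milestones are only useful if someone tracks them. Here is a minimal sketch of a per-hire tracker. It assumes the three milestones named above and records calendar dates. The class and field names are hypothetical, and a real version would likely read dates from your version control and ticketing systems.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Dict, Optional

# Milestones named in the text, in the order a new engineer should reach them.
MILESTONES = ("first_commit", "first_review", "first_feature")


@dataclass
class Onboarding:
    engineer: str
    start: date
    reached: Dict[str, date] = field(default_factory=dict)

    def record(self, milestone: str, when: date) -> None:
        """Record the date a milestone was reached."""
        if milestone not in MILESTONES:
            raise ValueError(f"unknown milestone: {milestone}")
        self.reached[milestone] = when

    def days_to(self, milestone: str) -> Optional[int]:
        """Days from start to the milestone, or None if it hasn't happened yet."""
        when = self.reached.get(milestone)
        return None if when is None else (when - self.start).days

    def next_milestone(self) -> Optional[str]:
        """The first milestone not yet reached, or None once onboarding is complete."""
        for milestone in MILESTONES:
            if milestone not in self.reached:
                return milestone
        return None
```

Aggregated across hires and locations, `days_to("first_feature")` gives a rough time-to-productivity figure. That makes it easier to spot whether engineers in one location consistently ramp up more slowly than engineers in another.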

Establish Quality Guardrails

More engineers means more code and more opportunities for shortcuts that create future problems. Without guardrails, quality erodes under delivery pressure.

Automated quality controls become essential at scale: coverage thresholds, static analysis, and performance benchmarks, all enforced in CI/CD rather than left to reviewer judgment. Human judgment doesn’t scale, and the social pressure to approve only increases as teams grow. Architecture decision records help maintain coherence across teams. Technical debt should be explicitly tracked and given allocated capacity, whether through a sprint percentage or periodic “tech health” sprints.
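One way to keep these gates out of reviewer judgment is a small script that a CI step runs after tests, linting, and benchmarks. This is a minimal sketch: the metric names and thresholds are hypothetical, and it assumes an earlier pipeline step has already produced the numbers.

```python
from typing import Dict, List

# Hypothetical thresholds; tune them per codebase.
THRESHOLDS: Dict[str, float] = {
    "min_coverage_pct": 80.0,
    "max_new_static_issues": 0,
    "max_p95_regression_pct": 5.0,
}


def evaluate_gates(metrics: Dict[str, float],
                   thresholds: Dict[str, float] = THRESHOLDS) -> List[str]:
    """Return a human-readable failure for each gate the change does not pass."""
    failures: List[str] = []
    if metrics["coverage_pct"] < thresholds["min_coverage_pct"]:
        failures.append(
            f"coverage {metrics['coverage_pct']:.1f}% < {thresholds['min_coverage_pct']:.1f}%")
    if metrics["new_static_issues"] > thresholds["max_new_static_issues"]:
        failures.append(f"{metrics['new_static_issues']:.0f} new static-analysis issue(s)")
    if metrics["p95_regression_pct"] > thresholds["max_p95_regression_pct"]:
        failures.append(
            f"p95 latency regressed {metrics['p95_regression_pct']:.1f}% "
            f"(limit {thresholds['max_p95_regression_pct']:.1f}%)")
    return failures


def gate_exit_code(metrics: Dict[str, float]) -> int:
    """Print failures and return a process exit code: 0 passes the pipeline, 1 blocks it."""
    failures = evaluate_gates(metrics)
    for failure in failures:
        print(f"GATE FAILED: {failure}")
    return 1 if failures else 0
```

A CI step would collect the metrics and then call `sys.exit(gate_exit_code(metrics))`, so a failing gate blocks the merge no matter who approved the review.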

Choosing the Right Scaling Move

Different constraints call for different interventions. The table below summarizes when each approach makes sense.

| Scaling Option | When to Use | Key Considerations |
| --- | --- | --- |
| Process Improvement | Workflow friction is the bottleneck: slow reviews, deployment queues, handoff delays | Lowest cost and fastest impact; always address before adding headcount |
| Re-org / Team Pod Split | Team pods are too large, or ownership is unclear; coordination overhead is dominating | Requires clear domain boundaries; define interfaces before splitting |
| Seniority Mix | Capability gaps in specific areas; insufficient technical leadership | Expensive but high-leverage; target specific gaps, not general seniority |
| Nearshore Pod | Genuine capacity constraint; scalable domain with clear boundaries | Give absolute ownership; invest in communication; avoid task-team trap |
| Direct Hiring | Long-term needs; strategic capabilities worth building internally | Slowest ramp and highest cost, but strongest cultural integration |

Nearshore Readiness Checklist

Before adding a nearshore team, take an honest look at whether you’ve established conditions for success. These questions protect both your investment and the engineers joining your team.

| Area | Readiness Criteria |
| --- | --- |
| Ownership | Is there a clearly bounded domain this team can own independently? |
| Interfaces | Are APIs and contracts with other teams well-documented and stable? |
| Documentation | Does architecture documentation exist without requiring tribal knowledge? |
| Onboarding | Is there a structured path with clear milestones and dedicated support? |
| Communication | Are async norms and overlap hours already established? |
| Metrics | Do you have baseline metrics to measure the new team’s impact? |
| Leadership | Is there a clear technical lead the team can access for guidance? |
| Quality Gates | Are automated gates in place that don’t rely solely on reviewer judgment? |

How to Measure Engineering Team Success

Scaling without measurement is just hoping. You need clear signals that your strategy is working, or warnings that you’ve added people without adding capability.

Delivery Metrics

Lead time, from work starting to deployment, should stay stable or improve as you scale.

Throughput won’t scale linearly with headcount; the earlier point about coordination overhead applies here, too. A more realistic target is a 0.7 to 0.8x return on added capacity, meaning a 10% increase in engineers yields roughly a 7 to 8% increase in throughput. In complex systems, that is often considered a strong outcome. If you add twenty percent more engineers and get five percent more throughput (a 0.25x return), something is absorbing your investment, and it’s worth finding out what.
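The return-on-capacity check is simple arithmetic, but writing it down helps a team apply it consistently. This is a minimal sketch: it assumes both growth figures are measured over comparable periods after new engineers have ramped up. The 0.7 floor is the low end of the target mentioned above.

```python
def scaling_return(headcount_growth_pct: float, throughput_growth_pct: float) -> float:
    """Throughput growth per unit of headcount growth (1.0 would be perfectly linear)."""
    if headcount_growth_pct <= 0:
        raise ValueError("headcount_growth_pct must be positive")
    return throughput_growth_pct / headcount_growth_pct


def meets_target(ratio: float, floor: float = 0.7) -> bool:
    """True if the return clears the low end of the 0.7-0.8x target from the text."""
    return ratio >= floor
```

Using the paragraph's example, `scaling_return(20, 5)` is 0.25 and fails the target. A 15% throughput gain on the same 20% headcount increase (0.75) passes.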

The DORA research program identifies four key metrics of software delivery performance, which its research links to organizational performance: deployment frequency, lead time for changes, change failure rate, and time to restore service.
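The four metrics can be computed from a simple deployment log. This is a minimal sketch with several simplifying assumptions, all hypothetical and not DORA's official methodology:

- Lead time runs from the earliest commit in a deployment to its release.
- Restore time runs from the failed deployment to recovery, rather than from incident start.
- Medians summarize the distributions.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median
from typing import List, Optional


@dataclass
class Deployment:
    deployed_at: datetime
    commit_at: datetime                    # earliest commit included (a simplification)
    failed: bool = False                   # did this change cause a failure in production?
    restored_at: Optional[datetime] = None # when service was restored, if it failed


def dora_metrics(deploys: List[Deployment], window_days: int) -> dict:
    """Summarize a deployment log over a window of `window_days` days."""
    if not deploys:
        raise ValueError("need at least one deployment")
    lead_times = [d.deployed_at - d.commit_at for d in deploys]
    failures = [d for d in deploys if d.failed]
    restore_times = [d.restored_at - d.deployed_at
                     for d in failures if d.restored_at is not None]
    return {
        "deploys_per_day": len(deploys) / window_days,
        "median_lead_time": median(lead_times),
        "change_failure_rate": len(failures) / len(deploys),
        "median_time_to_restore": median(restore_times) if restore_times else None,
    }
```

Tracked per pod before and after a scaling move, these numbers show whether the added people shortened or lengthened the path to production.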

Quality and Resilience Metrics

Change failure rate tells you whether guardrails are keeping pace with growth. Mean time to recovery (MTTR) shows how quickly teams respond when things go wrong; a rising MTTR often signals diffuse ownership. Technical debt trends, measured through static analysis or the percentage of unplanned work, shouldn’t accelerate if your guardrails are working.

Team Health and Retention

Sustainable scaling shows in how engineers feel, not just what they produce. Engagement surveys should track sentiment around ownership and work-life balance. Retention rates are lagging indicators: by the time they drop, you’ve lost months of productivity.

Instead, watch for leading indicators: declining voluntary participation, reduced willingness to take on stretch assignments, and more complaints about process. Onboarding effectiveness, measured by time-to-productivity, reveals whether knowledge transfer scales with the team.

Reading the Signals

No single metric tells the whole story. Effective scaling shows up as a pattern: stable lead time, throughput growing roughly in line with added capacity, steady failure rates, positive sentiment, and strong retention. Improvement in some areas alongside regression in others warrants investigation. If throughput rises while lead time also increases, you may be starting more work than you finish, so work in progress is piling up.
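Little’s Law (average work in progress = throughput × average lead time) offers one way to check the last warning sign. It holds for long-run averages in a roughly stable system, so treat the result as a signal rather than a precise measurement. This is a minimal sketch, and the snapshot figures in the example are hypothetical.

```python
from typing import Tuple


def implied_wip(throughput_per_week: float, lead_time_weeks: float) -> float:
    """Little's Law: average work in progress = throughput x average lead time."""
    return throughput_per_week * lead_time_weeks


def wip_growth(before: Tuple[float, float], after: Tuple[float, float]) -> float:
    """Fractional change in implied WIP between two (throughput, lead time) snapshots."""
    return implied_wip(*after) / implied_wip(*before) - 1
```

Suppose throughput rises from 20 to 22 items per week (10%) while lead time grows from 1.5 to 2 weeks. Implied work in progress then jumps from 30 to 44 items, roughly 47%. That is the "starting more than you finish" pattern.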

Expert Perspective: Getting Scaling Right in Practice

When leaders ask me how to scale their engineering organization, most of the conversation starts with open roles and budget. Few start with ownership boundaries, deployment pipelines, or review queues. That is usually a sign that the real constraint has not been named yet.

Effective engineering team scaling strategies aren’t about throwing more engineers at problems. They’re about understanding actual constraints, designing teams for independence, integrating distributed talent as genuine partners, and measuring what matters.

The sequence is crucial: honest diagnosis first, then team design around clear ownership, then investment in communication infrastructure, then quality guardrails, then relentless measurement. The payoff is an organization that scales sustainably: where adding capacity genuinely adds capability, distributed teams deliver at co-located levels, and growth strengthens rather than strains your systems.