For most companies, 2024 was the year of the AI pilot. Teams experimented with multiple tools, demos made the rounds in boardrooms, and use cases multiplied faster than anyone could prioritize them. Now the pressure has shifted from exploring AI use cases to proving that AI can run at the core of how a business actually operates. The gap between those two realities is where most enterprises are stuck.

That gap, and what it takes to close it, was the focus of our recent webinar on building the AI-first enterprise. Greg Boone, CEO of WalkWest and BairesDev Fellow, moderated a conversation with Colin Bodell, CTO of Bazaarvoice, and Mihir Nanavati, GM of Product and Technology at BlueConic, two technology executives with decades of experience navigating the kind of platform shifts that redefine entire industries.

In this article, we’ll unpack their sharpest insights on what AI-first really means in practice, why so many initiatives stall before production, how agentic systems are reshaping enterprise architecture, and why experienced engineers are becoming more valuable, not less.

AI-First Doesn’t Mean AI Everywhere

The most common mistake organizations make when pursuing AI transformation is misunderstanding what transformation actually requires. Many companies interpret being AI-first as embedding AI into every product feature, workflow, and corner of the organization. The result is augmentation dressed up as strategy: a lot of activity, little structural change.

Colin, who has led technology organizations at Amazon, Shopify, and Groupon, reframes the question entirely. An AI-first organization doesn’t start by asking where AI can be added. It starts by asking where humans should no longer be doing the work. The implications ripple downstream into how teams are structured, where engineers focus, and what leadership is actually accountable for.

Mihir Nanavati brought a product lens to the same insight. Traditional software was deterministic; you wrote the rules, defined the workflow, and the system executed. AI systems introduce probabilistic behavior; they reason through goals rather than follow prescribed paths. “Instead of building systems that execute predefined workflows,” he explains, “you’re building systems that can reason through goals.” That’s more than an upgrade to existing software. It’s a different design paradigm entirely.

What makes this shift hard isn’t the technology itself. It’s that most organizations are built around the assumption that systems behave predictably. Their processes, their governance models, and their testing practices assume determinism. Introducing AI means rethinking those assumptions layer by layer. As Colin put it, becoming AI-first is about changing an operating model, and beneath that, a culture.

Getting that sequence wrong, reaching for tools before rethinking structure, is why so many AI initiatives produce activity without traction.

Why AI Pilots Die Before Deployment

Most organizations running AI pilots today have something in common: promising results in controlled conditions, and very little in production. The demo works and the business case looks credible. Then, somewhere between experimentation and deployment, momentum dies.

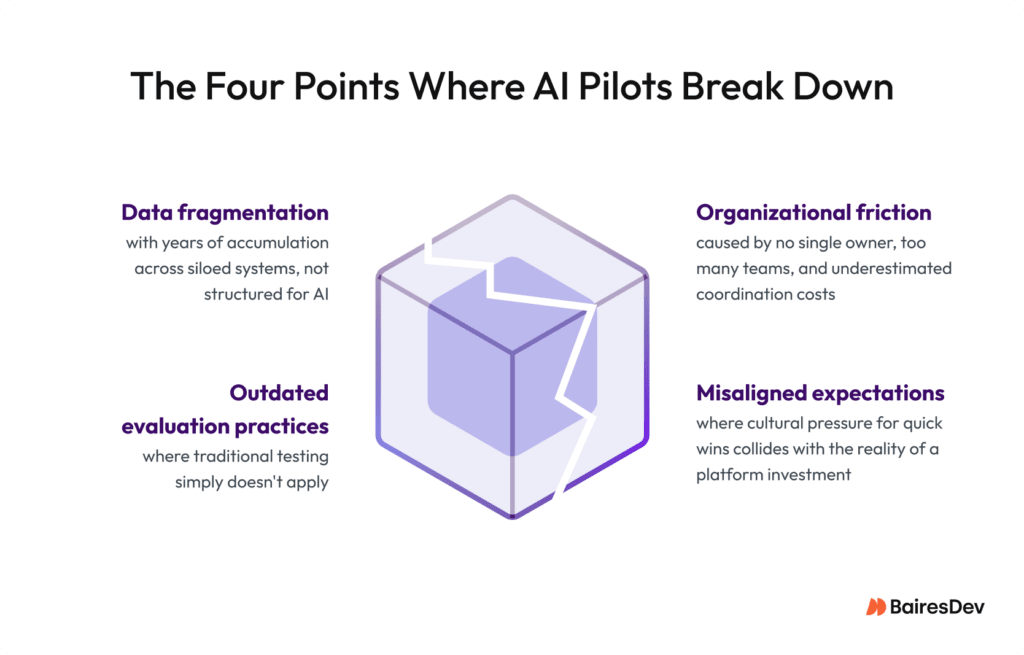

Colin identified three places where it consistently breaks down. The first is data fragmentation. AI systems are extraordinarily sensitive to how data is structured and how accessible it is. Most organizations discover this too late. Years of accumulation across dozens of systems leave them with data that is fragmented, inconsistent, and unfit for purpose.

The second is outdated evaluation. Traditional software gives you the same output for the same input, every time. AI doesn’t, which means the testing and validation practices most engineering teams have spent years developing are now insufficient. New disciplines around monitoring, output reliability, and acceptable-failure thresholds have to be built from scratch.

The third is organizational friction. These systems cut across teams and workflows in ways that no single owner controls, and that coordination cost is routinely underestimated.
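
To make the evaluation point concrete, here is a minimal Python sketch of what an acceptable-failure threshold can look like in practice. It is an illustration under stated assumptions, not something presented in the webinar: `evaluate`, `EvalReport`, and the stand-in model are hypothetical names, and a real setup would call an actual model and use a far richer acceptance check. The idea is simply that, instead of asserting one exact output, you run the same input many times and check whether the share of unacceptable answers stays under a limit you have agreed on.

```python
import random
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalReport:
    """Summary of repeated runs of the same prompt (hypothetical structure)."""
    runs: int
    failures: int
    max_failure_rate: float

    @property
    def failure_rate(self) -> float:
        return self.failures / self.runs

    @property
    def passed(self) -> bool:
        # The run passes if failures stay within the agreed threshold.
        return self.failure_rate <= self.max_failure_rate


def evaluate(
    generate: Callable[[str], str],
    is_acceptable: Callable[[str], bool],
    prompt: str,
    runs: int = 100,
    max_failure_rate: float = 0.05,
) -> EvalReport:
    """Call a non-deterministic system repeatedly and count unacceptable outputs."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    failures = sum(1 for _ in range(runs) if not is_acceptable(generate(prompt)))
    return EvalReport(runs=runs, failures=failures, max_failure_rate=max_failure_rate)


if __name__ == "__main__":
    # Stand-in for a real model call: answers correctly most of the time.
    rng = random.Random(7)

    def fake_model(prompt: str) -> str:
        if rng.random() < 0.03:
            return "I am not sure."
        return "Refunds are accepted within 30 days."

    report = evaluate(fake_model, lambda out: "30 days" in out, "What is the refund window?")
    print(report.failures, report.failure_rate, report.passed)
```

The threshold itself (5% here, purely as a placeholder) is a business decision as much as an engineering one, which is part of why this discipline has to be built deliberately rather than inherited from traditional testing.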

Mihir added a fourth factor that tends to get less airtime: misaligned expectations. The volume of excitement around generative AI has created a cultural pressure for quick wins that doesn’t match the reality of integrating AI into core workflows. “There’s often a moment,” he observes, “where companies realize this is more of a platform investment than a quick feature launch.” That realization, when it arrives without preparation, stalls projects that were otherwise viable.

The throughline across all four failure modes is the same: AI was treated as a feature, not as infrastructure. Features get scoped, shipped, and measured on a sprint cycle. Infrastructure gets architected. It touches data layers, API design, observability, and organizational accountability at once. Conflating the two doesn’t just slow things down. It sets up the wrong success criteria from the start.

When Software Starts to Reason: Agents, Orchestration, and the Question of Control

There’s a lot of confusion in the market about what agents actually are. Mihir demystified this simply: the difference isn’t about technology itself, but about how software is designed. Traditional automation follows rules defined in advance. Agents operate from goals. You describe what you want to achieve, and the system works out how to get there. That shift makes product teams think less about features and more about capabilities.

What agents are not, Mihir is quick to clarify, is a replacement for existing systems. They orchestrate across them. The databases, APIs, and legacy infrastructure don’t disappear. They become the terrain the agent moves across, which means integration work, not a clean slate. In SaaS, Mihir points out, this shift is already visible. The industry is moving from software-as-a-service to outcomes-as-a-service. The product stops being the software and becomes the result.

Colin picks up the thread on the governance side, and it’s where his experience running large engineering organizations shows. He breaks it into three layers. The first is technical governance, meaning monitoring and output evaluation. The second is organizational governance, meaning clear ownership and accountability for deployments. The third is a broader ethical and regulatory dimension that matters as AI systems begin influencing real decisions. His point is that none of these comes pre-built, and treating governance as an afterthought is one of the more reliable ways to stall an otherwise viable initiative.

His closing observation is the one worth sitting with. The biggest shift ahead for enterprise leaders is deciding how much autonomy AI systems should hold, and where human judgment has to stay in the room. That’s a leadership question.

AI Doesn’t Replace Engineers. It Raises the Bar for Them.

The public narrative around AI and engineering talent oscillates between two extremes: either AI will replace developers wholesale, or nothing much will change. Colin and Mihir land somewhere more useful for leaders making real workforce decisions right now.

Colin is direct about the productivity gains: faster code generation, quicker prototyping, and ideas explored in a fraction of the time. But he pushes back on the assumption that this translates into headcount reductions. Historically, when development becomes more efficient, organizations don’t shrink engineering capacity. They build more software. The constraint shifts from engineering capacity to ideas and prioritization.

What changes is the nature of the work. Mihir describes a dynamic engineering leaders are already navigating: developers increasingly act as supervisors of AI-generated output, reviewing and making judgment calls on code they didn’t write line by line. This aligns with the Dev Barometer Q1 2026, which reports that critical evaluation of AI output is the defining skill for developers in 2026.

Colin puts the sharpest point on it. Junior developers can generate code with AI tools. Knowing whether that code is actually good is a skill that takes years to develop and can’t be automated. In an environment where the floor for code generation drops, the ceiling on engineering judgment rises. Experienced engineers become harder to replace.

Building the AI-First Enterprise Is a Leadership Decision

The throughline across this conversation is that organizations struggling with AI aren’t paying enough attention to restructuring how work happens around it. Skipped data foundations, governance treated as an afterthought, and underestimated coordination costs are leadership failures.

Colin and Mihir bring decades of operating experience across some of the most consequential technology transitions of the last 30 years. Their message is that AI transformation demands the same rigor and organizational honesty that any real transformation requires.

Watch the full webinar, including the audience Q&A where both panelists go deeper on governance, talent strategy, and what leadership teams should prioritize next. And if your organization is working through any of these challenges, our team is ready to help you accelerate your enterprise AI development.