AI chatbots powered by large language models (LLMs) are arguably the most disruptive technology to launch this decade. Ever since OpenAI launched ChatGPT as a free solution at the tail end of 2022, some of the leading names in tech have been racing to develop and refine AI models and tools of their own—fueling demand for AI development services and chatbot development services across industries.

But which of these solutions is the best AI assistant on the market today? How do they stack up in different use cases? This AI chatbot comparison looks at 5 leading AI chatbots available today and examines how they perform in specific productivity-oriented tasks. In particular, we’ve looked at which of these tools offer the most useful functionality for software developers and for modern ERP development environments.

AI Chatbot Comparison

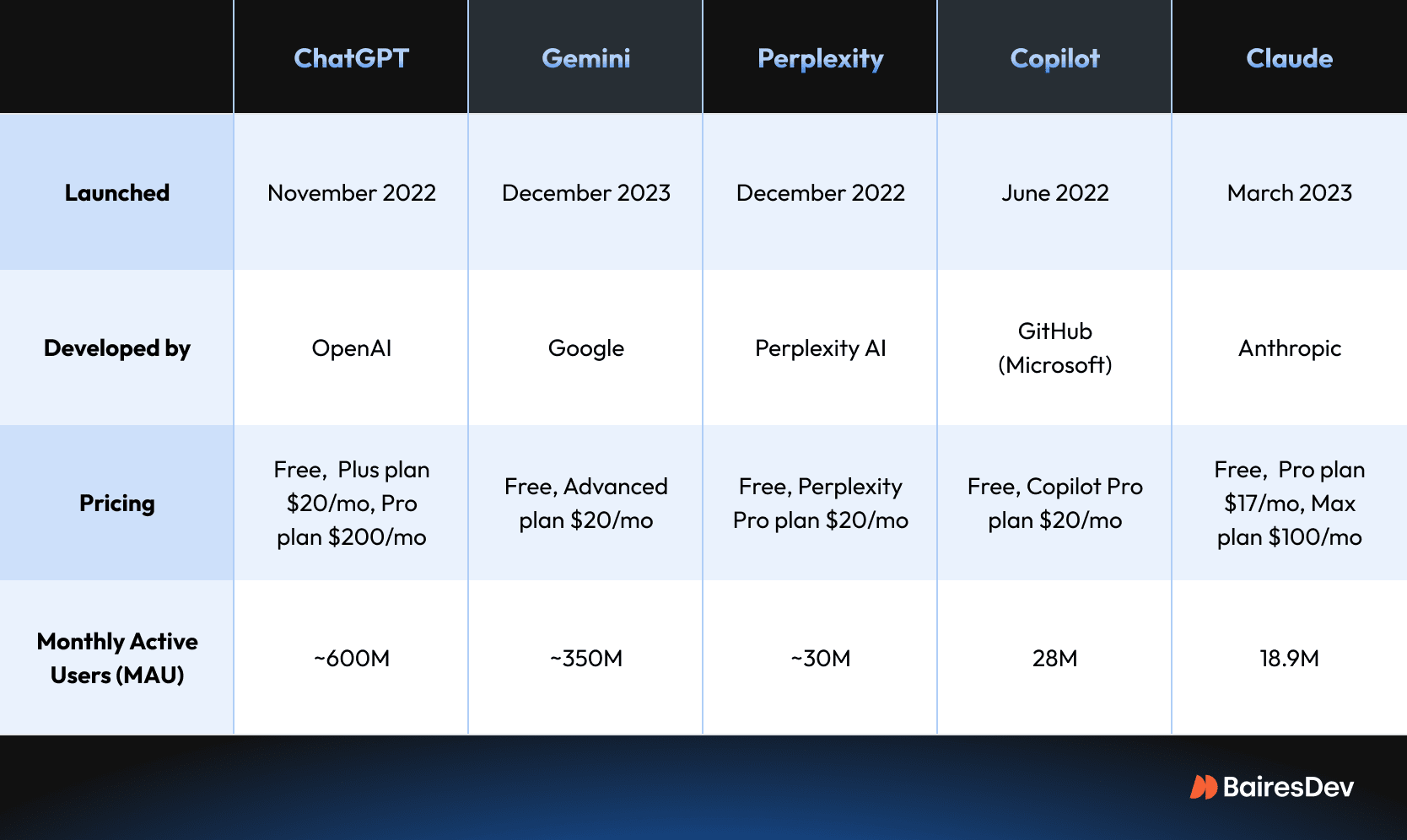

Dozens of different AI chatbot tools are now on the market, with hundreds of other productivity tools being upgraded with new AI features and integrations. To try and cut through the noise, we’ve homed in on the 5 biggest names for this AI chatbot comparison: OpenAI ChatGPT, Google Gemini, Perplexity, Microsoft Copilot, and Anthropic’s Claude.

ChatGPT

ChatGPT’s influence in the AI space is so ubiquitous that many people simply refer to AI and LLMs as “ChatGPT”. Since its launch in late 2022, ChatGPT has been the leading AI chatbot on the market, and its popularity has prompted tech giants like Microsoft and Google to unveil their own chatbots.

GPT stands for Generative Pre-Trained Transformer, whilst Chat refers to the conversational nature of the tool. In keeping with its name, ChatGPT focuses on providing a conversational, algorithmically generated chatbot experience that can answer questions, support research, analyze data, and generate content.

Its current model is called GPT-4.5, with previous iterations like GPT-4 and GPT-4o remaining active at the time of writing. OpenAI also recently launched improved image generation functionality in GPT-4o, which was met with mixed responses due to ethical concerns.

Gemini

Not to be outdone, Google launched its own AI chatbot a few months after ChatGPT. Gemini benefits from being a part of Google’s suite of solutions, meaning it can be easily integrated into Google apps and other products. It used to be known as Google Bard, and its launch was marred by highly publicized failures.

Much like ChatGPT, Gemini is designed to provide algorithmic responses to questions based on a large language model. Gemini’s lineage actually dates back to 2021, when Google announced LaMDA, the language model that initially powered Bard, long before ChatGPT became a household name.

Gemini hasn’t gone through as many upgrades as ChatGPT. The latest model at the time of writing is Gemini 2.5.

Perplexity

Perplexity launched its AI chatbot very soon after ChatGPT, in December of 2022. It hasn’t seen as much growth as OpenAI has in that time, with significantly fewer estimated users. However, Perplexity remains one of the leading AI options and has been praised as an alternative to bigger tools for specific tasks, namely research.

Perplexity focuses on accuracy, offering more reliable answers to search questions than some of its competitors. It’s also worth noting that Perplexity has access to popular models from other providers, including OpenAI, DeepSeek, Anthropic (Claude), and Google (Gemini), which makes it the best chatbot for comparing answers to the same web search across different models.

Unlike other tools, Perplexity’s free version and Perplexity Pro haven’t gone through many iterations. Instead, developers are working to refine the existing tool.

Copilot

Microsoft launched its own chatbot around the same time as Google, integrating it into various mobile apps. Much like Google’s alternative, Copilot integrates directly with Microsoft products. The chatbot was previously known as Bing Chat until Microsoft rebranded it as Copilot in late 2023, and it is closely linked with Microsoft 365.

As a result, and again much like with Gemini, Microsoft Copilot is easily accessible for most people working today. It’s also built into both Microsoft Edge and Microsoft Bing.

Microsoft hasn’t released vastly upgraded versions of Copilot since its launch. Instead, as with Perplexity, it has worked on upgrading the existing tool.

Claude

Anthropic is one of OpenAI’s most direct competitors. Claude was launched in March 2023 and quickly became known for its human-like interaction. Though not as widely used as ChatGPT, many praise Claude for its more eloquent, less robotic answers to queries.

In 2024, Anthropic launched the Claude 3 model family, which includes Haiku (optimized for speed), Sonnet (which balances performance and capability), and Opus (which is designed for more complex reasoning).

Alternative AI Chatbots

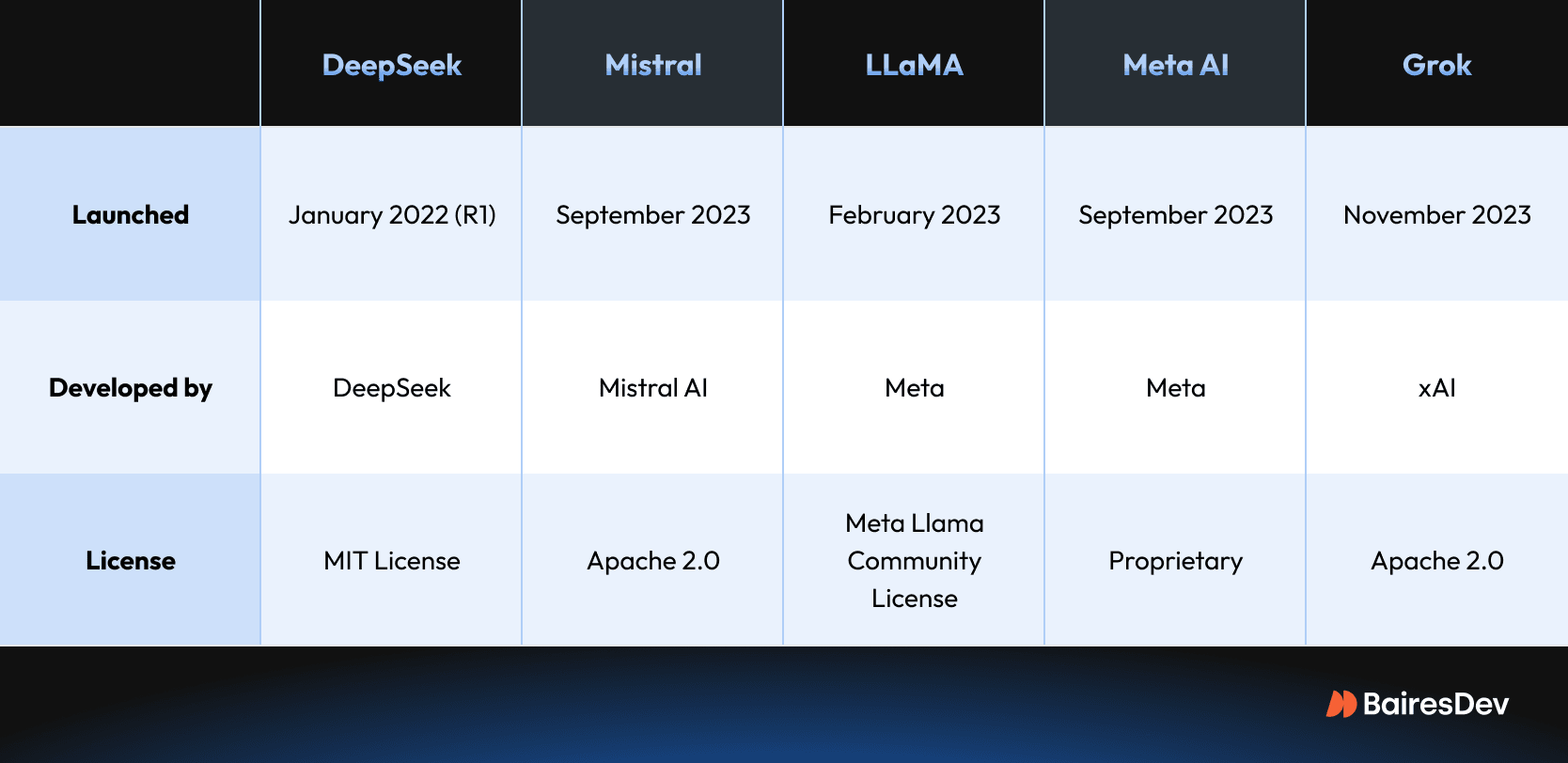

Though these represent the five biggest AI chatbots at the moment, particularly in the West, several other tools are worth keeping an eye on. The most significant of these is DeepSeek, China’s most advanced chatbot, which launched in January 2025. DeepSeek will likely be used extensively throughout China, though ongoing US-China tensions make its future in the West uncertain.

Open-source chatbot options also exist, but these often lack some of the key features possessed by the tools above. However, some open-source LLMs such as Mistral and LLaMA are increasingly popular and show great promise.

Other notable chatbots include Meta AI and X’s Grok. However, these tools are mostly used across the social media platforms they’re connected with, and not for productivity-oriented tasks.

AI’s Capabilities and Limitations

Before comparing these tools directly, it’s worth explaining what all of them have in common in terms of their capabilities and their limitations. All of today’s LLMs are best used as a supplementary research tool.

They’re most effective when you’re able to prompt clearly, and when you consistently iterate on any responses that they provide. None of these tools is currently capable of superseding human intuition, whether the task is to handle extensive research, write in a creative and engaging way, or create unique visual works of art. It’s also unclear whether they will genuinely be able to reach this capacity in the future.

If you’re using AI chatbots as a developer, they’re most useful as a learning aid. They can help explain code snippets, provide examples, explain paradigms and concepts, and help ensure you are using best practices. They can be preferable to search engines in certain situations, particularly if you have a specific question that needs addressing.

As a general rule, if you choose to use these tools, then make sure to work with them. Don’t expect them to do your work for you.

Which AI Assistant Delivers the Best Results for Developers?

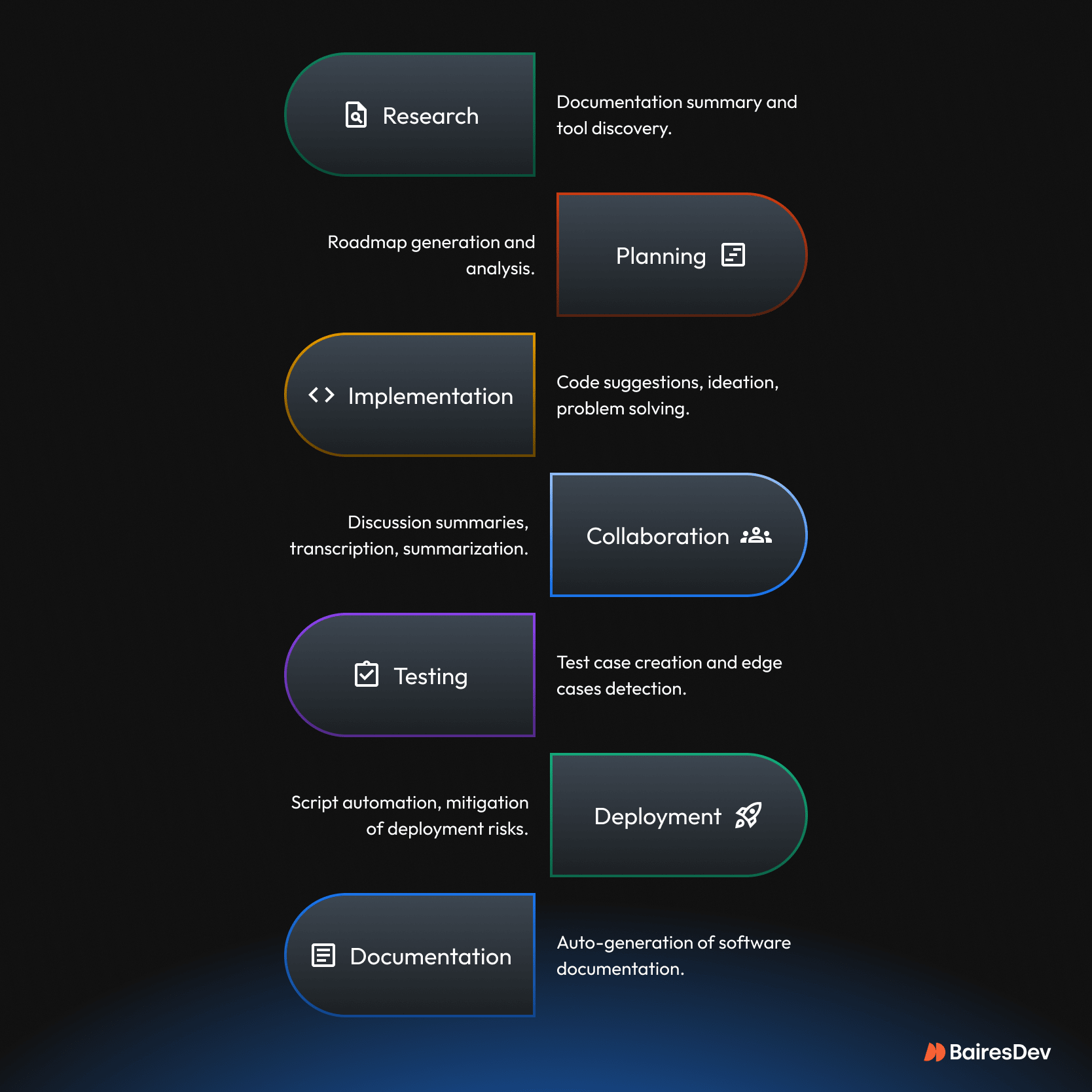

Software development is not just about writing code. It’s an increasingly complex process involving research, planning, implementation, and collaboration. We should not overlook testing, deployment, and documentation. AI can help streamline many essential, non-coding tasks, allowing engineers to focus on strategic and creative decisions instead.

We’ve tested each of these 5 leading AI chatbots (and a few more), and here are our recommendations for which performs best in different development-focused use cases.

Which AI Models and Plans Should Professionals Choose?

If you’re hoping to use an AI chatbot and its model intelligence to help you code, it’s worth investing in a paid solution. Some engineers even opt for the most expensive plans, such as ChatGPT Pro.

Free versions can be serviceable for code generation, but they likely won’t be able to write or rewrite scripts to a level that is reliable or accurate enough to be used in a real solution. However, free users can still get a lot of mileage:

- ChatGPT’s GPT-4o model offers fairly reliable coding responses at the time of writing. If you are not willing to pay for a premium plan, ChatGPT is arguably the best all-rounder.

- Perplexity gives you access to multiple AI models, and this can be extremely beneficial in some scenarios. However, Perplexity Pro is a superior option for coding.

- Google Gemini 2.5 represents a substantial improvement over its predecessor and it can match or even surpass ChatGPT in some cases, but free access is fairly limited.

- Copilot has seen substantial improvements in recent months. The free version offers a lot of functionality and performs well.

- Claude can also function as a code interpreter, generating small snippets of usable code in certain situations.

In all cases, you should only use these tools to generate small chunks of code, as none of them can reliably code entire solutions or code that deals with complex business logic. They can help, but they still rely on human intuition and decision-making.
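To make the "small chunks, checked by a human" advice concrete, here is a minimal Python sketch. The helper `slugify_title` is a hypothetical stand-in for the kind of short snippet a chatbot might return, and `review_snippet` represents the human side: a handful of checks that encode what the team actually expects. Neither name comes from any of the tools discussed here.

```python
import re


def slugify_title(title: str) -> str:
    """Hypothetical chatbot-suggested helper: turn a title into a URL slug."""
    # Lowercase, then collapse every run of non-alphanumeric characters into one hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", title.lower())
    # Trim hyphens left over at either end.
    return slug.strip("-")


def review_snippet() -> bool:
    """Human-written checks that must pass before the snippet is accepted."""
    assert slugify_title("Hello, World!") == "hello-world"
    assert slugify_title("  AI   Chatbots 2025 ") == "ai-chatbots-2025"
    # Edge case a generated snippet can easily miss: nothing usable in the input.
    assert slugify_title("---") == ""
    return True


if __name__ == "__main__":
    review_snippet()
```

The snippet is deliberately tiny. The point is that a piece of this size can be read in full and tested in a minute, which is rarely true of an entire AI-generated solution.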

AI Teaching Tools and Learning Aids

Creating code is one thing, but using an AI chatbot as a learning aid and a research tool that replaces or augments traditional web searches is entirely different.

This use case also plays to more LLM strengths, as you’re less reliant on unique snippets of code working; instead, you’ll be using these tools to learn general coding rules and techniques.

If a small team is taking on a new project that requires junior members to quickly master a new framework or tool, AI can help them upskill. This reduces the amount of time seniors will need to invest in their training. Likewise, AI can help with the onboarding process and knowledge management.

All of the tools listed in the above section will also help professionals master new skills and flatten the learning curve. The free versions are also usable, though you may need to dedicate more time to cross-checking any suggestions for accuracy.

Using AI for Research and Analysis

Using an AI tool for data analysis and research can help with plotting out a development plan, considering new features, workflows, and more. Research can also assist other professionals in their careers, like helping project managers better organize projects, or helping marketers strategize and ideate.

Perplexity has specific research tool functionality for academics, and its focus on reliable results can make it an even better choice than ChatGPT and Gemini when it comes to certain types of research. It also grants you access to several different LLMs, so you can cross-check results across different models when using Perplexity in lieu of a search engine.
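If you collect answers from several models by hand, a rough way to spot disagreements is to compare the texts pairwise. The Python sketch below is only an illustration under stated assumptions: it calls no chatbot API, the model labels and answers are placeholders you would paste in yourself, and the `threshold` of 0.6 is an arbitrary choice. It uses plain text similarity from the standard library, which is a crude proxy; two answers can be worded differently and still agree.

```python
from difflib import SequenceMatcher
from itertools import combinations


def pairwise_agreement(answers: dict[str, str]) -> dict[tuple[str, str], float]:
    """Return a rough 0..1 text-similarity score for every pair of models."""
    scores = {}
    for (name_a, text_a), (name_b, text_b) in combinations(answers.items(), 2):
        # Compare case-insensitively so capitalization alone does not count as a difference.
        ratio = SequenceMatcher(None, text_a.lower(), text_b.lower()).ratio()
        scores[(name_a, name_b)] = ratio
    return scores


def needs_manual_check(answers: dict[str, str], threshold: float = 0.6) -> list[tuple[str, str]]:
    """List the model pairs whose answers differ enough to warrant a human look."""
    return [pair for pair, score in pairwise_agreement(answers).items() if score < threshold]


if __name__ == "__main__":
    # Placeholder labels and answers, pasted in manually for illustration.
    collected = {
        "model_a": "The library supports async requests since version 2.",
        "model_b": "The library supports async requests since version 2.",
        "model_c": "Async is not supported.",
    }
    for pair in needs_manual_check(collected):
        print("Check by hand:", pair)
```

A flagged pair does not tell you which model is right. It only tells you where to spend your own verification time.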

ChatGPT’s deep research agentic capability was introduced in early 2025, making ChatGPT a powerhouse for deep dives. Perplexity still has an edge for time-sensitive topics, e.g., current events, but ChatGPT’s deep research shines when it comes to in-depth searches. The only downside is that access for free users is restricted, and even Plus users are capped at 30 deep searches a month.

Thanks to Google’s leading experience in search functionality across other Google apps and its namesake search engine, Gemini is a good choice for AI-driven research, especially after the 2.5 update.

Best AI Chatbots for Writing and Marketing

Many professionals have experimented with using AI chatbots for writing tasks, such as email writing, internal documentation, creation of training materials, generation of scripts for YouTube videos, and outlines for content creation in general.

If you want to use an LLM’s answers for their written eloquence, then Claude Pro is likely your best option compared to other chatbots. Claude focuses on the quality of its written responses, often providing human-like interaction, which can make it a better tool than ChatGPT or the other alternatives listed above.

However, much like with coding, none of these tools will generate emails or write articles that engage people as effectively as something you’ve written yourself. While this might not matter as much for internal documents, marketing and comms departments should not rely on AI to generate client-facing content from start to finish.

ChatGPT is arguably the best overall choice for visual marketing, after updates that have improved its AI image generation capabilities. These are best used for internal purposes rather than in outbound marketing strategies.

Engineering teams cannot push unreviewed AI-generated code into production, and the same goes for marketing teams and AI-generated content. AI can and should be used to boost productivity internally, for example to create charts, mockups, and outlines.

AI Chatbots for Translation

AI chatbots are extremely good at translation, particularly if you upload documents to the tools directly. This can be especially useful if you’re communicating across global teams, conducting market research, or localizing products and services.

ChatGPT’s more recent models tend to be quite good at translation. However, you should limit any AI chatbot translations to brief paragraphs or short messages. If you have longer documents, for example a technical manual or whitepaper, you’ll get better results using dedicated translation tools like DeepL or Google Translate.

If you’re translating official documents or marketing materials for overseas audiences, it’s worth investing in a professional translator rather than using a free AI chatbot. All client-facing output should be checked by a professional!

Integration With Google and Microsoft Productivity Suites

Like it or not, AI is already an integral part of industry-standard productivity suites. Microsoft has integrated Copilot into its applications, including Word, Excel, and Outlook. Google has done the same with Gemini and Google apps such as Gmail, Docs, and Sheets.

Big tech companies have moats for a reason. By virtue of their popularity, Microsoft and Google have practically ensured that Gemini and Copilot will be embraced by many businesses.

Apple Intelligence is also worth mentioning, though it currently seems to be focused on enhancing user experience on Apple’s consumer devices. It will undoubtedly evolve to offer more productivity features for macOS, the platform of choice for many developers and designers.

Other noteworthy examples include Adobe Firefly, Figma’s new AI tools, Zapier AI integrations, and Notion AI. The list of companies integrating AI into existing products with large professional user bases is extensive. This trend is here to stay.

ChatGPT and Specialized AI Tools

So, where does that leave generalist chatbots like ChatGPT and more specialized AI tools? Where do they fit in?

The answer will likely depend on the tech literacy and makeup of your team. ChatGPT is arguably the best overall AI chatbot and its latest models have the widest functionality for productivity, even if you stick to the free plan. It’s also one of the easiest tools to get to grips with, which can make it a reliable choice for more general teams. Thanks to its popularity, many users are already familiar with it.

Perplexity’s more advanced functionality may be preferable for more tech-savvy teams, depending on your use case. Some teams may also prefer Claude because of its less robotic responses, which make it a good choice for marketing and communications professionals.

When it comes to specialized tools, making specific recommendations becomes difficult, if not downright impossible. There are too many new solutions out there and every week sees the introduction of a new AI tool or SaaS. Many successful AI tools are designed for tight niches, so they don’t have millions of users or name recognition beyond their industries. You have to research your niche on your own, or use ChatGPT’s deep research to find genuinely useful tools for your team.