Remember the pandemic? In March 2020, engineering organizations learned the same lesson at the same time: plans that look great on paper can collapse overnight. Roadmaps were abandoned and teams were forced to ship under conditions no one had modeled for. Then came the end of the zero-interest-rate era, and today we are being flooded by mass AI adoption.

Against that backdrop, there is a real temptation to adopt the latest software development trends and modernize everything in sight. For engineering leaders, that framing creates massive risk. The real danger isn’t just falling behind or missing out, but spreading your attention so thin that you can’t execute on anything.

The holy grail we’re all looking for is risk reduction, not cargo-cult engineering. You need to know which choices will change your delivery profile in the near term and which ones you should merely keep an eye on.

2026 Priorities vs. Watchlist

The two tables below show the trends that need leadership action in the next two to three quarters and the ones that should stay in monitoring mode. If a trend is not in the Priorities table, it shouldn’t eat up senior engineering attention. Not this cycle.

Priorities

| Trend | Why Now | First Action | Risk If Ignored |
| --- | --- | --- | --- |
| AI-Assisted Development | Already in daily workflows, unmanaged use creates variance and security exposure | Define permitted uses, enforce review gates | Shadow AI, inconsistent quality, higher incidents |
| Secure Delivery | Breaches now start in pipelines and dependencies, not app code | Assign ownership for pipeline security and dependencies | Late-stage security failures, compliance incidents |
| Cloud-Native Reliability | Over-fragmented architectures drive instability and overhead | Reevaluate service boundaries using failure and latency data | More incidents, slower recovery |
| CI/CD Maturity | Manual steps cap throughput and increase errors at scale | Remove top manual bottlenecks | Slower releases, brittle delivery under pressure |
| Cost Governance | Cloud spend and tooling sprawl now face executive scrutiny | Tie architecture choices to cost and utilization metrics | Budget cuts imposed without technical input |
| Low-Code Oversight | Business teams ship logic outside engineering visibility | Establish guardrails and review paths | Compliance and reliability failures you don’t control |

Watchlist (Monitor, Do Not Lead With)

| Trend | Why Not Yet | What to Do | Risk of Early Investment |
| --- | --- | --- | --- |
| Quantum | Impact still years out | Track standards and crypto implications | Spend without delivery benefit |
| 5G/Edge | Only matters for latency-sensitive or hardware-linked cases | Monitor industry adoption | Unnecessary complexity |
| AR/VR | Business value still narrow | Limit to scoped pilots | Chasing novelty without ROI |
| Blockchain | Costs still outweigh benefits | Constrain to proven cases | High cost, limited reliability gains |

Most “Top Trends” Lists Are Misleading

Most “Top X trends” lists optimize for completeness and novelty, not for how mature engineering organizations actually manage work. They often encourage teams to spread attention too thin, and in many cases they cover trends more suited to greenfield projects.

Our list is prioritized for delivery impact and risk, not novelty. Each trend is ranked by how directly it affects reliability, security, cost, and execution in complex environments, not in plucky startups that can change direction on a dime.

Read each entry as a decision aid: first, what the trend actually is in practice; second, why it changes your risk profile; and third, the one concrete action you can assign to move forward without overcommitting.

AI-Assisted Coding Tools

In 2025, 84% of developers reported using or planning to use AI tools. (Compare that to 88% of all organizations using AI.) So, AI is already a part of the development process. It’s embedded in how software developers write code, generate tests, debug incidents, and so on. AI now changes delivery dynamics whether you sanction it or not. But if you do nothing, you just get the downsides.

What AI-Assisted Development Actually Looks Like Now

AI touches far more than feature code. Engineers use it to scaffold services, refactor legacy logic, generate tests, write deployment configs, and investigate production issues while writing code. A lot of this happens informally, driven by local productivity gains.

For example, a developer can generate a large volume of working code quickly, but that code still enters the same review, testing, and release system. If those systems assume slower, human-authored output, review queues lengthen. You feel this as “we are shipping more, but confidence is down,” even if individual contributors feel faster.

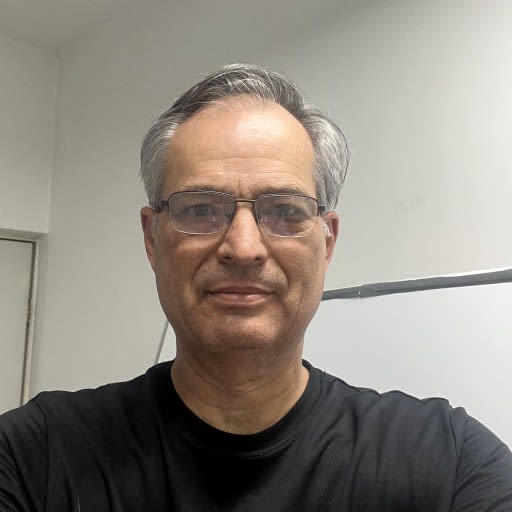

Why AI Changes Failure Modes, Not Just Velocity

A common assumption is that AI merely accelerates existing workflows. In reality, it shifts where failures occur. Instead of obvious bugs, you see repeated patterns or insecure defaults replicated across services at scale by generative AI.

AI-generated scaffolding can propagate the same flawed assumption into multiple repos within days. Without stronger validation, AI can repeat the same mistake at scale before humans notice.

What you can do differently now is treat AI as a system input, not a personal tool. Decide where AI-generated output is acceptable, raise the bar on automated testing and review where volume increases, and watch delivery variance. The uncomfortable truth is that AI does not remove engineering judgment. It concentrates it at the leadership and platform level.
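As a rough illustration of what "watching delivery variance" could look like, the sketch below compares review turnaround for PRs that reviewers tagged as AI-heavy against the rest. The record fields (`ai_heavy`, `review_hours`) and the sample numbers are hypothetical; in practice they would come from whatever your code host or review tooling exports.

```python
from statistics import mean, pstdev


def review_time_summary(prs):
    """Summarize review hours for AI-heavy vs. other PRs.

    Each PR is a dict with hypothetical keys 'ai_heavy' (bool)
    and 'review_hours' (float).
    """
    summary = {}
    for tagged in (True, False):
        hours = [pr["review_hours"] for pr in prs if bool(pr["ai_heavy"]) == tagged]
        if hours:
            summary[tagged] = {
                "count": len(hours),
                "mean": mean(hours),
                # Population standard deviation: how spread out review times are.
                "stdev": pstdev(hours),
            }
    return summary


# Made-up sample data, for illustration only.
prs = [
    {"id": 1, "ai_heavy": True, "review_hours": 2.0},
    {"id": 2, "ai_heavy": True, "review_hours": 10.0},
    {"id": 3, "ai_heavy": False, "review_hours": 4.0},
    {"id": 4, "ai_heavy": False, "review_hours": 6.0},
]

for tagged, stats in review_time_summary(prs).items():
    label = "AI-heavy" if tagged else "other"
    print(label, stats)
```

A wider spread for the AI-heavy group than for the rest is one possible signal that review and testing capacity has not kept pace with output volume. It is a prompt for investigation, not a verdict.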

Secure Delivery Shifts Left and Up

Secure delivery no longer lives at the end of the development lifecycle, no matter what your org chart says. Risk has moved into pipelines, shared platforms, and governance decisions that shape how fast and safely code reaches production. If you still treat security as a review step, you are reacting after exposure has already scaled.

What Secure-by-Default Delivery Means Now

Secure delivery means the safest path is the easiest path. Controls live in CI/CD and platforms, the paved road Gene Kim has been arguing for all along. Every change flows through the same guardrails by default.

For example, if engineers can deploy through approved pipelines with built-in checks faster than they can bypass them, security becomes a delivery accelerator. If bypassing is easier, risk will accumulate silently until an incident forces intervention.

Why Security Is Moving Into Pipelines and Platforms

In large software systems, modern failures rarely originate in handwritten business logic. They enter through compromised dependencies or misconfigured infrastructure. These risks scale faster than human review as release cadence increases.

As an illustration, a shared build pipeline with weak access controls can become a single point of compromise across dozens of teams. Fixing that centrally reduces risk far more effectively than asking every team to “be more careful.”

What Leaders Should Prioritize First

Your highest-leverage moves are structural, not procedural. Focus on the systems that move and validate code.

Priorities to operationalize include:

- Treating CI/CD credentials and secrets as production assets with clear ownership.

- Automating dependency and artifact controls inside the pipeline.

- Standardizing secure delivery paths so teams do not invent their own under pressure.
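To make "automating dependency controls inside the pipeline" concrete, here is a minimal sketch of one such control: a check that flags dependencies not pinned to an exact version. It assumes a simple `requirements.txt`-style input with one `name==version` entry per line. The function name and regular expression are illustrative, and the pattern deliberately ignores hashes, environment markers, and URL requirements that a real check would need to handle.

```python
import re

# Simplified pattern (an assumption): a package name followed by '==' and a version.
PINNED = re.compile(r"^[A-Za-z0-9_.\-\[\]]+==[^=\s]+$")


def unpinned_requirements(text):
    """Return requirement lines that are not pinned to an exact version."""
    flagged = []
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and surrounding whitespace
        if not line:
            continue
        if not PINNED.match(line):
            flagged.append(line)
    return flagged


# Made-up example input.
example = """\
requests==2.31.0
flask>=2.0
# build tooling
numpy
"""

problems = unpinned_requirements(example)
if problems:
    print("Unpinned dependencies:", ", ".join(problems))
```

In a pipeline, a step like this would typically fail the build when the list is non-empty, so the control applies to every team by default rather than relying on individual care.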

The assumption to challenge is that security slows delivery. In enterprise environments, unclear security is what actually causes delays. When guardrails are built into platforms, teams move faster with fewer surprises.

Delivery Infrastructure: Architecture, Platforms, and Pipelines

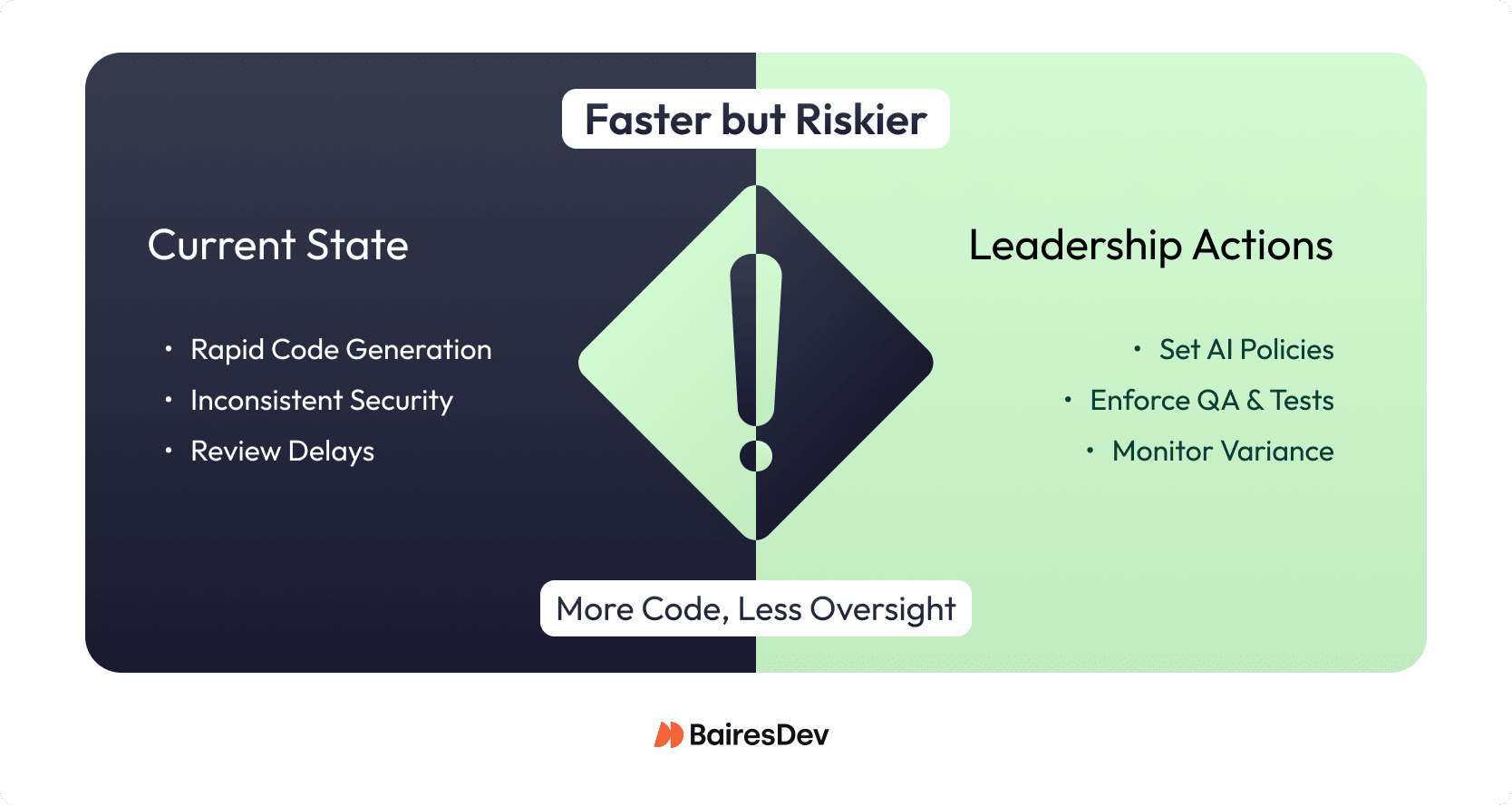

The most damaging failures in large engineering orgs don’t come from slow feature teams. They come from shared infrastructure: deployment systems, observability gaps, over-fragmented services, manual steps that surface at the worst possible moment.

Three problems tend to compound here, and you need to get ahead of them:

Architecture choices that over-optimize for the org chart

Teams split services to match team boundaries, not failure domains. You end up with five microservices where a modular monolith would’ve been calmer to operate. The question isn’t “is this cloud-native?” It’s “can we run this without drama?” Conway’s Law cuts both ways.

Platforms that never got finished

Deployment and rollback paths vary by team. Observability is inconsistent. Recovery depends on who’s on call. When these basics aren’t standardized, small incidents cascade. A fragile deploy system can halt dozens of services at once, regardless of how fast your teams ship.

Manual steps that exist because automation was never prioritized

Approvals in Slack, one-off scripts, clunky handoffs that work fine until someone’s on vacation. Each exception adds cost through rework, delays, and audit friction. And they have a habit of surfacing when you can least afford them.

The leadership move here is unglamorous: audit the paths code takes from commit to production, find where variance and manual intervention live, and fix the infrastructure before adding new capabilities on top of it. Reliability is what makes speed sustainable. The reverse isn’t true.

Engineering Cost Pressure Becomes Explicit

If you cannot explain why your cost profile makes sense, someone else will simplify it for you. Leaders who connect spend to delivery outcomes keep control. Those who can’t will inherit blunt budget cuts.

Engineering cost pressure is no longer implicit or deferred to finance. Operational costs and efficiency mandates directly shape tooling choices, staffing models, and architectural decisions. Leaders are expected to explain not just what systems do, but why their cost profile makes sense under sustained scrutiny.

Hidden cost growth usually comes from decisions that feel locally reasonable but scale poorly. For example, adding tools to solve isolated problems can fragment workflows and duplicate spend. Similarly, architectural choices that optimize for peak flexibility often raise baseline operating costs through higher cloud usage and on-call load.

What you need to reassess now is where cost is structural versus incidental. Look closely at platform sprawl, underutilized services, and teams maintaining bespoke solutions that could be standardized. Tie architectural and staffing decisions to measurable outcomes like utilization, incident cost, and delivery throughput. Cost control doesn’t have to slow innovation. Unexamined cost growth eventually forces blunt cuts that damage both morale and delivery.
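One way to start tying spend to utilization is a simple ranking of services by how much of their cost is going to idle capacity. The sketch below assumes you can obtain a monthly cost and an average utilization figure per service. The `ServiceCost` fields, the thresholds, and the sample data are all hypothetical, not recommended values.

```python
from dataclasses import dataclass


@dataclass
class ServiceCost:
    name: str
    monthly_cost: float     # hypothetical: spend in your billing currency
    avg_utilization: float  # hypothetical: 0.0-1.0 share of provisioned capacity in use


def review_candidates(services, max_utilization=0.3, min_cost=1000.0):
    """Return costly, underutilized services, largest idle spend first."""
    flagged = [
        s for s in services
        if s.avg_utilization < max_utilization and s.monthly_cost >= min_cost
    ]
    # Idle spend approximates the cost of capacity that is paid for but unused.
    return sorted(
        flagged,
        key=lambda s: s.monthly_cost * (1 - s.avg_utilization),
        reverse=True,
    )


# Made-up sample data, for illustration only.
services = [
    ServiceCost("search", 8000.0, 0.15),
    ServiceCost("reports", 3000.0, 0.10),
    ServiceCost("checkout", 12000.0, 0.70),
    ServiceCost("legacy-cron", 200.0, 0.05),
]

for s in review_candidates(services):
    print(s.name, round(s.monthly_cost * (1 - s.avg_utilization), 2))
```

The output is a shortlist for a conversation with the owning teams, not a cut list: low utilization can be a deliberate choice for headroom or resilience.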

Governed Low-Code and No-Code

Low-code and no-code tools are no longer experimental. Finance teams are building approval workflows in Power Apps. Operations is stitching systems together with Zapier. Meanwhile, HR is running internal processes on AppSheet. These systems increasingly touch real production data and business outcomes, but they often live outside standard engineering ownership and review.

That’s where the risk shows up. When a low-code workflow breaks, there’s often no clear owner or test coverage. Monitoring that explains what failed or why is almost nonexistent. Security and compliance controls are frequently weaker than in core systems. In practice, you’ll likely see shared accounts and broad permissions with little or no auditability. Over time, these tools also create operational fragility. A single power user becomes the de facto maintainer, and when that person leaves or the business changes, the workflow becomes untouchable. None of this is theoretical. These systems already sit on critical paths.

The right move isn’t to ban low-code but to govern it. Start by inventorying business-critical low-code assets, defining basic guardrails around data access and environments, and creating a lightweight review and escalation path for workflows that affect revenue, compliance, or customer experience. The goal is visibility and ownership, not bureaucracy.
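The inventory step can begin as something as small as a structured list plus a triage rule. The sketch below assumes each workflow record notes which business areas it touches and a few governance facts. The field names (`touches`, `owner`, `shared_account`, `audit_log`) and the sample records are hypothetical placeholders for whatever your inventory actually captures.

```python
# Areas the article treats as warranting review; the exact labels are an assumption.
CRITICAL_AREAS = {"revenue", "compliance", "customer_experience"}


def triage(workflows):
    """Return (name, gaps) for business-critical workflows with governance gaps."""
    findings = []
    for wf in workflows:
        if not CRITICAL_AREAS & set(wf["touches"]):
            continue  # not on a critical path; leave it out of this review
        gaps = []
        if not wf.get("owner"):
            gaps.append("no named owner")
        if wf.get("shared_account"):
            gaps.append("runs on a shared account")
        if not wf.get("audit_log"):
            gaps.append("no audit log")
        if gaps:
            findings.append((wf["name"], gaps))
    return findings


# Made-up inventory entries, for illustration only.
inventory = [
    {"name": "invoice-approvals", "touches": ["revenue"], "owner": None,
     "shared_account": True, "audit_log": False},
    {"name": "lunch-poll", "touches": ["internal"], "owner": None,
     "shared_account": True, "audit_log": False},
    {"name": "onboarding", "touches": ["compliance"], "owner": "hr-ops",
     "shared_account": False, "audit_log": True},
]

for name, gaps in triage(inventory):
    print(name, "->", "; ".join(gaps))
```

Keeping the rule this small matches the goal stated above: surface ownership and visibility gaps on critical paths without turning every low-code workflow into a review item.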

Trends Not Worth Leading With

Some emerging technologies are strategically relevant but operationally premature for most enterprises. Quantum computing and advanced 5G use cases carry high uncertainty and low near-term delivery impact. They rarely change reliability, cost, or execution risk this year. Track them through standards progress, regulatory shifts, or proven industry adoption, and reassess only when they begin to constrain security, latency, or compliance in your core systems.

Next 90 Days: Checklist

Pick 2–3 items. Don’t try to do all of them at once.

- AI-assisted development: Publish a one-page AI usage policy and have reviewers tag AI-heavy PRs for a quarter.

- Secure delivery: Name an owner for CI/CD security and review who controls pipelines, secrets, and dependency rules.

- Cloud-native reliability: Identify the top 2–3 “noisy” services (incidents, MTTR) and decide whether to merge or simplify boundaries.

- CI/CD and automation: Ask each key team to list manual steps from commit to prod; automate at least one high-friction approval or script.

- Cost and low-code governance: Review spend and tooling for your top platforms with finance, and inventory business-critical low-code workflows touching revenue or compliance.