Executive summary

This article argues that the gap between teams seeing 10x productivity gains from AI and those seeing marginal returns comes down to codebase quality, not tooling. It outlines the four characteristics of AI-ready code, explains how spec-first development works in practice, and makes the economic case for treating engineering rigor as infrastructure rather than overhead.

Two statistics about AI in software development seem impossible to reconcile.

The first: most organizations see no measurable ROI from their AI coding tools. At best, developers see modest returns or uneven results. At worst, AI tools are generating defects at an unprecedented rate, and your best engineers feel like their job has been reduced to reviewing AI-written code.

The second: a small percentage of teams report productivity gains of 10x or more. Features that would have taken days or months ship overnight. These teams aren’t sacrificing quality for speed. They’re maintaining it, and in many cases improving it.

Both statistics are accurate. The difference between them is the codebases they’re operating on.

The Old Economics of Software Engineering

For decades, we operated under an economic model that favored trading away quality. Quality was expensive, and expensive meant slow. Writing comprehensive test suites doubled initial development time. Maintaining thorough documentation required ongoing effort that didn’t ship features. Enforcing strict modularity constrained architectural choices and slowed early iterations. Every investment in rigor was a direct tax on delivery speed.

That created a predictable incentive structure. Given the fully loaded hourly cost of a human engineer, every hour spent on discipline was an hour not spent on delivery. The tedious infrastructural work of testing, documentation, and refactoring was exactly the work your best engineers didn’t want to do. Management wanted time spent on the obviously productive work of shipping features, and engineers gravitated toward work that was more interesting and challenging. No one was fighting for the investment in quality.

Technical debt became a tool. Taking on debt to ship faster and build what the market needed now rather than what the architecture demanded was a rational decision. Every engineering leader has made these tradeoffs. “We’ll refactor later” was optimization under constraints.

The best teams actively managed their portfolio of tech debt and took it on deliberately, paying it down strategically. But nearly every engineering team I’ve worked with had a mountain of debt their leaders looked at and thought: if only. The investment never materialized under constant pressure to ship.

How AI Has Inverted the Economics of Code Quality

AI has inverted the economics of code quality on both sides of the equation: the return on engineering rigor has increased dramatically, while the cost of achieving it has dropped just as sharply.

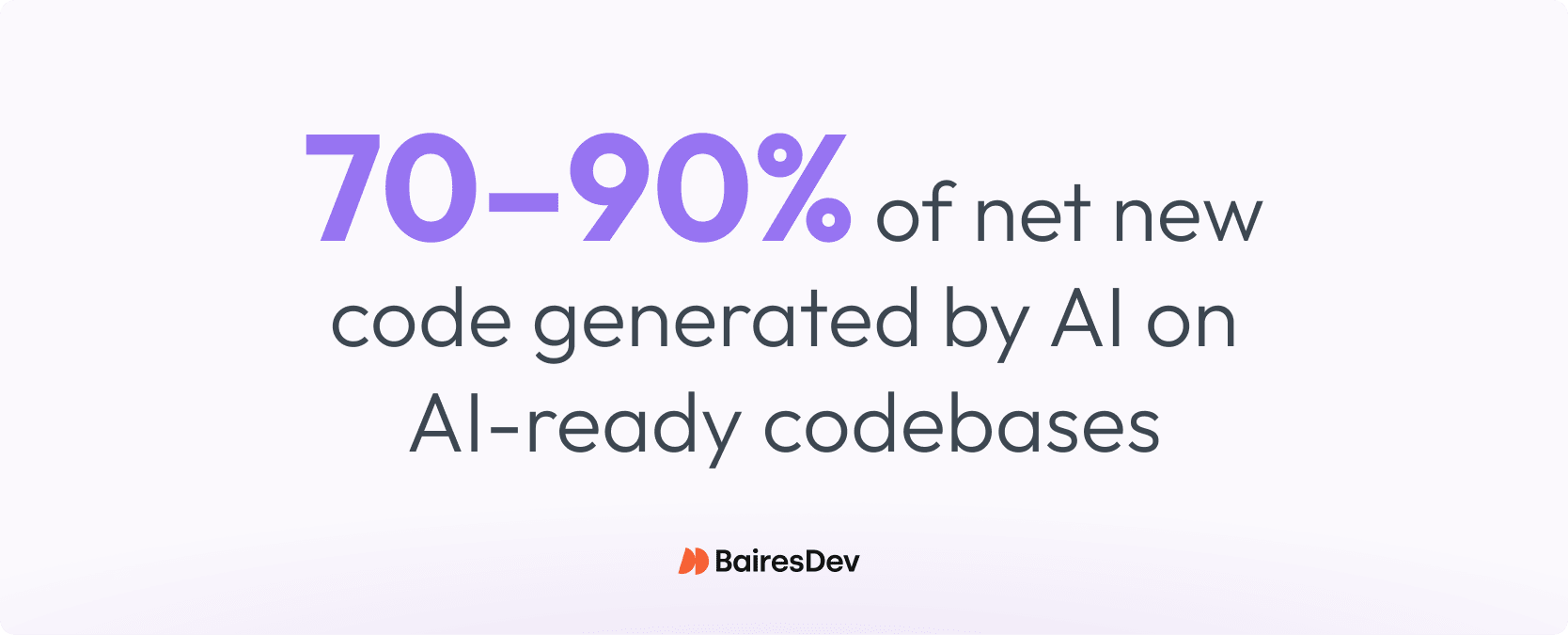

The return on discipline has exploded. When your codebase is structured for AI to work with effectively, AI can generate the majority of your code reliably at scale. Based on my experience, teams working on AI-ready codebases consistently see AI generating 70-90% of net new code, not just on toy examples but on production features in codebases with real complexity. A clean codebase is no longer a nice-to-have. It’s a force multiplier that changes what a small team can accomplish, and the advantage compounds. Each cycle of clean code makes AI more effective, which produces better code and consequently makes the next cycle faster.

The other side of the equation has changed just as much. The work that makes a codebase AI-ready (refactoring, writing tests, documenting interfaces, enforcing consistency, reducing duplication) is exactly the kind of work AI handles well. Writing a comprehensive test suite for a module used to cost as much as, or more than, building the module itself. Now AI can generate that suite in an almost fully automated fashion, with human review. The tedious infrastructural work your best engineers never wanted to do? AI doesn’t mind. And your engineers get what they always wanted: to live and work in a codebase where those things are actually done.

The return on investment in engineering rigor has changed by orders of magnitude. The numerator went up. The denominator went down. What was once a hard-to-justify luxury is now the highest-ROI investment an engineering leader can make.

What AI-Ready Codebases Actually Look Like

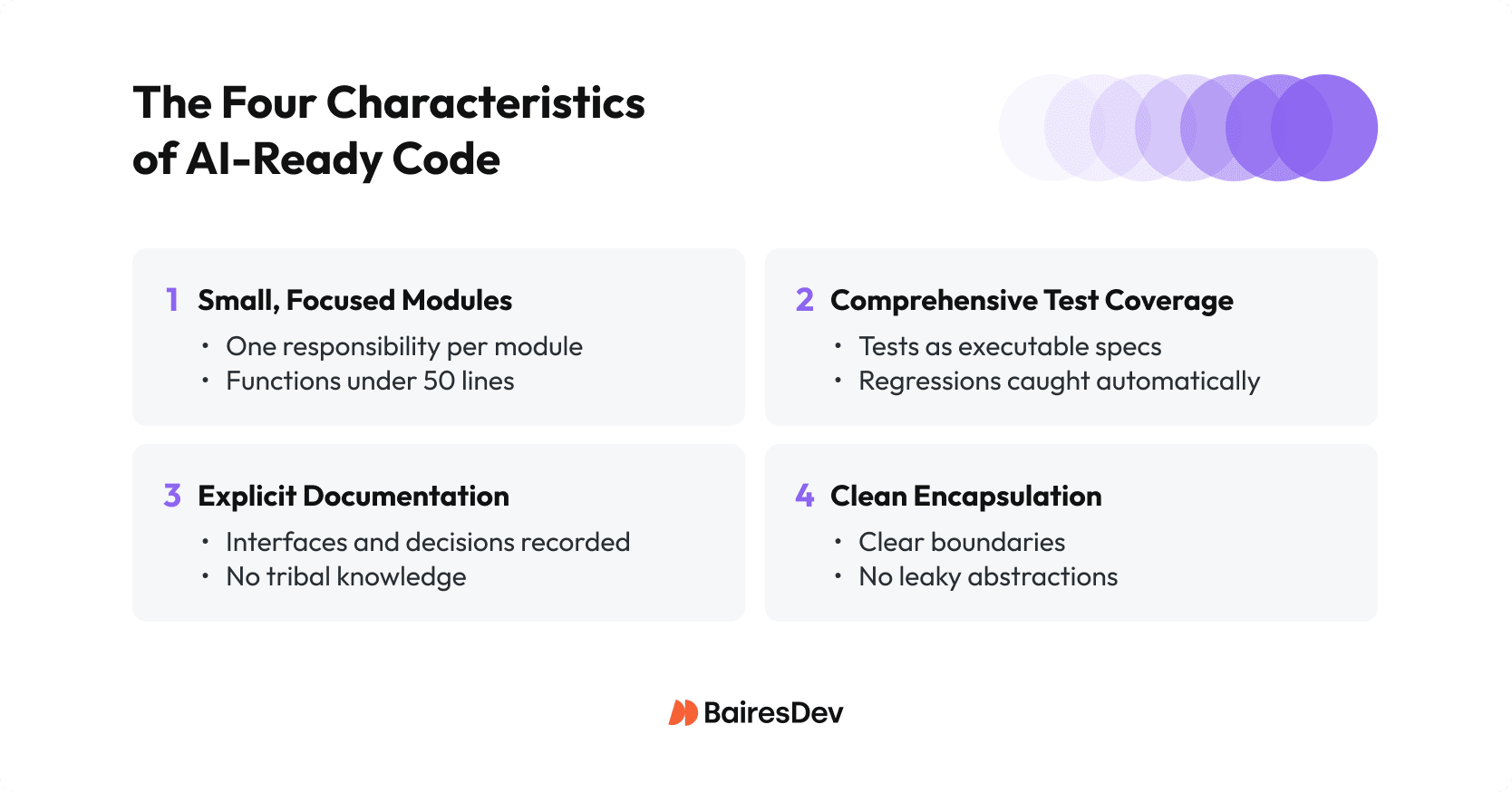

AI-ready codebases share four characteristics: small, focused modules; comprehensive test coverage; explicit documentation; and clean encapsulation. None of these are new principles. Every engineering leader already knows them. What’s changed is that a thorough, uncompromising application of them is now economically justified in a way it never was before.

Every one of these principles was always good for humans too. We just couldn’t afford to implement them fully, so we leaned on the crutch of humans being clever enough to figure things out anyway. AI doesn’t have that crutch.

Small, focused modules

Each module should do one thing and do it completely. Functions under 50 lines, files under 200. When an AI assistant can read your entire module without truncation, it reasons about it correctly. When a module requires understanding three other 5,000-line files to modify safely, AI will hallucinate the parts it can’t fit in context. No amount of model improvement changes this basic constraint.
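As a rough illustration, the size limits above can be checked mechanically. The sketch below is one possible approach, not a prescribed tool: it uses Python's standard `ast` module, takes the 50-line and 200-line thresholds from the text, and treats the function name `size_violations` and the message format as assumptions made for this example.

```python
import ast

MAX_FUNCTION_LINES = 50  # "functions under 50 lines", from the text above
MAX_FILE_LINES = 200     # "files under 200", from the text above


def size_violations(source: str, filename: str = "<module>") -> list[str]:
    """Return one message per size limit that the given source reaches or exceeds."""
    problems = []

    # Whole-file check: count physical lines.
    line_count = len(source.splitlines())
    if line_count >= MAX_FILE_LINES:
        problems.append(f"{filename}: file has {line_count} lines")

    # Per-function check: end_lineno is available on Python 3.8+.
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            length = node.end_lineno - node.lineno + 1
            if length >= MAX_FUNCTION_LINES:
                problems.append(
                    f"{filename}:{node.lineno}: function {node.name} has {length} lines"
                )
    return problems
```

A check like this could run in CI or as a pre-commit hook, so that oversized modules are flagged before an AI agent (or a human) is asked to modify them.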

Comprehensive test coverage

Tests are executable specifications that define what “correct” means in terms a machine can verify. Aim for comprehensive coverage of the interfaces and behaviors AI will be asked to modify. When AI generates code, tests validate it automatically. When AI refactors, tests catch regressions immediately. Without tests, you can’t trust AI output. With them, trust becomes routine.
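To make "executable specification" concrete, here is a minimal sketch. The function `normalize_email` and its rules are hypothetical, invented only for this illustration; the point is that each test states one behavior in a form a machine can verify, whoever (or whatever) wrote the implementation.

```python
def normalize_email(raw: str) -> str:
    """Hypothetical function under test: trim whitespace and lowercase an address."""
    cleaned = raw.strip().lower()
    if cleaned.count("@") != 1:
        raise ValueError(f"not a valid email address: {raw!r}")
    return cleaned


# Each test below reads as one line of the specification.

def test_strips_whitespace_and_lowercases():
    assert normalize_email("  Ada@Example.COM ") == "ada@example.com"


def test_is_idempotent():
    once = normalize_email("Ada@Example.com")
    assert normalize_email(once) == once


def test_rejects_input_without_exactly_one_at_sign():
    for bad in ("not-an-email", "a@b@c"):
        try:
            normalize_email(bad)
        except ValueError:
            continue
        raise AssertionError(f"expected ValueError for {bad!r}")
```

The tests are written as plain functions with `assert`, so they run under a test runner such as pytest or can simply be called directly.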

Explicit documentation

Everything non-obvious should be written down: every interface, every architectural decision, every convention the codebase follows. Humans could navigate tribal knowledge, undocumented conventions, and implicit assumptions — eventually. AI simply can’t. But neither could your new hires, not quickly. The documentation you couldn’t justify writing has always been the documentation everyone needed.
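One small, automatable slice of this is making sure every public function and class carries a docstring. The sketch below is an assumption about how a team might check that in Python, using the standard `ast` module; the helper name `undocumented_public_names` is made up for this example, and a docstring check covers only interfaces, not architectural decisions or conventions.

```python
import ast


def undocumented_public_names(source: str) -> list[str]:
    """Return the names of public functions and classes that have no docstring."""
    missing = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef)):
            # Names starting with an underscore are treated as private by convention.
            is_public = not node.name.startswith("_")
            if is_public and ast.get_docstring(node) is None:
                missing.append(node.name)
    return sorted(missing)
```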

Clean encapsulation

Components should have clear boundaries, explicit contracts, and no leaky abstractions. When AI modifies one component, it shouldn’t need to understand or accidentally break everything it touches.
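A minimal sketch of an explicit contract, assuming Python and its standard `typing.Protocol`. The payment domain and every name here (`PaymentGateway`, `InMemoryGateway`, `checkout`) are hypothetical; what matters is that the caller depends only on the declared interface, so a change inside one implementation cannot leak into its callers.

```python
from dataclasses import dataclass
from typing import Protocol


class PaymentGateway(Protocol):
    """The explicit contract: the only thing callers may rely on."""

    def charge(self, customer_id: str, amount_cents: int) -> str:
        """Charge the customer and return a receipt id."""
        ...


@dataclass
class InMemoryGateway:
    """One implementation; its counter is an internal detail, not part of the contract."""

    _next_id: int = 1

    def charge(self, customer_id: str, amount_cents: int) -> str:
        if amount_cents <= 0:
            raise ValueError("amount_cents must be positive")
        receipt = f"r-{self._next_id}"
        self._next_id += 1
        return receipt


def checkout(gateway: PaymentGateway, customer_id: str, amount_cents: int) -> str:
    """Depends only on the contract, never on a concrete gateway's internals."""
    return gateway.charge(customer_id, amount_cents)
```

With a boundary like this, an agent asked to modify `InMemoryGateway` needs to read only that class and the protocol, not every caller.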

None of this is new to software engineering. What’s new is that maintaining these standards is now nearly free, while ignoring them is prohibitively expensive.

The New Spec-First Workflow for Agentic Software Engineering

The workflow shift is as significant as the economic shift. It demands a fundamentally different approach to how engineering teams operate. In most organizations where AI is working at all, it’s working as sophisticated autocomplete. The developer thinks and types, and AI suggests completions. Human attention remains constant throughout. This delivers 15-30% speed gains: real value, but not transformation.

The teams getting 10x returns work differently. A developer spends their time on specification, meaning requirements, edge cases, interfaces, acceptance criteria, and invariants. The partner in that work is an AI agent that can read the codebase faster than any human, search across modules, understand existing patterns, and help make architectural decisions. The design work is interactive and collaborative, with AI as a thinking partner. Then AI autonomously generates the implementation, writes tests, and validates against the specification. The developer reviews output at the level where human judgment matters, such as architecture, business logic, and edge cases, and iterates until the work meets standards.

This is spec-first development. The specification is the work: roughly 80% planning, 20% review, and nearly zero manual coding. It only works when the codebase supports it. When it doesn’t work, that’s a failure of context. An AI agent dropped into a messy codebase does exactly what a new engineer would do: it makes its best guess about which patterns to follow, implements the feature as well as it can, and confidently tells you it’s done.

When the codebase has no clear organizing principle, no documented architecture, no consistent patterns, the best guess is often wrong. Against a clean codebase, one that is self-describing and self-enforcing of its own rules, AI doesn’t have to guess. The architecture itself becomes the instruction.

This is why the tech debt investment is non-negotiable. The new workflow requires making that investment and then maintaining near-zero tech debt as a continuous invariant. Think of it as eventually consistent. Every commit might introduce some small debt, but you’re continuously monitoring and paying it down rather than letting it accumulate. AI amplifies whatever it finds. If it finds debt, it compounds debt. If it finds clean patterns, clear rules, and specific instructions embedded in the codebase context, it will simply follow them.
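One common way to stop debt from accumulating is a "ratchet" check in CI. This is a swapped-in technique, not something the workflow above prescribes, and it is stricter than the "eventually consistent" framing because it rejects any increase outright. The metric names and both function names below are hypothetical.

```python
def ratchet(baseline: dict[str, int], current: dict[str, int]) -> list[str]:
    """Return a message for every debt metric whose count rose above its baseline."""
    regressions = []
    for metric, count in sorted(current.items()):
        allowed = baseline.get(metric, 0)  # unknown metrics start at zero
        if count > allowed:
            regressions.append(f"{metric}: {count} > baseline {allowed}")
    return regressions


def tightened(baseline: dict[str, int], current: dict[str, int]) -> dict[str, int]:
    """New baseline: never looser than before, lowered wherever debt was paid down."""
    metrics = baseline.keys() | current.keys()
    return {m: min(baseline.get(m, 0), current.get(m, 0)) for m in metrics}


# Hypothetical counts, for illustration only.
baseline = {"lint_warnings": 12, "untested_modules": 3}
current = {"lint_warnings": 10, "untested_modules": 4}

print(ratchet(baseline, current))    # ['untested_modules: 4 > baseline 3']
print(tightened(baseline, current))  # lint_warnings drops to 10, untested_modules stays 3
```

A team that prefers to tolerate small, temporary increases could add a per-metric allowance instead of failing on any rise.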

What This Looks Like in Practice

Here’s a recent example. We needed to redesign an onboarding flow. Partner integrations had changed, new data points needed collecting, workflow steps had to be reordered, and the UX needed updating. It was a cross-cutting change touching the domain model, persistence layer, database migrations, API operations, and frontend components.

A cross-functional conversation between product, engineering, and design established what was changing at a high level. The engineer then took those notes into a working session with an AI coding agent that had full codebase context. Over a few hours, they produced a detailed technical spec with every layer accounted for, edge cases identified, and work broken into precisely scoped tasks with clear acceptance criteria.

That spec was detailed enough to be directly implementable and high-level enough for the full team to review before any code was written. That alignment step is where most traditional project risk disappears.
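The article does not show the spec itself, so the following is only a sketch of what a structured form might look like, assuming Python dataclasses. The `Spec` and `Task` types, the task titles, and the acceptance criteria are all hypothetical; only the layer names come from the example above.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    """One precisely scoped unit of work with its own acceptance criteria."""

    title: str
    layer: str
    acceptance_criteria: list[str] = field(default_factory=list)


@dataclass
class Spec:
    """A reviewable spec: a summary plus the tasks it breaks down into."""

    summary: str
    tasks: list[Task] = field(default_factory=list)

    def unready_tasks(self) -> list[str]:
        """Titles of tasks with no acceptance criteria, so not yet ready to hand off."""
        return [t.title for t in self.tasks if not t.acceptance_criteria]


# Hypothetical content, for illustration only.
spec = Spec(
    summary="Redesign onboarding flow",
    tasks=[
        Task(
            "Add new data points to the domain model",
            "domain model",
            ["New fields validate on creation", "Existing records load unchanged"],
        ),
        Task("Reorder workflow steps in the API", "API operations"),
    ],
)

print(spec.unready_tasks())  # ['Reorder workflow steps in the API']
```

Keeping the spec in a structured form like this makes the "no task without acceptance criteria" rule something the team can check before any implementation starts.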

Writing a great spec isn’t new. But it used to be expensive enough that it mostly just didn’t get done. Now it’s cheap and it makes the work better for both the AI and the humans involved.

Implementation was largely automated from there. Each task was coded, tested, and submitted for review through AI-driven cycles. The engineer reviewed, flagged a small amount of rework, and released. A change that would have taken weeks was planned in hours and delivered in days.

How Software Engineering Roles Are Changing in the AI Era

If AI generates most of the code, what do engineers do? More than ever, but different work. Engineers spend more time defining what to build and less time manually building it. That concentrates human judgment exactly where it matters most.

What engineers do more of:

- Writing precise specifications: requirements, edge cases, acceptance criteria

- Making architectural decisions about boundaries, contracts, and tradeoffs

- Reviewing and validating AI output for correctness, security, and design quality

- Exercising judgment about what to build and why

What engineers do less of:

- Mechanical implementation: boilerplate, CRUD operations, scaffolding

- Repetitive test writing once patterns are established

- Syntax recall and API memorization

The core shift is that coding is becoming specifying. The ability to articulate precisely what you want in enough detail that AI can implement it correctly is becoming a primary technical skill. Most developers have spent careers turning vague requirements into working code through iteration and exploration. They never had to write specifications detailed enough for someone else to implement without questions, because they were the ones implementing.

This rewards the systems thinking, architectural judgment, and engineering rigor that senior engineers have spent careers developing. The developers who understand why code should be structured a certain way are more valuable than ever. The ones who only know how to type it are the ones at risk.

Why the Productivity Gap Between Teams Is Widening

The productivity gap between teams is widening because AI amplifies whatever it finds. Teams that invest their AI-generated capacity back into codebase quality enter a compounding flywheel. Teams that don’t reinvest get marginal gains at best and net negative output at worst.

AI gives any team that chooses to use it a surge of new engineering capacity. It will write a whole lot of code faster than you’ve ever seen it done before. The decisive question is what you do with that surplus.

The teams seeing 10x returns invest a portion of it in refactoring, testing, documentation, and architectural cleanup, all of which make AI progressively more effective. Those investments compound quickly. The more you improve the codebase, the more AI can do, which frees more capacity to improve the codebase further. Teams on this flywheel pull further ahead with every cycle.

I’ve seen this play out at both ends. With greenfield projects, we’ve set up zero tech debt as an automatically maintained invariant from day one: fully tested, modular, cleanly documented, with AI continuously encouraged to uphold those standards. Every new project is an opportunity to give your engineers the codebase they’ve always wished for.

With legacy codebases stretching back years, there’s no magic bullet and you do have to put the work in. But the scope shouldn’t be overwhelming. Go module by module, following the Boy Scout rule radically. Every time you touch a module to add a feature, first invest the hours or days to bring it up to standard. Then, implement the feature on the clean foundation, which is always faster than fighting the messy version. In both cases, individual engineer throughput of 5-10x is no exaggeration. The quality isn’t just maintained; it’s dramatically better. The throughput gains and the quality gains are two sides of the same coin.

If your team isn’t making this investment, they face the opposite dynamic. AI provides marginal assistance while developers struggle with the same maintenance burden. In the worst cases, AI is net negative for productivity, generating more bugs and tech debt than humans were able to produce unaided. Code volume goes up, but net positive output goes down. These are the teams rejecting AI and saying it doesn’t work.

The outcomes you get are a consequence of where you choose to invest. The teams seeing 10x returns made a deliberate decision to treat code quality as economic infrastructure, and they’re being rewarded for it faster than at any point in the history of software engineering.

Key Takeaways

- The difference between 10x AI returns and marginal gains isn’t the tools — it’s the codebase.

- AI-ready code is modular, well-tested, explicitly documented, and cleanly encapsulated — principles every engineering leader already knows but could rarely afford to enforce fully.

- Spec-first development shifts engineers from writing code to specifying it, concentrating human judgment where it matters most.

- AI amplifies whatever it finds: clean codebases compound into productivity flywheels; messy ones compound into debt.

- The investment in code quality has never had a higher ROI — and the window to act is now.