Executive Summary

Most enterprises run dozens of AI pilots but see little P&L impact. This article explains why AI value stays abstract at scale and why POCs rarely survive contact with production. It also covers what leadership decisions actually close the gap, including data foundations, operating models, governance, and a practical ROI playbook.

AI is generating real impact across the industry, but for most large, non-digitally native enterprises, the value story is still measured in POCs, not P&L. The pilots proliferate. The bottom-line movement doesn’t.

As Chief Data and AI Officer at Carrier, I’ve spent years navigating exactly that gap. In my experience, realizing ROI from AI has little to do with the models themselves and everything to do with data foundations, operating models, change management, and leadership discipline.

In this piece, I’ll walk through why AI value stays abstract at scale, why most proofs of concept never graduate to production, and what leadership decisions actually move the needle. You’ll also walk away with a practical playbook you can apply now.

Why AI Value Stays Abstract at Scale

Most enterprises run dozens of AI pilots simultaneously, yet only a small minority see sustained, measurable bottom-line impact. Turning AI’s technical potential into tangible value inside complex, legacy-heavy environments is where organizations consistently struggle.

The Infrastructure Problem

First, data foundations are fragmented. In many companies you don’t “have an ERP”; you inherit tens of ERPs and legacy CRMs, homegrown MESs and other complex data estates spanning decades of technical debt. You can build a beautiful demo on an export. Converting that into resilient pipelines, harmonized semantics, lineage, and governance across that sprawl is where most initiatives stall, long before the model becomes relevant. The fragmentation goes beyond your own systems: vendors are compounding it.

Second, vendor-driven sprawl muddies the value story. Hyperscalers, software application providers, data platforms and SaaS vendors are all shipping their own copilots and agents. They bundle them into existing contracts and encourage on-platform experimentation at the compelling price of “free.” The orchestration layer is commoditizing, but the result is duplicative assistants across platforms, each with its own adoption curve, governance surface area, and cost line item. The pattern mirrors the BI and SaaS sprawl of previous technology cycles. The cleanup cost lands in the same place too.

The Organizational Problem

Third, most organizations live inside an inverted pyramid of AI investment. Roughly 70% of the work is people and process change, 20% is tooling, and maybe 10% is the models themselves. Budget and attention tend to flow in the opposite direction. We chase fine-tuning and model benchmarks while underfunding process redesign, change management, and incentives, which is exactly where most initiatives break down. Industry research is converging on this point: the majority of AI failures trace back to organizational and process issues, not model performance.

Finally, budget and talent constraints plus rapid ecosystem churn make continuity hard. AI is still treated as discretionary spend in many enterprises. Leaders turn over, macro headwinds appear, and contractor-heavy delivery models (80%+ at some firms) erode institutional memory. Tools that survived a six-month evaluation get challenged by the next model release three months later.

Each of these problems is solvable. The following sections walk through where solutions tend to break down first, what leadership needs to decide, and how to build the operating discipline that turns pilots into P&L impact.

Why AI Proofs of Concept Rarely Deliver Real ROI

The earliest breakdown point is almost always the proof of concept. POCs are necessary, but they are a terrible proxy for enterprise-scale value. Industry data bears this out: a large majority of AI pilots never make it to production, and 70–90% of initiatives fail to scale into recurring operations, according to research from MIT Sloan. My experience matches that almost perfectly.

POCs are designed to skip the hard parts. They typically run on static extracts, ignore data quality problems, and hand-wave over connectivity to upstream source systems and downstream applications. At scale, you need robust pipelines from source to semantic layer, with cataloging, quality checks, lineage, monitoring, and proper SRE/DevOps ownership. That’s where most “successful” POCs quietly die.

Change design is usually missing as well. During a POC, we rarely re-engineer processes, roles, and controls. We just prove the model can do something interesting. Post-POC, you still need to redesign permissions, approvals, training paths, and incentives so that people actually rely on the new workflow instead of treating it as a sidecar. Without that, usage and impact never escape a small pilot group.

Ownership and funding are equally fragile. Many AI pilots have no clear value owner, no FP&A-validated method for measuring impact, and no committed run budget for MLOps and support. My non-negotiable stance is simple: every new AI tool must come in with a business owner, a baseline, a value hypothesis, and a concrete method for measuring ROI after go-live. If that can’t be articulated at intake, we don’t start.
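One way to make that intake rule mechanical is a simple completeness check. The sketch below is illustrative only: the `IntakeRequest` fields and the `missing_intake_fields` and `can_start` helpers are hypothetical names, not a description of any actual intake system.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass
class IntakeRequest:
    """Hypothetical intake record; field names are assumptions for illustration."""
    name: str
    business_owner: str = ""
    baseline: str = ""            # current-state metric, e.g. a cost or cycle time
    value_hypothesis: str = ""    # expected change versus the baseline
    measurement_method: str = ""  # how ROI will be measured after go-live


def missing_intake_fields(request: IntakeRequest) -> List[str]:
    """Return the names of required fields that are blank."""
    return [
        f.name
        for f in fields(request)
        if not str(getattr(request, f.name)).strip()
    ]


def can_start(request: IntakeRequest) -> bool:
    """An initiative starts only when every required field is filled in."""
    return not missing_intake_fields(request)
```

A request with only a name and an owner would be rejected, and the list of missing fields tells the requester exactly what to bring back.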

Finally, governance tends to arrive late and all at once. Security, compliance, and performance assessments show up at the eleventh hour, after stakeholders are already excited. In my organization, we formalized an AI Review Board (AIRB) with clearly defined risk measures across GRC, performance, and commercial ROI, and moved that to the top of the funnel. Ideas that can’t clear that bar early don’t enter the pipeline.

The Leadership Decisions That Unlock AI ROI

Most of what I’ve described above is solvable, but only if certain leadership-level decisions get made early and with teeth. They often don’t.

The first is value ownership and EPS linkage. I consider EBIT impact, validated by FP&A, the gold standard for AI value. When we set a 2026 AI value target of $200 million, we translated that into roughly 23 cents of earnings per share. That single number clarified priorities across business units far more than any “number of pilots” metric ever could.
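The translation from a dollar target to EPS is simple arithmetic: the earnings change, optionally after tax, divided by diluted shares outstanding. The sketch below is a rough illustration. The `eps_impact` function and its `tax_rate` parameter are assumptions, and the share count is only the figure implied by dividing the two numbers quoted above with no tax adjustment; it is not a reported value.

```python
def eps_impact(earnings_delta: float, diluted_shares: float, tax_rate: float = 0.0) -> float:
    """Earnings-per-share impact of an earnings change, in dollars per share."""
    if diluted_shares <= 0:
        raise ValueError("diluted_shares must be positive")
    return earnings_delta * (1.0 - tax_rate) / diluted_shares


# Figures from the text: a $200 million target and roughly $0.23 per share.
target = 200_000_000
stated_eps = 0.23

# Share count implied by those two numbers alone (assumes no tax adjustment).
implied_shares = target / stated_eps

print(f"implied shares: {implied_shares / 1e6:.0f} million")
print(f"EPS impact: ${eps_impact(target, implied_shares):.2f}")
```

The same function can be pointed at any business unit's contribution, which is what makes a single per-share number useful for comparing priorities.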

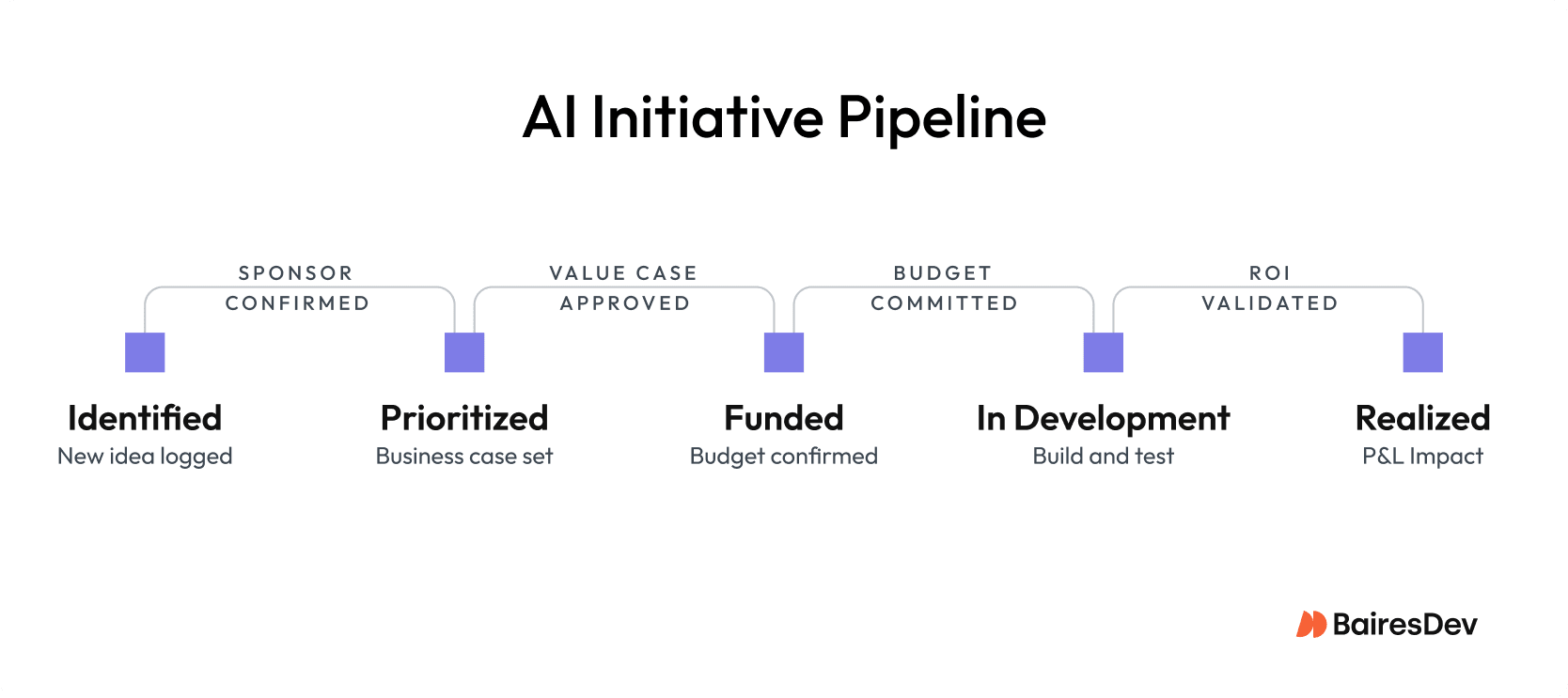

Next is the operating model. I’ve landed on a hub-and-spoke model: a strong central AI/ML COE plus cross-functional pods that own discovery → delivery → run. All work flows through a single intake and triage process that moves items from identified to prioritized to funded to in-development to realized, with explicit gates for sponsorship and value. That operating model is what prevents the roadmap from degenerating into a collection of interesting science projects.
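The intake flow described above can be pictured as a small state machine with a gate in front of each stage. This is a minimal sketch under stated assumptions: the stage names come from the text, but the specific gate conditions (`sponsor`, `value_case`, `budget`, `pod`, `validated_value`) are hypothetical placeholders.

```python
from enum import IntEnum


class Stage(IntEnum):
    IDENTIFIED = 1
    PRIORITIZED = 2
    FUNDED = 3
    IN_DEVELOPMENT = 4
    REALIZED = 5


# Hypothetical gate conditions: what must be true before entering each stage.
GATES = {
    Stage.PRIORITIZED: lambda item: bool(item.get("sponsor")),
    Stage.FUNDED: lambda item: bool(item.get("value_case")) and bool(item.get("budget")),
    Stage.IN_DEVELOPMENT: lambda item: bool(item.get("pod")),
    Stage.REALIZED: lambda item: bool(item.get("validated_value")),
}


def advance(item: dict) -> dict:
    """Move an item one stage forward if the gate for the next stage passes."""
    current = Stage(item["stage"])
    if current is Stage.REALIZED:
        return item  # already at the final stage
    next_stage = Stage(current + 1)
    if not GATES[next_stage](item):
        raise ValueError(f"gate not met for {next_stage.name}")
    return {**item, "stage": next_stage}
```

Because an item can only move one stage at a time, nothing reaches development without having passed the sponsorship and value gates first.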

Then there’s build–partner–buy and platform scope. Leaders need to be explicit about where each capability falls:

- Build and own capabilities that are strategic IP: pricing, forecasting, product analytics.

- Partner where system integrator accelerators can close the gap faster.

- Buy undifferentiated capabilities like AP/AR automation.

Without that clarity, you end up with overlapping tools that each deliver a slice of value but collectively dilute ROI.

Finally, leaders must treat change and incentives as first-class citizens. If you want 25,000 knowledge workers to adopt Copilot or Cursor, you can’t just “make it available.” Adoption and usage need to be tied to business-unit productivity goals, with training and support built into plans and progress reviewed monthly. AI tools should show up in performance dialogs alongside the rest of the business, not filed away in an IT dashboard.

4 Value Pools That Actually Move the P&L

In practice, I think about AI value as a pyramid of four pools: productivity, cost savings, growth, and product differentiation. We are moving up that pyramid over time.

1. Realized Productivity

Productivity is the broadest and noisiest layer. Roughly 95% of AI initiatives I see in the market are framed as productivity gains: faster coding, better document drafting, more efficient analysis. The catch is that most of this is “notional” value unless you change staffing plans, throughput targets, or customer SLAs. We apply risk-adjusted factors and insist on explicit mechanisms: did we avoid an incremental hire, increase throughput with the same headcount, or meaningfully improve NPS? If not, the productivity gain is interesting but not yet real.
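The distinction between notional and realized productivity can be expressed as a small rule. The sketch below is an assumption-laden illustration: the mechanism labels, the `risk_factor` haircut, and the `classify_productivity_value` function are hypothetical names chosen to mirror the questions above.

```python
# Hypothetical mechanisms that convert a productivity gain into realized value.
REALIZING_MECHANISMS = {"avoided_hire", "higher_throughput", "improved_nps"}


def classify_productivity_value(gross_value: float, risk_factor: float,
                                mechanism: str = "") -> dict:
    """Split a claimed productivity gain into realized and notional value.

    risk_factor is a 0-1 haircut applied to the claimed value (an assumption).
    """
    if not 0.0 <= risk_factor <= 1.0:
        raise ValueError("risk_factor must be between 0 and 1")
    adjusted = gross_value * risk_factor
    if mechanism in REALIZING_MECHANISMS:
        return {"realized": adjusted, "notional": 0.0}
    # No explicit mechanism: the gain is interesting but not yet real.
    return {"realized": 0.0, "notional": adjusted}
```

A claimed gain with no named mechanism lands entirely in the notional bucket, however large the headline number.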

2. Non-People Cost Savings

Non-people cost savings are clearer to measure and easier to defend. One of our most compelling examples is warranty claims. By using an AI agent to enforce policy and catch out-of-policy reimbursements, we unlocked on the order of $25 million in avoided spend. That is direct P&L impact with clear traceability and limited debate.

3. Growth

Growth is the pool where CEOs lean in. Take demand forecasting down to SKU level, or generative assistants that optimize building energy and space utilization. In both cases, AI is directly influencing revenue or margin. These are harder to design and validate, but easier to defend in front of a board once the models have a track record.

4. Product Differentiation

Product differentiation is the smallest pool today but the one with the highest moat. Embedding AI into the product itself, whether that is an intelligent service layer, adaptive pricing, or new data-driven features, creates value that competitors can’t easily replicate with off-the-shelf tools. This is where IP, data assets, and model strategy intersect.

Design Principles That Make ROI Real

What follows are the principles we’ve built and stress-tested at Carrier. They’re not a theoretical framework, but the actual operating decisions that shape how we run AI at scale.

Team Structure

At my company, we run a central COE plus pods that own discovery through run, with weekly sprints and a shared backlog that typically holds 180-plus items. We target something like 50 POCs a year but apply strict gating. If there’s no sponsorship, funding, or value case, there’s no build. A dedicated Value Office partnered with FP&A enforces standardized definitions (cost savings vs. cost avoidance), risk-adjusted multipliers, and NPV calculations for multi-year benefits, and surfaces all of this in board-level dashboards.
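For readers who want to see how risk-adjusted multipliers and NPV fit together, here is a minimal sketch of a standard discounted-benefit calculation. The function name, the end-of-year timing convention, and the way the multiplier is applied are assumptions for illustration, not the actual Value Office method.

```python
from typing import Sequence


def risk_adjusted_npv(annual_benefits: Sequence[float], discount_rate: float,
                      risk_multiplier: float = 1.0, upfront_cost: float = 0.0) -> float:
    """NPV of multi-year benefits, with each year's benefit scaled by a risk multiplier.

    Benefits are assumed to arrive at the end of years 1, 2, 3, ...
    """
    if discount_rate <= -1.0:
        raise ValueError("discount_rate must be greater than -1")
    present_value = sum(
        benefit * risk_multiplier / (1.0 + discount_rate) ** year
        for year, benefit in enumerate(annual_benefits, start=1)
    )
    return present_value - upfront_cost
```

Keeping the multiplier and the discount rate as explicit parameters makes it easier for Finance to standardize them across initiatives rather than letting each team pick its own.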

Technology

On model strategy, we maintain a model-garden posture (OpenAI, Anthropic, Gemini, and others). We prefer RAG patterns over aggressive fine-tuning, and match models to tasks by their strengths — Anthropic for linguistically intense legal work, Gemini for multimodal scenarios.
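A model-garden posture implies some routing layer that maps task types to providers and lets you swap them. The sketch below shows one possible shape. Everything in it is hypothetical: the `ModelRouter` class, the task and provider names, and the stub functions that stand in for real vendor clients.

```python
from typing import Callable, Dict

# A provider is modeled as any function from prompt text to response text.
Provider = Callable[[str], str]


class ModelRouter:
    """Routes task types to named providers so components can be swapped."""

    def __init__(self, default_provider: str) -> None:
        self._providers: Dict[str, Provider] = {}
        self._routes: Dict[str, str] = {}
        self._default = default_provider

    def register(self, name: str, provider: Provider) -> None:
        self._providers[name] = provider

    def route(self, task_type: str, provider_name: str) -> None:
        self._routes[task_type] = provider_name

    def complete(self, task_type: str, prompt: str) -> str:
        name = self._routes.get(task_type, self._default)
        if name not in self._providers:
            raise KeyError(f"no provider registered under {name!r}")
        return self._providers[name](prompt)


# Stub providers stand in for real vendor clients in this sketch.
router = ModelRouter(default_provider="general")
router.register("general", lambda p: f"[general] {p}")
router.register("legal", lambda p: f"[legal] {p}")
router.route("contract_review", "legal")
```

Calling code only names the task type, so replacing the provider behind `"legal"` is a one-line change in the registration rather than a rewrite of every caller.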

On data architecture, the layer is organized around producer/consumer-aligned data products, with a strong catalog and quality stack and selective real-time streaming where it actually matters. The goal is a harmonized semantic layer that can be reused across forecasting, pricing, and service rather than one-off pipelines per use case.

Governance

Our AI Review Board is a cross-functional governance committee with voting members from InfoSec, Compliance, Legal, and Audit. It operates as an enablement function that has pre-authorized dozens of tools and features with documented risk assessments, so teams can move quickly within those lanes. Security, compliance, performance, and commercial value evaluations run in a single pass to minimize overhead while raising the quality bar.

Leadership and Funding

AI impact is one of only a couple of digital metrics our board tracks regularly. We blend central platform funding with BU project dollars, ring-fence must-run spend (like cybersecurity and ERP), and insist on ROI at intake for discretionary AI spend. We have said no to seven-figure SaaS uplifts when we could achieve equivalent capability by composing what we already own via APIs and our no/low-code stack.

A Practical Playbook for Realizing AI ROI

The principles above describe how we’ve structured AI at Carrier. Translating that into something actionable for your own organization starts with a shorter list of decisions. These are the practices I’d put in front of any leader accountable for AI ROI today.

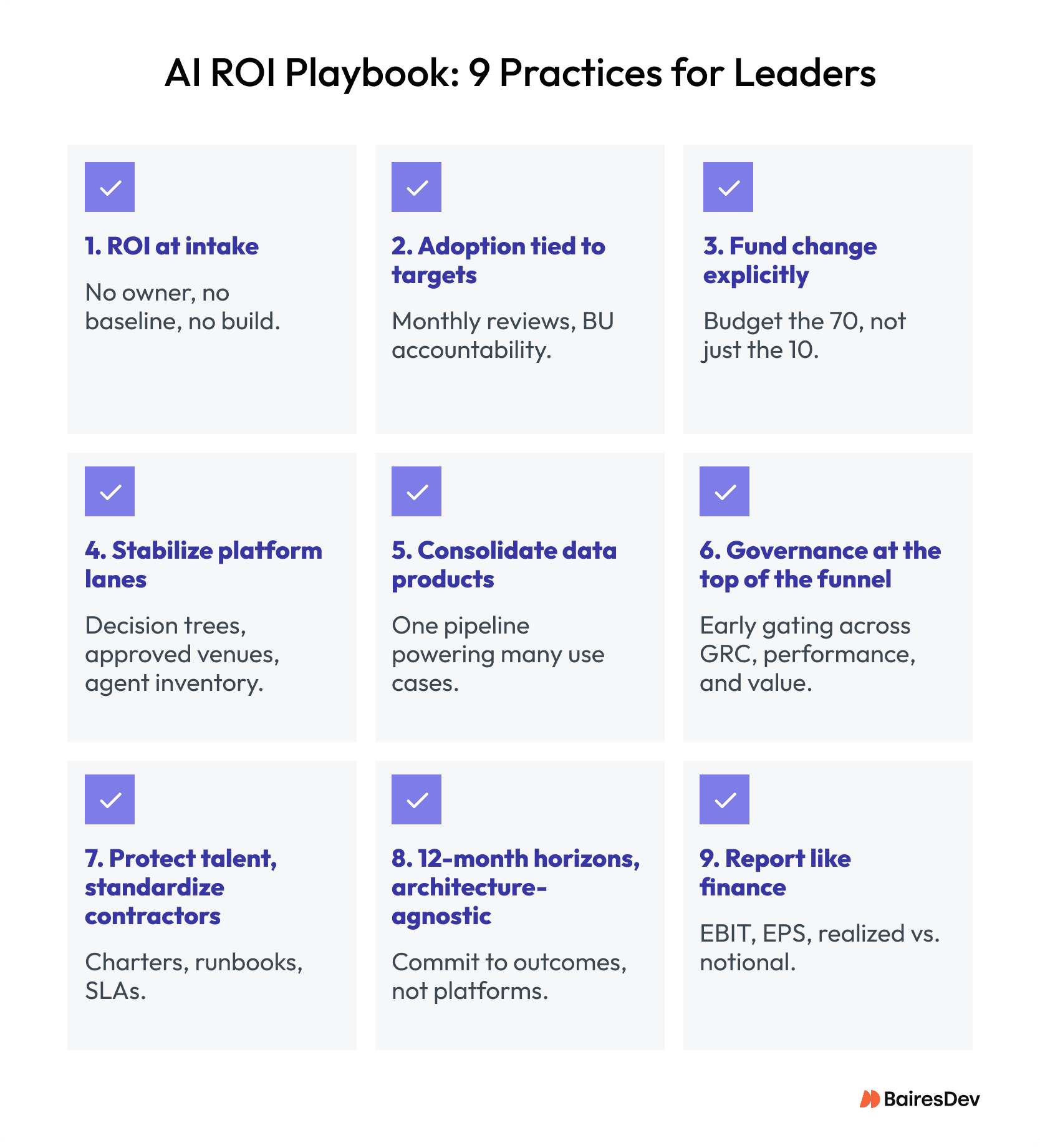

- Require ROI at intake. Every AI initiative must have a named business owner, a baseline, a value hypothesis, and a concrete measurement method before work begins.

- Tie adoption to targets. Assign tool-specific productivity, cost, or growth outcomes to business unit leaders and review progress monthly.

- Fund change explicitly. Budget for process redesign, training, and role changes up front. This is the “70” in the 70/20/10 equation.

- Stabilize platform lanes. Publish no-code/low-code/pro-code decision trees, limit agent creation to approved venues, and monitor your agent inventory actively.

- Consolidate data products. Prioritize data products that can power multiple top-line use cases, such as a forecasting data product that also supports pricing and service.

- Move governance to the top of the funnel. Keep your AI review gating early and expand it to cover performance and commercial value alongside GRC in one integrated process.

- Protect scarce talent, standardize contractors. Define pod charters, runbooks, and SLAs so that an 80% contractor model doesn’t erode your velocity or quality.

- Plan in 12-month horizons and stay architecture-agnostic. Commit to outcomes, not platforms. Build so you can swap models and components without rebuilding everything when the next release cycle hits.

- Report to the board like Finance. Anchor everything in EBIT and EPS, distinguish realized from notional value, apply risk-adjusted factors, and surface a small set of growth and differentiation wins every quarter.

If we do those things consistently, AI stops being a slide in the strategy deck and starts behaving like any other capital-intensive capability: one that earns its way into the P&L, quarter after quarter.

ROI Starts Here

The gap between AI activity and AI value is not closing as fast as the headlines suggest. If you work in a legacy enterprise, you may still be running the same playbook: more pilots, more tools, more vendor conversations, and roughly the same P&L impact as two years ago.

That doesn’t have to be the trajectory. Closing the gap requires treating AI like the operational discipline it actually is. The data foundations, operating models, governance structures, and leadership decisions covered in this piece are the fundamental work.

Key Takeaways

- AI ROI fails at scale due to data fragmentation and weak operating discipline, not model limitations.

- POCs succeed in isolation but break in production where data, workflows, and governance matter.

- No owner, no baseline, no ROI—value must be defined at intake.

- Leadership alignment on EBIT/EPS, operating model, and incentives determines impact.

- Productivity only counts when converted into measurable cost savings, growth, or differentiation.