For many engineering teams, the past year has been about testing AI’s potential. Code generation tools improved and internal experiments showed gains in productivity. But as organizations try to move beyond pilots, a new reality is setting in. The current challenge is whether teams can adapt how they build, validate, and ship software around AI. This shift is proving harder than expected.

That tension was at the center of our recent webinar, Cloud & Development Trends 2026: From Cloud Native to AI-First Engineering. Chris Haire, CTO at VSCO and BairesDev Fellow, moderated a conversation with Jason Davenport, Area Tech Lead for Developer Experience and Engineering at Google; Ravi Bellur, Founder at Procession Partners; and Saurabh Gupta, CTO at Credit One Bank. Together, they explored what it actually takes to move from cloud-native systems to AI-first engineering in production environments.

In this article, we’ll break down the webinar’s key insights on how AI is reshaping the way software is built. We’ll look at how the role of the engineer is changing and where most organizations get stuck when scaling beyond pilots. We’ll also explore what it takes to operationalize AI with clear accountability, governance, and trust.

AI-First Engineering Is an Operating Model Shift

One of the clearest themes across the conversation is that AI-first engineering is being misunderstood at a structural level.

Most teams approach it as an upgrade: new tools, better outputs, and faster delivery. But that framing starts to show its limits in practice. As organizations start to move beyond experimentation, they run into a different but eerily familiar friction. The systems around the work haven’t changed.

Chris Haire set the tone early by reframing the shift. AI is no longer something layered onto applications. It’s becoming embedded in how software is built and how systems operate. That distinction matters. Layered technologies can fit into existing workflows, but embedded ones force those workflows to change.

What makes this shift difficult is the assumptions most organizations are built on. For the past decade, cloud-native architectures defined how teams build and scale software. AI is now challenging those assumptions.

Jason described this as a move away from isolated tools toward integration across the entire development lifecycle. The early focus on AI-assisted code generation is already giving way to something broader. AI is supporting system design, navigating large codebases, assisting with testing, and even shaping incident response. “It’s about integrating AI into the workflow itself,” Jason explained.

That shift turns AI into infrastructure, and that’s where most organizations stall. Ravi Bellur pointed out that many teams are still inserting AI into existing processes without rethinking how work actually moves. The result is more output with the same bottlenecks. “It’s not just about deploying models,” he said. “It’s about changing how decisions are made, how teams are structured, and how work is validated.”

AI-first engineering reshapes how work moves. Decision-making, validation, and coordination all shift with it. Without that change, the organization becomes the constraint.

The Role of the Engineer Is Being Rewritten

AI-first engineering also changes what engineers actually do, and that shift is visible in how work is structured. Engineers spend less time writing code line by line and more time defining intent, setting constraints, and guiding systems that generate output at scale.

Jason Davenport described this as a move toward orchestration. Engineers are shaping how solutions are produced, and the work becomes more about direction. This apparent productivity gain introduces a new tension: as output increases, understanding does not scale at the same rate.

Engineers are now reviewing, validating, and making decisions on code they didn’t write. That changes the nature of ownership. Saurabh Gupta grounded that shift in more constrained environments. “Even if AI is involved in generating outputs, there is always a clearly defined owner for the final decision,” he said. Ownership becomes more explicit as output increases.

Without a deep understanding of the system, it becomes difficult to assess whether what the AI produces is correct or aligned with intent. Jason Davenport put it more directly. “It’s less about having written every line of code yourself, and more about whether you understand what was produced and whether you can stand behind it.”

Work that once felt junior becomes more context-heavy, and decision-making moves closer to the individual engineer. The gap between producing output and validating it becomes a defining constraint of the workflow.

Ravi Bellur framed this from an organizational angle. He explained that when teams adopt AI without adjusting how work is validated, the pressure shifts silently to individuals. Engineers are expected to absorb more responsibility, often without the systems or context to support it.

In an AI-driven workflow, correctness is no longer guaranteed by the act of writing the code. It depends on the quality of the judgment applied. That’s what makes this shift different from previous productivity gains. AI doesn’t reduce the need for engineering expertise, as many assume. It concentrates it in fewer, more critical decisions.

Most Teams Are Stuck Between Pilots and Production

Across the conversation, there was little disagreement about where most organizations stand today. That reality showed up in the audience as well. During a live poll, most respondents placed themselves in exploration or pilot stages, with only a small portion scaling AI into production. The pattern wasn’t surprising. It mirrored what the panel described.

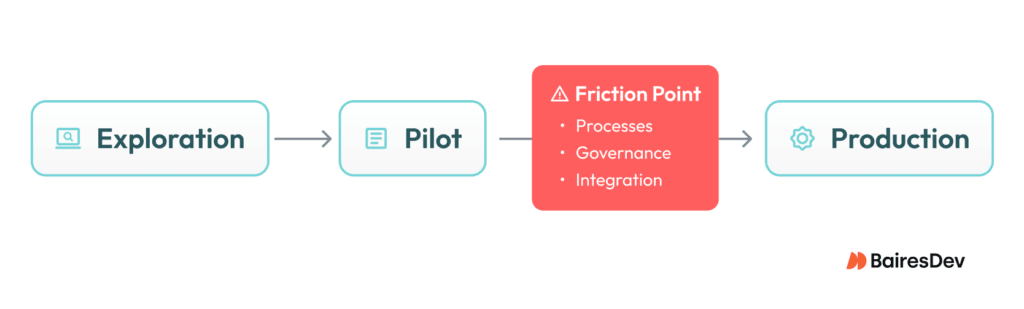

As discussed in the panel, pilots are producing results in isolation. The challenge begins when teams try to scale them. Jason pointed to this transition directly. “The challenge is making the transition from pilots to production. That’s where things tend to slow down.” What’s notable is where that slowdown comes from. “It’s usually not because of the technology itself, but because of everything around it — processes, governance, integration.”

That friction becomes visible as teams extend early wins. What works in isolation proves insufficient under real operating conditions. Output increases, but review cycles, validation practices, and ownership structures don’t catch up. The system starts to absorb more than it was designed to handle.

In regulated environments, that pressure is even more visible.

Saurabh Gupta emphasized that moving into production requires a level of rigor many organizations underestimate. Systems don’t move forward without validation, risk assessment, and compliance review. “Before something can move into production, it needs to go through a series of checks,” he explained.
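To make that idea concrete, here is a minimal sketch of what a pre-production gate could look like in code. It is an illustration only, not something presented in the webinar: the `ReleaseCandidate` class, the `REQUIRED_CHECKS` names, and the `can_promote` function are all hypothetical, chosen to mirror the checks Saurabh listed (validation, risk assessment, compliance review) plus a named owner.

```python
from dataclasses import dataclass, field

# Hypothetical check names, mirroring the three checks mentioned in the text.
REQUIRED_CHECKS = ("validation", "risk_assessment", "compliance_review")


@dataclass
class ReleaseCandidate:
    """An AI-assisted change waiting to move into production (illustrative)."""
    name: str
    owner: str  # the person accountable for the final decision
    completed_checks: set = field(default_factory=set)


def missing_checks(candidate: ReleaseCandidate) -> list:
    """Return the required checks that have not been completed yet."""
    return [c for c in REQUIRED_CHECKS if c not in candidate.completed_checks]


def can_promote(candidate: ReleaseCandidate) -> bool:
    """A candidate moves forward only with a named owner and every check done."""
    return bool(candidate.owner) and not missing_checks(candidate)


candidate = ReleaseCandidate(
    name="fraud-scoring-assistant",  # made-up example name
    owner="jane.doe",                # made-up example owner
    completed_checks={"validation", "risk_assessment"},
)
print(missing_checks(candidate))  # the compliance review is still outstanding
print(can_promote(candidate))
```

The point of a gate like this is less the code than the explicitness: the required checks and the accountable owner are written down in one place, so nothing reaches production by default.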

Data readiness, evaluation practices, governance models, and cross-team coordination all start to matter at once. The bottleneck doesn’t sit in one place; it shows up across the operating model. That’s why scaling AI depends on adapting the environment it runs in.

Without that shift, progress slows, fragments, and eventually stalls.

Accountability, Governance, and Trust Define What Works in Production

As teams move from experimentation into production, control is the limiting factor. AI systems generate more output, introduce more variability, and operate across more parts of the system than traditional software. That makes validation, ownership, and oversight more critical, not less.

Saurabh Gupta approached this from the perspective of operating in regulated environments, where those constraints are already built into the system. His point was simple, but difficult to operationalize. “Even if AI is involved in generating outputs, there is always a clearly defined owner for the final decision.”

That clarity becomes essential as systems become more complex. When outputs are no longer fully deterministic, teams can’t rely on process alone to guarantee correctness. They need to understand how decisions are made, where responsibility sits, and how to trace outcomes back to their source. That’s where governance moves from policy to practice.

Jason Davenport emphasized the role of continuous evaluation in that shift. AI systems don’t behave the same way every time, which means teams need to actively monitor performance, detect degradation, and refine outputs over time. Validation becomes continuous.
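As a rough illustration of continuous validation, the sketch below tracks a rolling window of evaluation scores and flags degradation when the recent average falls below a baseline. Everything here is an assumption made for the example: the `RollingEvalMonitor` class, the score scale, the window size, and the tolerance are hypothetical, and a real team would plug in its own evaluation metric and thresholds.

```python
from collections import deque
from statistics import mean
from typing import Optional


class RollingEvalMonitor:
    """Tracks recent evaluation scores and flags a drop below a baseline."""

    def __init__(self, baseline: float, window: int = 5, tolerance: float = 0.05):
        self.baseline = baseline      # score the system is expected to hold
        self.tolerance = tolerance    # how far below baseline is acceptable
        self.scores = deque(maxlen=window)  # keeps only the latest `window` scores

    def record(self, score: float) -> None:
        """Add the score from the latest evaluation run."""
        self.scores.append(score)

    def rolling_mean(self) -> Optional[float]:
        """Average of the scores in the window, or None if nothing is recorded."""
        return mean(self.scores) if self.scores else None

    def is_degraded(self) -> bool:
        """True once the window is full and its average is below the threshold."""
        if len(self.scores) < self.scores.maxlen:
            return False  # not enough data to judge yet
        return mean(self.scores) < self.baseline - self.tolerance


monitor = RollingEvalMonitor(baseline=0.90, window=3, tolerance=0.05)
for score in (0.90, 0.88, 0.91, 0.70, 0.70):  # made-up scores for illustration
    monitor.record(score)
    print(score, monitor.is_degraded())
```

A rolling window is only one simple option. It smooths out single bad runs, at the cost of reacting a little later when quality genuinely drops.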

In more constrained environments, that same idea takes a more structured form. Saurabh pointed out that systems don’t move into production without scrutiny. That scrutiny exists to ensure that decisions can be explained, audited, and defended when needed. That requirement doesn’t only apply to regulated industries.

As AI becomes more embedded in core workflows, every organization faces a version of the same question. Not just whether the system works, but whether its outputs can be trusted. And that trust is built through clarity in ownership of decisions, visibility into how outputs are produced, and clear processes for evaluating and improving system behavior over time.

Without those elements, scaling AI introduces risk faster than it creates value.

The Shift Is Underway

The throughline across this conversation is that AI-first engineering is not constrained by model capability or tooling maturity. The friction shows up in how organizations operate around it. Workflows that don’t adapt, ownership that isn’t clearly defined, and validation practices that don’t scale become the limiting factors.

What the panel made clear is that this shift requires organizations to rethink how work moves, how decisions are made, and how accountability is enforced.

Watch the full webinar, including the audience Q&A where the panelists go deeper on scaling AI in production, evolving engineering roles, and the governance structures required to support them. If you’re ready to scale AI in your organization, reach out to our team. We’re ready to help you move forward.